I have a workflow split into two separate workflows. Workflow 1 calls Workflow 2 after each batch (batch size is 1). Essentially, Workflow 1 fetches URLs from a Google Sheet one at a time, and Workflow 2 runs some functions on the HTTP response and saves the result to MongoDB.
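To make the structure concrete, here is a rough model of the two workflows in plain Python. This is not n8n code. The names `run_parent`, `run_child`, `fetch` and `save` are hypothetical stand-ins for the Google Sheets, HTTP Request and MongoDB nodes, and placing the HTTP request inside Workflow 2 is an assumption. The child returns only a small status record rather than the whole response, which keeps what the parent accumulates per item small.

```python
from __future__ import annotations

from typing import Callable, Iterable

# Hypothetical stand-ins for n8n nodes: `fetch` plays the HTTP Request node,
# `save` plays the MongoDB insert. Neither is a real n8n API.
Fetch = Callable[[str], str]
Save = Callable[[dict], None]


def run_child(url: str, fetch: Fetch, save: Save) -> dict:
    """Model of Workflow 2: handle one URL, transform the response, persist it."""
    body = fetch(url)
    # Placeholder for "some functions" applied to the HTTP response.
    doc = {"url": url, "length": len(body)}
    save(doc)
    # Hand back only a small status record, not the full response body.
    return {"url": url, "ok": True}


def run_parent(urls: Iterable[str], fetch: Fetch, save: Save) -> list[dict]:
    """Model of Workflow 1: walk the sheet rows with a batch size of 1."""
    results = []
    for url in urls:  # each iteration is one batch containing one item
        results.append(run_child(url, fetch, save))
    return results


if __name__ == "__main__":
    saved: list[dict] = []
    statuses = run_parent(
        ["https://example.com/a", "https://example.com/b"],
        fetch=lambda url: "<html></html>",
        save=saved.append,
    )
    print(statuses)
    print(saved)
```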

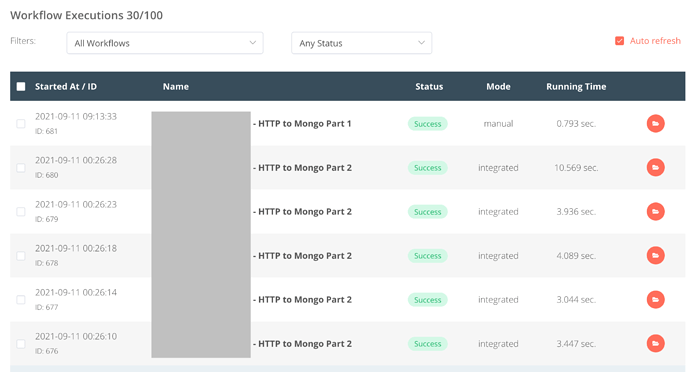

However, it only processes 10-50 items and then stops at a seemingly random point. The execution history does not show any incomplete or failed executions:

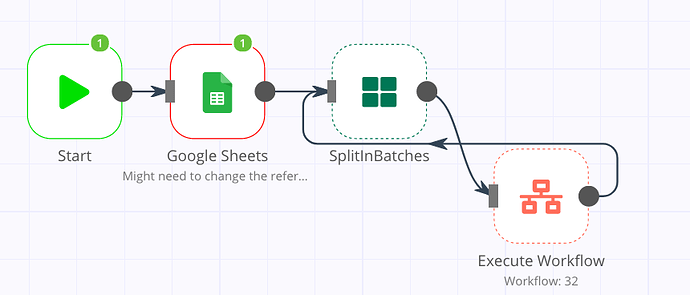

Also, the execution diagram of Workflow 1 suggests that the Split In Batches node is not activating correctly (there is no green number icon showing that it has executed).

The container logs are not timestamped, but they show the following (the memory errors may be related to an earlier issue from before the workflows were split):

```
The session "lvlkfvtx59" is not registred.
The session "lvlkfvtx59" is not registred.

<--- Last few GCs --->

[23:0x55e79d28df80] 248863 ms: Scavenge 464.3 (486.3) -> 463.8 (490.3) MB, 13.7 / 0.0 ms (average mu = 0.566, current mu = 0.402) allocation failure
[23:0x55e79d28df80] 248899 ms: Scavenge 467.7 (490.3) -> 467.8 (490.3) MB, 4.4 / 0.0 ms (average mu = 0.566, current mu = 0.402) allocation failure
[23:0x55e79d28df80] 248907 ms: Scavenge 467.8 (490.3) -> 467.3 (494.3) MB, 8.9 / 0.0 ms (average mu = 0.566, current mu = 0.402) allocation failure

<--- JS stacktrace --->

FATAL ERROR: MarkCompactCollector: young object promotion failed Allocation failed - JavaScript heap out of memory

The session "lvlkfvtx59" is not registred.
```
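The `Last few GCs` lines are easier to compare once the numbers are pulled out. The sketch below assumes the usual V8 trace layout, `<object size> (<heap size>) -> <object size> (<heap size>) MB`, and that the `ms` field counts from when the Node.js process (isolate) started. Both are my reading of the format, not something these logs state. On the three lines above, it shows live objects filling roughly 95% of the heap just before the fatal error. It also shows that `248863 ms` is about four minutes after startup, so the GC lines do carry a relative time even though the log has no wall-clock timestamps. `parse_gc_line` is a hypothetical helper, not part of n8n or Node.js.

```python
import re
from typing import Optional

# Matches V8 GC trace lines such as:
# [23:0x55e79d28df80] 248863 ms: Scavenge 464.3 (486.3) -> 463.8 (490.3) MB, ...
GC_LINE = re.compile(
    r"\]\s+(?P<ms>\d+) ms: (?P<kind>[\w-]+) "
    r"(?P<before>[\d.]+) \((?P<before_total>[\d.]+)\) -> "
    r"(?P<after>[\d.]+) \((?P<after_total>[\d.]+)\) MB"
)


def parse_gc_line(line: str) -> Optional[dict]:
    """Return heap figures from one GC trace line, or None for other log lines."""
    m = GC_LINE.search(line)
    if m is None:
        return None
    after = float(m["after"])
    heap = float(m["after_total"])
    return {
        "seconds_since_start": int(m["ms"]) / 1000,  # assumed: ms since isolate start
        "kind": m["kind"],
        "after_mb": after,  # assumed: live object size after this GC
        "heap_mb": heap,  # assumed: total heap size after this GC
        "fill": after / heap,
    }


LOG = """\
The session "lvlkfvtx59" is not registred.
[23:0x55e79d28df80] 248863 ms: Scavenge 464.3 (486.3) -> 463.8 (490.3) MB, 13.7 / 0.0 ms (average mu = 0.566, current mu = 0.402) allocation failure
[23:0x55e79d28df80] 248899 ms: Scavenge 467.7 (490.3) -> 467.8 (490.3) MB, 4.4 / 0.0 ms (average mu = 0.566, current mu = 0.402) allocation failure
[23:0x55e79d28df80] 248907 ms: Scavenge 467.8 (490.3) -> 467.3 (494.3) MB, 8.9 / 0.0 ms (average mu = 0.566, current mu = 0.402) allocation failure
"""

if __name__ == "__main__":
    for line in LOG.splitlines():
        gc = parse_gc_line(line)
        if gc is not None:
            print(
                f"{gc['seconds_since_start']:.1f}s {gc['kind']}: "
                f"{gc['after_mb']} of {gc['heap_mb']} MB ({gc['fill']:.0%})"
            )
```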