I’ve created a workflow that queries the Twitter API for user info by username. There are 2 options:

(1) Query the API 1 user at a time

(2) Query the API with up to 100 users, in a comma separated list, at a time

Option 2 is clearly the more efficient one: it needs up to 100 times fewer requests, so I won’t hit the API limits as quickly.

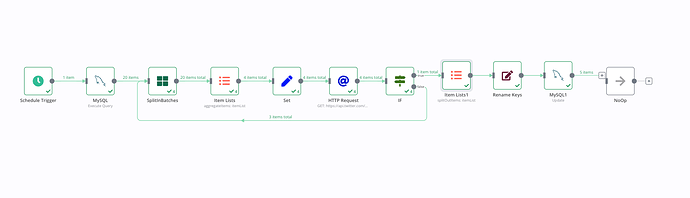

So I’ve set up the following workflow: query the Twitter usernames from MySQL, SplitInBatches of 100 each, aggregate the username fields, set the usernames as a comma separated list, GET from the API, remove “data” from the response so the keys can be renamed, and finally update MySQL with the data received from the Twitter API:

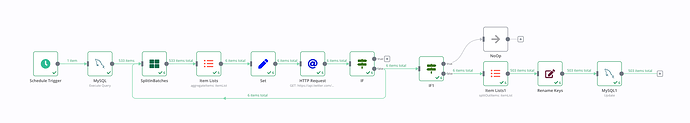
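For anyone who wants to see the batching logic outside of the nodes, here is a minimal sketch of what the SplitInBatches, aggregate and set steps are meant to produce together. It is not the workflow itself: `toUsernameBatches` is a hypothetical helper name, and the sample usernames are made up.

```typescript
// Hypothetical helper (not part of n8n or the Twitter API): split a list of
// usernames into comma separated strings of at most `size` names each.
function toUsernameBatches(usernames: string[], size: number = 100): string[] {
  if (size < 1 || Math.floor(size) !== size) {
    throw new Error("size must be a positive integer");
  }
  const batches: string[] = [];
  for (let start = 0; start < usernames.length; start += size) {
    // slice() stops at the end of the array, so the last batch may be shorter.
    batches.push(usernames.slice(start, start + size).join(","));
  }
  return batches;
}

// Made-up sample data standing in for the rows returned by the 1st MySQL step.
const sampleUsernames: string[] = [];
for (let i = 0; i < 250; i++) {
  sampleUsernames.push("user" + i);
}

// 250 usernames become 3 comma separated lists (100, 100 and 50 names).
const sampleBatches: string[] = toUsernameBatches(sampleUsernames);
```

Each string in `sampleBatches` would be the value sent in one GET request, so a list of 600 usernames needs 6 requests this way instead of 600.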

This works until the 1st MySQL step returns about 200-300 items, which is 2-3 batches. Beyond that it hangs, the browser crashes, and I can’t view the execution.

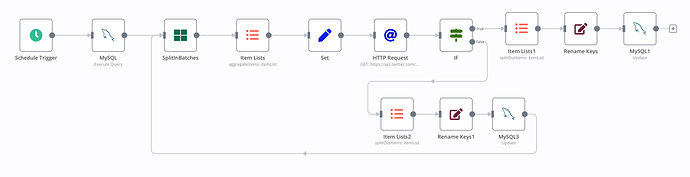

As an alternative, I created this, which uses option (1), above. It works flawlessly with as many as 600 items returned from the 1st MySQL step (that’s the size of my list, so I can’t test bigger numbers).

But this approach has the disadvantage of eating up my API limits very quickly.

I have replicated the problem in both the cloud and self-hosted n8n, so I don’t believe it’s an issue with server load or something similar. Email support told me that version 0.201.0 solved this and asked me to let them know if I was still having trouble after upgrading to 0.201.0 in the cloud. The upgrade did not solve it, and when I reported that, they replied that I needed to come to the forums, so here I am.

I would very much like to be able to run this with option 2, getting 100 users at a time. Any ideas on how to make it work without crashing would be appreciated.