Describe the problem/error/question

The tool definition in the request body sent by the agent:

{
  "type": "function",
  "function": {
    "name": "get_start_skill",
    "description": "Method description: load the specified Skill",
    "parameters": {
      "type": "object",
      "properties": {
        "skill_name": {
          "type": "string",
          "description": "Name of the Skill"
        }
      },
      "required": [
        "skill_name"
      ],
      "additionalProperties": false,
      "$schema": "http://json-schema.org/draft-07/schema#"
    }
  }
}

The function call in the LLM response is:

{
  "tool_calls": [
    {
      "index": 0,
      "id": "get_start_skill:0",
      "type": "function",
      "function": {
        "name": "get_start_skill",
        "arguments": "{\"skill_name\":\"端口调峰\"}"
      }
    }
  ]
}
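The call returned by the LLM is consistent with the tool definition. A minimal Python sketch of that check follows. It only looks at the function name, `required`, and `additionalProperties`. The helper name `check_tool_call` is made up for illustration and is not part of n8n.

```python
import json

# Tool definition from the request body, trimmed to the fields used here.
TOOL = {
    "type": "function",
    "function": {
        "name": "get_start_skill",
        "parameters": {
            "type": "object",
            "properties": {"skill_name": {"type": "string"}},
            "required": ["skill_name"],
            "additionalProperties": False,
        },
    },
}


def check_tool_call(tool: dict, call: dict) -> list[str]:
    """Hypothetical helper: list mismatches between a tool call and its definition."""
    problems = []
    fn = tool["function"]
    if call["function"]["name"] != fn["name"]:
        problems.append(f"name {call['function']['name']!r} != {fn['name']!r}")
    # "arguments" is a JSON-encoded string, not an object.
    args = json.loads(call["function"]["arguments"])
    params = fn["parameters"]
    for key in params.get("required", []):
        if key not in args:
            problems.append(f"missing required argument {key!r}")
    if params.get("additionalProperties") is False:
        for key in args:
            if key not in params["properties"]:
                problems.append(f"unexpected argument {key!r}")
    return problems


llm_call = {
    "index": 0,
    "id": "get_start_skill:0",
    "type": "function",
    "function": {
        "name": "get_start_skill",
        "arguments": '{"skill_name":"端口调峰"}',
    },
}
print(check_tool_call(TOOL, llm_call))  # prints []
```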

But after the MCP tool is called, the messages produced by the AI Agent node (version 3) are:

[
  {
    "role": "assistant",
    "content": "",
    "tool_calls": [
      {
        "id": "get_start_skill:0",
        "type": "function",
        "function": {
          "name": "query_rag",
          "arguments": "{\"skill_name\":\"端口调峰\",\"tool\":\"get_start_skill\",\"id\":\"get_start_skill: 0\"}"
        }
      }
    ]
  },
  {
    "role": "tool",
    "content": "[{\"response\":[{\"type\":\"text\",\"text\":\"{\\\"error_info\\\":\\\"\\\",\\\"data\\\":\\\"aaa\\\"}\"}]}]",
    "tool_call_id": "get_start_skill:0"
  }
]

This request makes the LLM respond with the same tool call again.
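One way to spot the problem in a captured request is to compare every tool call name in the message history with the names declared in the tool list. The sketch below does that for the version 3 messages. `unknown_tool_calls` is a hypothetical helper, and the tool list is assumed to contain only `get_start_skill`, as in the request shown earlier.

```python
def unknown_tool_calls(messages: list[dict], tool_names: set[str]) -> list[str]:
    """Hypothetical helper: names of tool calls in the history that no declared tool matches."""
    unknown = []
    for message in messages:
        # Only assistant messages carry "tool_calls"; others yield an empty list.
        for call in message.get("tool_calls", []):
            name = call["function"]["name"]
            if name not in tool_names:
                unknown.append(name)
    return unknown


# Message history as produced by AI Agent version 3 (tool content shortened).
v3_messages = [
    {
        "role": "assistant",
        "content": "",
        "tool_calls": [
            {
                "id": "get_start_skill:0",
                "type": "function",
                "function": {
                    "name": "query_rag",
                    "arguments": '{"skill_name":"端口调峰","tool":"get_start_skill","id":"get_start_skill: 0"}',
                },
            }
        ],
    },
    {"role": "tool", "content": "...", "tool_call_id": "get_start_skill:0"},
]

print(unknown_tool_calls(v3_messages, {"get_start_skill"}))  # prints ['query_rag']
```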

When I change the AI Agent node to version 2.2, the messages are:

[
  {
    "role": "assistant",
    "content": "",
    "tool_calls": [
      {
        "id": "get_start_skill:0",
        "type": "function",
        "function": {
          "name": "get_start_skill",
          "arguments": "{\"skill_name\":\"端口调峰\"}"
        }
      }
    ]
  },
  {
    "role": "tool",
    "content": "[{\"type\":\"text\",\"text\":\"{\\\"error_info\\\":\\\"\\\",\\\"data\\\":\\\"aaa\\\"}\"}]",
    "tool_call_id": "get_start_skill:0"
  }
]

It appears that after AI Agent version 3 calls an MCP tool through the MCP Client tool, the subsequent request contains multiple layers of nesting. As a result, the LLM fails to recognize that the agent has already called the tool as required (the function name in the nested structure, query_rag, does not match the one in the tool list, get_start_skill), so it responds with the previous tool call again.
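To make the difference between the two versions concrete, here is a rough Python sketch that maps the version 3 shape back to the version 2.2 shape. It is only an illustration of the nesting, not a description of how n8n works internally. Both function names are hypothetical, and the sketch assumes that the `tool` key inside the arguments always holds the original function name and that `id` is wrapper metadata.

```python
import json


def unwrap_assistant_call(call: dict) -> dict:
    """Hypothetical: restore the original tool name from the nested arguments."""
    args = json.loads(call["function"]["arguments"])
    if "tool" not in args:
        return call  # already in the flat (version 2.2) shape
    real_name = args.pop("tool")  # assumed to be the original function name
    args.pop("id", None)          # assumed to be wrapper metadata
    return {
        **call,
        "function": {
            "name": real_name,
            "arguments": json.dumps(args, ensure_ascii=False, separators=(",", ":")),
        },
    }


def unwrap_tool_content(content: str) -> str:
    """Hypothetical: flatten [{"response": [...]}] to [...]; leave other content as is."""
    parsed = json.loads(content)
    if (
        isinstance(parsed, list)
        and len(parsed) == 1
        and isinstance(parsed[0], dict)
        and "response" in parsed[0]
    ):
        return json.dumps(parsed[0]["response"], ensure_ascii=False, separators=(",", ":"))
    return content


v3_call = {
    "id": "get_start_skill:0",
    "type": "function",
    "function": {
        "name": "query_rag",
        "arguments": '{"skill_name":"端口调峰","tool":"get_start_skill","id":"get_start_skill: 0"}',
    },
}
flat = unwrap_assistant_call(v3_call)
print(flat["function"]["name"], flat["function"]["arguments"])
```

With the unwrapped name and arguments, the assistant message matches the tool list again, which is the shape version 2.2 sends.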

What is the error message (if any)?

There is no error message. AI Agent version 3 keeps calling the same tool because the LLM always responds with the tool call get_start_skill.

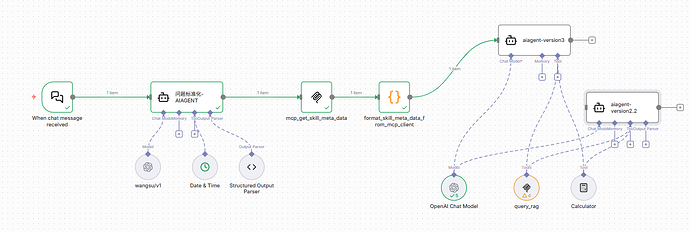

Please share your workflow

(Select the nodes on your canvas and use the keyboard shortcuts CMD+C/CTRL+C and CMD+V/CTRL+V to copy and paste the workflow.)

Share the output returned by the last node

Information on your n8n setup

- n8n version: 1.123.20

- Database (default: SQLite):

- n8n EXECUTIONS_PROCESS setting (default: own, main):

- Running n8n via (Docker, npm, n8n cloud, desktop app):

- Operating system: