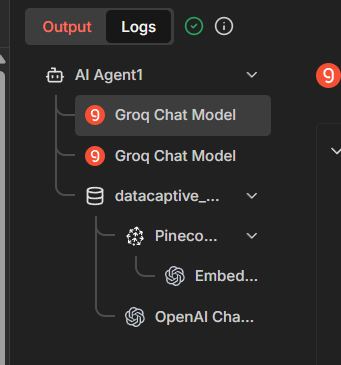

I'm trying to create a RAG chatbot, but the AI Agent node isn't using the tool response to form its reply at all.

When I check the logs, the AI Agent shows the following output. Normally there should be one more AI model call after the Pinecone Vector DB response, but the agent sends the response from Groq before the tool call. I tried 4o-mini and 4.1-mini as well, and it's still the same.
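To make the expected order of calls concrete, here is a minimal sketch of the loop I would expect an agent to run: model call, tool call, then a second model call that sees the tool result. This is not n8n's implementation; `fake_model`, `fake_vector_tool`, and `run_agent` are hypothetical stand-ins I made up for illustration.

```python
from typing import Callable


def fake_model(messages: list[dict]) -> dict:
    """Hypothetical stand-in for the chat model attached to the agent."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if tool_results:
        # A tool result is already in the conversation: answer from it.
        return {
            "role": "assistant",
            "content": f"Based on the docs: {tool_results[-1]['content']}",
            "tool_call": None,
        }
    # No tool result yet: request a vector store lookup first.
    question = messages[-1]["content"]
    return {
        "role": "assistant",
        "content": "",
        "tool_call": {"name": "vector_search", "query": question},
    }


def fake_vector_tool(query: str) -> str:
    """Hypothetical stand-in for the vector store tool."""
    return f"[retrieved text for: {query}]"


def run_agent(
    question: str,
    model: Callable[[list[dict]], dict],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> tuple[str, int]:
    """Run the loop and return the final answer plus the number of model calls."""
    messages = [{"role": "user", "content": question}]
    model_calls = 0
    for _ in range(max_steps):
        reply = model(messages)
        model_calls += 1
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            # No further tool request: this reply is the final answer.
            return reply["content"], model_calls
        # Execute the requested tool and feed the result back to the model.
        result = tools[call["name"]](call["query"])
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


answer, calls = run_agent(
    "What services do you offer?",
    fake_model,
    {"vector_search": fake_vector_tool},
)
print(calls, answer)
```

In this sketch the final answer is only produced on the second model call, after the tool result has been appended to the conversation. In my logs that second call is the one that seems to be missing.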

Please share your workflow

{

"nodes": [

{

"parameters": {

"toolDescription": "Use this tool to escalate the chat to human agent or when chat user wants to talk with human",

"method": "POST",

"url": "=https://chat.datacaptive.com/api/v1/accounts/{{ $('Switch').first().json.account_id }}/conversations/{{ $('Switch').first().json.conversation_id }}/assignments",

"sendHeaders": true,

"headerParameters": {

"parameters": [

{

"name": "api_access_token",

"value": "uGpgSTU9TjgijPpxs5THKH9S"

},

{

"name": "Content-Type",

"value": "application/json"

}

]

},

"sendBody": true,

"bodyParameters": {

"parameters": [

{

"name": "assignee_id",

"value": "={{ $json.assignee_id }}"

}

]

},

"options": {}

},

"type": "n8n-nodes-base.httpRequestTool",

"typeVersion": 4.3,

"position": [

1680,

-1488

],

"id": "ebdc9659-7419-409d-a29b-ddc3455a3dd1",

"name": "escalate_chat1"

},

{

"parameters": {

"options": {}

},

"type": "@n8n/n8n-nodes-langchain.embeddingsOpenAi",

"typeVersion": 1.2,

"position": [

2016,

-1104

],

"id": "c349dfd8-3182-45e5-b7c6-d08e981c7e10",

"name": "Embeddings OpenAI2",

"credentials": {

"openAiApi": {

"id": "f1zsMaki0s8Z0rBp",

"name": "cold outreach"

}

}

},

{

"parameters": {

"sendTo": "[email protected]",

"subject": "={{ /*n8n-auto-generated-fromAI-override*/ $fromAI('Subject', ``, 'string') }}",

"emailType": "text",

"message": "={{ /*n8n-auto-generated-fromAI-override*/ $fromAI('Message', ``, 'string') }}",

"options": {

"appendAttribution": false

}

},

"type": "n8n-nodes-base.gmailTool",

"typeVersion": 2.2,

"position": [

1808,

-1488

],

"id": "bf2a8bba-9c92-469e-9ef9-fe94e5f07762",

"name": "internal_team_email1",

"webhookId": "ee4373f8-abc9-415c-9197-beee5a6fa7fd",

"credentials": {

"gmailOAuth2": {

"id": "i5IpQUiaHUnmBpQZ",

"name": "amelia.williams - G Suite"

}

}

},

{

"parameters": {

"promptType": "define",

"text": "={{ $json.chatInput }}",

"options": {

"systemMessage": "=You are an AI assistant specialized in providing answers to user queries, collecting leads, and escalating to human agent when required. Your primary task is to answer questions accurately and precisely using Vector database, which contains relevant document (datacaptive_source_of_truth).\n\nOnly provide information that you retrieve from the documents (or verified expert knowledge). If something is not included in the dataset or is unclear, clearly state that you do not have sufficient information.\n\nStructure your responses:\n\n- Concise and to the point\n- Specific numbers and facts, when available\n- Clearly indicate which quarterly report (Q4 or Q4) the information comes from\n\nObjective:\nProvide users with reliable and quick details on DataCaptive Services using Vector Database"

}

},

"type": "@n8n/n8n-nodes-langchain.agent",

"typeVersion": 3.1,

"position": [

1616,

-1712

],

"id": "b5c19477-613b-42c7-882d-d8732d91046f",

"name": "AI Agent1"

},

{

"parameters": {

"pineconeIndex": {

"__rl": true,

"value": "datacaptivechatbot",

"mode": "list",

"cachedResultName": "datacaptivechatbot"

},

"options": {

"pineconeNamespace": "datacaptive_faqs"

}

},

"type": "@n8n/n8n-nodes-langchain.vectorStorePinecone",

"typeVersion": 1.3,

"position": [

1904,

-1344

],

"id": "40770c25-55ee-4836-b767-f178301bd6a6",

"name": "Pinecone Vector Store2",

"credentials": {

"pineconeApi": {

"id": "5RZn3AB32Lx0losC",

"name": "PineconeApi account"

}

}

},

{

"parameters": {

"model": {

"__rl": true,

"value": "gpt-4.1-mini",

"mode": "list",

"cachedResultName": "gpt-4.1-mini"

},

"builtInTools": {},

"options": {}

},

"type": "@n8n/n8n-nodes-langchain.lmChatOpenAi",

"typeVersion": 1.3,

"position": [

2224,

-1312

],

"id": "c306df86-712a-4c9d-844c-c97ec850faee",

"name": "OpenAI Chat Model2",

"credentials": {

"openAiApi": {

"id": "f1zsMaki0s8Z0rBp",

"name": "cold outreach"

}

}

},

{

"parameters": {

"description": "Use this tool for ANY question about DataCaptive — services, pricing, contact details, WhatsApp, phone numbers, emails, or company info. The text returned by this tool IS the final answer. Copy it directly into your response. Do not say you lack information if this tool returns any text."

},

"type": "@n8n/n8n-nodes-langchain.toolVectorStore",

"typeVersion": 1.1,

"position": [

2032,

-1520

],

"id": "ff5d20a7-563a-4aa6-bf0b-9cad52ce3f8e",

"name": "datacaptive_source_of_truth1",

"rewireOutputLogTo": "ai_tool"

},

{

"parameters": {

"options": {

"responseMode": "streaming"

}

},

"type": "@n8n/n8n-nodes-langchain.chatTrigger",

"typeVersion": 1.4,

"position": [

1392,

-1712

],

"id": "24c9ece3-a94f-4c99-8f09-ac16b4f96f52",

"name": "When chat message received",

"webhookId": "1de07b27-cf36-4723-a399-2aadd1bff896"

},

{

"parameters": {

"model": "openai/gpt-oss-120b",

"options": {}

},

"type": "@n8n/n8n-nodes-langchain.lmChatGroq",

"typeVersion": 1,

"position": [

1488,

-1504

],

"id": "58e91dc4-413f-437d-a3bb-1af68a6dc6d3",

"name": "Groq Chat Model",

"credentials": {

"groqApi": {

"id": "QMvQ7K9jrPZeYrr7",

"name": "Mohan - test"

}

}

}

],

"connections": {

"escalate_chat1": {

"ai_tool": [

[

{

"node": "AI Agent1",

"type": "ai_tool",

"index": 0

}

]

]

},

"Embeddings OpenAI2": {

"ai_embedding": [

[

{

"node": "Pinecone Vector Store2",

"type": "ai_embedding",

"index": 0

}

]

]

},

"internal_team_email1": {

"ai_tool": [

[

{

"node": "AI Agent1",

"type": "ai_tool",

"index": 0

}

]

]

},

"Pinecone Vector Store2": {

"ai_vectorStore": [

[

{

"node": "datacaptive_source_of_truth1",

"type": "ai_vectorStore",

"index": 0

}

]

]

},

"OpenAI Chat Model2": {

"ai_languageModel": [

[

{

"node": "datacaptive_source_of_truth1",

"type": "ai_languageModel",

"index": 0

}

]

]

},

"datacaptive_source_of_truth1": {

"ai_tool": [

[

{

"node": "AI Agent1",

"type": "ai_tool",

"index": 0

}

]

]

},

"When chat message received": {

"main": [

[

{

"node": "AI Agent1",

"type": "main",

"index": 0

}

]

]

},

"Groq Chat Model": {

"ai_languageModel": [

[

{

"node": "AI Agent1",

"type": "ai_languageModel",

"index": 0

}

]

]

}

},

"pinData": {

"internal_team_email1": [

{

"id": "19d3097fe691c366",

"threadId": "19d3097fe691c366",

"labelIds": [

"SENT"

]

}

]

},

"meta": {

"instanceId": "8d93ef0be34f9c124d5dd7b0d5c0ff15a59f0997cf7850da390ce35c4f4501b7"

}

}
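Since the JSON is long, here is a small sketch that summarizes which nodes feed into which, based only on the `connections` object of the export above. The function name `summarize_ai_connections` is my own, and `sample` is a trimmed copy of the connections from this workflow, not the full export.

```python
def summarize_ai_connections(workflow: dict) -> dict[str, dict[str, list[str]]]:
    """Map each target node to its incoming sources, grouped by connection type."""
    summary: dict[str, dict[str, list[str]]] = {}
    for source, outputs in workflow.get("connections", {}).items():
        for conn_type, branches in outputs.items():
            for branch in branches:
                for link in branch or []:
                    summary.setdefault(link["node"], {}).setdefault(conn_type, []).append(source)
    return summary


def to(node: str, conn_type: str) -> list[list[dict]]:
    """Build one outgoing connection in the same shape as the n8n export."""
    return [[{"node": node, "type": conn_type, "index": 0}]]


# Trimmed copy of the "connections" object from the workflow above.
sample = {
    "connections": {
        "escalate_chat1": {"ai_tool": to("AI Agent1", "ai_tool")},
        "internal_team_email1": {"ai_tool": to("AI Agent1", "ai_tool")},
        "datacaptive_source_of_truth1": {"ai_tool": to("AI Agent1", "ai_tool")},
        "Groq Chat Model": {"ai_languageModel": to("AI Agent1", "ai_languageModel")},
        "When chat message received": {"main": to("AI Agent1", "main")},
        "Embeddings OpenAI2": {"ai_embedding": to("Pinecone Vector Store2", "ai_embedding")},
        "Pinecone Vector Store2": {
            "ai_vectorStore": to("datacaptive_source_of_truth1", "ai_vectorStore")
        },
        "OpenAI Chat Model2": {
            "ai_languageModel": to("datacaptive_source_of_truth1", "ai_languageModel")
        },
    }
}

summary = summarize_ai_connections(sample)
for target, incoming in summary.items():
    print(target, incoming)
```

The summary shows two separate models in play: `Groq Chat Model` is attached to `AI Agent1`, while `OpenAI Chat Model2` is attached to the `datacaptive_source_of_truth1` vector store tool.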

Share the output returned by the last node

Information on your n8n setup

- n8n version:

- Database (default: SQLite):

- n8n EXECUTIONS_PROCESS setting (default: own, main):

- Running n8n via (Docker, npm, n8n cloud, desktop app): Docker

- Operating system: