I have a problem with a CSV file that has 40k+ rows…

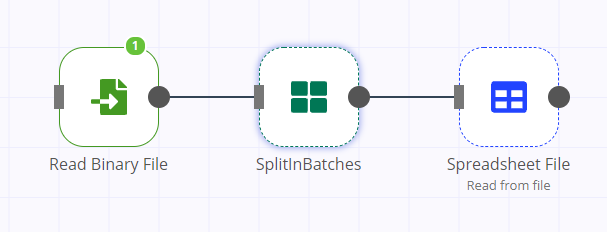

My setup is quite simple:

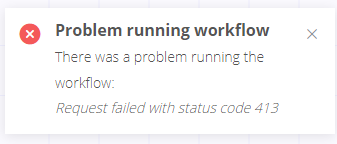

I can read the file, but when I try to load it into the Spreadsheet File node, it throws an error. I've read the forums and seen posts about "payload too large" issues, so I decided to split the data before sending it to the Spreadsheet File node…

However, I now get the same error with the SplitInBatches node. Is that expected? Is there no way to read a CSV file of this size without shrinking the original file externally first?

I am running on Ubuntu, without Docker.
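For comparison, outside n8n the usual way to handle a large CSV in batches is to stream it row by row, so that only one batch is held in memory at a time. The sketch below is plain Python using the standard `csv` module; it is not how n8n's SplitInBatches node works, and the function name `iter_csv_batches`, the batch size of 1000, and the sample data are all hypothetical choices for illustration.

```python
import csv
import io
from itertools import islice
from typing import Iterator, TextIO


def iter_csv_batches(f: TextIO, batch_size: int = 1000) -> Iterator[list[dict[str, str]]]:
    """Yield lists of at most batch_size rows, reading the CSV lazily.

    Hypothetical helper: csv.DictReader pulls rows from the file object on
    demand, and islice takes the next batch_size of them, so the whole file
    is never loaded into memory at once.
    """
    reader = csv.DictReader(f)
    while True:
        batch = list(islice(reader, batch_size))
        if not batch:  # reader is exhausted
            break
        yield batch


if __name__ == "__main__":
    # Hypothetical in-memory sample standing in for the real 40k-row file.
    sample = "id,name\n" + "".join(f"{i},row{i}\n" for i in range(2500))
    for batch in iter_csv_batches(io.StringIO(sample), batch_size=1000):
        print(len(batch))  # batch sizes: 1000, 1000, 500
```

With a real file, the same helper would be used as `with open("data.csv", newline="") as f:` followed by a loop over `iter_csv_batches(f)` (the filename is a placeholder). The point of the design is that splitting happens while reading, rather than after the entire file has already been parsed into one large payload.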