Is there a way to add a custom header to the Azure OpenAI Chat Model? I am using an “internal” version of OpenAI which requires a specific header on the API requests to track usage. Without it, requests fail with “BadRequest”.

I think the code in question is located here: n8n/packages/@n8n/nodes-langchain/nodes/llms/LmChatAzureOpenAi at b21140180e32c335ad0392adf618d33ba05e2838 · n8n-io/n8n · GitHub

and it constructs the model proxy (AzureChatOpenAI)

    // Create and return the model
    const model = new AzureChatOpenAI({
      azureOpenAIApiDeploymentName: modelName,
      ...modelConfig,
      ...options,
      timeout: options.timeout ?? 60000,
      maxRetries: options.maxRetries ?? 2,
      callbacks: [new N8nLlmTracing(this)],
      configuration: {
        fetchOptions: {
          dispatcher: getProxyAgent(),
        },
      },
      modelKwargs: options.responseFormat
        ? {
            response_format: { type: options.responseFormat },
          }
        : undefined,
      onFailedAttempt: makeN8nLlmFailedAttemptHandler(this),
    });

So I think that is this class: langchainjs/libs/providers/langchain-openai/src/azure/chat_models.ts at 1d95681731d5c09682350b84f6cbf1b6906f6761 · langchain-ai/langchainjs · GitHub, which looks like it supports headers:

    const defaultHeaders = normalizeHeaders(params.defaultHeaders);
    params.defaultHeaders = {
      ...params.defaultHeaders,
      "User-Agent": defaultHeaders["User-Agent"]
        ? `langchainjs-azure-openai/2.0.0 (${env})${defaultHeaders["User-Agent"]}`
        : `langchainjs-azure-openai/2.0.0 (${env})`,
    };
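To make the behavior of that snippet concrete, here is a simplified stand-in for the merge it performs. This is only a sketch: `mergeUserAgent` and `HeaderMap` are hypothetical names, and the library's `normalizeHeaders` step is left out, so it assumes the headers are already a plain object. It suggests that caller-supplied headers are carried through and only `User-Agent` is rewritten.

```typescript
// Hypothetical alias for a plain header object.
type HeaderMap = Record<string, string>;

// Simplified stand-in for the merge in the quoted snippet (normalizeHeaders omitted):
// caller headers are spread first, then "User-Agent" is set or prefixed.
function mergeUserAgent(callerHeaders: HeaderMap, env: string): HeaderMap {
  const base = `langchainjs-azure-openai/2.0.0 (${env})`;
  const existing = callerHeaders["User-Agent"];
  return {
    ...callerHeaders,
    "User-Agent": existing ? `${base}${existing}` : base,
  };
}

// A custom header such as "x-custom-header" is kept alongside the generated User-Agent.
const mergedHeaders = mergeUserAgent({ "x-custom-header": "SOME_VALUE" }, "node");
console.log(mergedHeaders);
```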

Linking the documentation which does discuss custom headers:

    const llmWithCustomHeaders = new AzureChatOpenAI({
      azureOpenAIApiKey: process.env.AZURE_OPENAI_API_KEY, // In Node.js defaults to process.env.AZURE_OPENAI_API_KEY
      azureOpenAIApiInstanceName: process.env.AZURE_OPENAI_API_INSTANCE_NAME, // In Node.js defaults to process.env.AZURE_OPENAI_API_INSTANCE_NAME
      azureOpenAIApiDeploymentName: process.env.AZURE_OPENAI_API_DEPLOYMENT_NAME, // In Node.js defaults to process.env.AZURE_OPENAI_API_DEPLOYMENT_NAME
      azureOpenAIApiVersion: process.env.AZURE_OPENAI_API_VERSION, // In Node.js defaults to process.env.AZURE_OPENAI_API_VERSION
      configuration: {
        defaultHeaders: {
          "x-custom-header": `SOME_VALUE`,
        },
      },
    });

I am guessing you could take the header bits from n8n/packages/nodes-base/nodes/HttpRequest/V3/Description.ts:

and paste them into the LmChatAzureOpenAi properties: n8n/packages/@n8n/nodes-langchain/nodes/llms/LmChatAzureOpenAi/properties.ts:

and reference them from LmChatAzureOpenAi:

but I really don’t know - just guessing at this a bit. Would love some guidance here.
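As a rough illustration of that idea, the sketch below converts name/value pairs (the shape the HTTP Request node collects for headers) into a `defaultHeaders` map and merges it into the `configuration` object passed to `AzureChatOpenAI`. Everything here is an assumption rather than actual n8n code: `HeaderEntry`, `CustomHeadersOption`, `ClientConfiguration`, `toDefaultHeaders`, and `withDefaultHeaders` are hypothetical names, and the real node option may end up structured differently.

```typescript
// Hypothetical shape of a "custom headers" node option: a list of name/value pairs.
interface HeaderEntry {
  name: string;
  value: string;
}

interface CustomHeadersOption {
  parameters?: HeaderEntry[];
}

// Hypothetical subset of the `configuration` object handed to the model constructor.
interface ClientConfiguration {
  fetchOptions?: Record<string, unknown>;
  defaultHeaders?: Record<string, string>;
}

// Turn the name/value pairs into a plain header map, skipping entries with a blank name.
function toDefaultHeaders(option?: CustomHeadersOption): Record<string, string> {
  const headers: Record<string, string> = {};
  for (const { name, value } of option?.parameters ?? []) {
    const key = name.trim();
    if (key !== "") headers[key] = value;
  }
  return headers;
}

// Merge the headers into an existing configuration, keeping fields such as fetchOptions.
// When no headers are supplied, the configuration is returned unchanged.
function withDefaultHeaders(
  configuration: ClientConfiguration,
  option?: CustomHeadersOption,
): ClientConfiguration {
  const headers = toDefaultHeaders(option);
  if (Object.keys(headers).length === 0) return configuration;
  return {
    ...configuration,
    defaultHeaders: { ...configuration.defaultHeaders, ...headers },
  };
}
```

In the node, the call might then look like `configuration: withDefaultHeaders({ fetchOptions: { dispatcher: getProxyAgent() } }, options.customHeaders)`, where `options.customHeaders` is a hypothetical option name that would also need to be declared in properties.ts.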

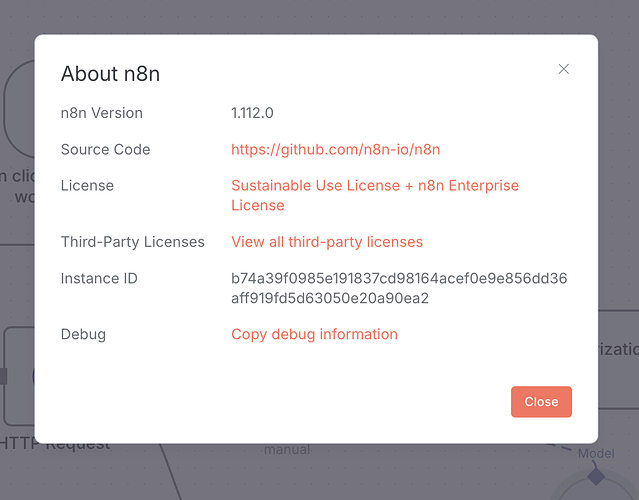

So I installed 1.112.0

npm install -g n8n@1.112.0

because I was pointed towards this feat(OpenAI Node): Support custom headers for model requests by mgosal · Pull Request #17835 · n8n-io/n8n · GitHub

github-actions bot mentioned this pull request on Sep 15

And confirmed it:

I guess maybe I am confusing AzureOpenAi and OpenAI in this ecosystem… maybe? @mgosal @idirouhab any chance you can weigh in here?

Hey, Todd!

Unfortunately, custom headers are only supported by the OpenAI Chat Model node. You can still use that node for OpenAI-compatible API providers by overriding the base URL in the OpenAI credentials.

But as I understand it, your internal deployment is on Azure, and the Azure connection is a bit different from regular OpenAI-compatible APIs, so it requires the Azure OpenAI Chat Model node (and the underlying AzureChatOpenAI class from LangChain).

If you use self-hosted n8n, you can try the LangChain Code node as a workaround. This node allows you to write custom implementations for AI nodes, including models.

Here’s a brief example to get started: