Hi everyone,

I am using n8n for the first time. I am creating a local RAG chat agent at my company to retrieve information from our internal knowledge base.

I deployed n8n locally with the self-hosted starter kit, along with Qdrant, Postgres, and Open WebUI, which I added to the Docker Compose file.

To start with, I am trying to load a few PDF documents stored on my computer, then parse, split, and vectorize them.

Below is the first workflow I created, which seemed the most intuitive. I use the « Read/Write Files from Disk » node to read all the PDF documents in a local folder, then the « Extract from File (From PDF) » node to parse them. The « Type of Data » parameter of the « Default Data Loader » is set to « JSON ». With this setup, all of the documents' metadata is treated as text and vectorized, as shown in the screenshot below.

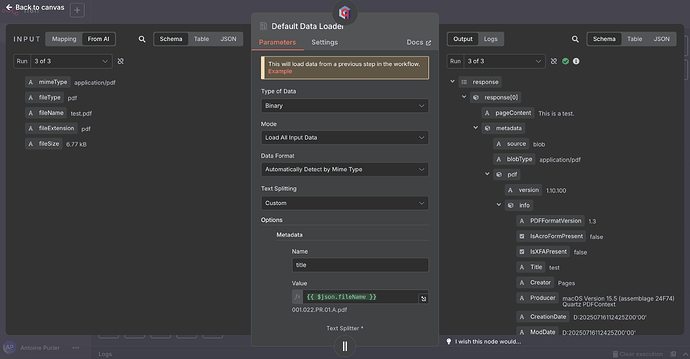

I then tried removing the « Extract from File (From PDF) » node and setting the « Type of Data » parameter of the « Default Data Loader » to « Binary ». This second workflow is shown below.

I also added a metadata entry named « title », whose value is the « fileName » output of the « Read/Write Files from Disk » node (see first screenshot below).

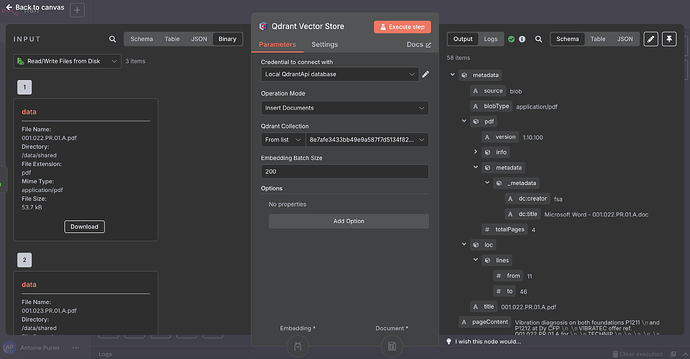

With this setup, my metadata is no longer treated as text, so it is no longer vectorized. I can see my « title » field as top-level metadata, as shown in the second screenshot below. But I still get a lot of other metadata fields and subfields. I would prefer to control exactly which metadata I keep.
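To make that last point concrete, here is a minimal Python sketch of the kind of filtering I have in mind. It is not n8n code, just an illustration of the result I am after. The `keep_metadata` helper and the field names in `chunk_metadata` are hypothetical, only loosely modelled on what I see stored next to each vector.

```python
from typing import Any


def keep_metadata(metadata: dict[str, Any], allowed: set[str]) -> dict[str, Any]:
    """Return a copy of `metadata` with only the top-level keys listed in `allowed`."""
    return {key: value for key, value in metadata.items() if key in allowed}


# Hypothetical metadata for one chunk (field names are assumptions, not real output).
chunk_metadata = {
    "title": "handbook.pdf",
    "source": "blob",
    "blobType": "application/pdf",
    "pdf": {"totalPages": 12, "info": {"Producer": "example"}},
    "loc": {"pageNumber": 3},
}

# Keep only the fields I care about; everything else is dropped.
cleaned = keep_metadata(chunk_metadata, allowed={"title"})
print(cleaned)
```

In other words, I would like an allow-list of metadata fields applied before the chunks are written to Qdrant, rather than having every field and subfield stored.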

I would like to know which workflow is best in my case for ingesting and vectorizing my PDF documents. Intuitively I would have picked the first one, but I don’t want my metadata to be vectorized. What is the « Extract from File (From PDF) » node meant for, then?

Is the second workflow better? And how can I tidy up this metadata mess?

Thanks a lot in advance

Cheers

Antoine