Describe the problem/error/question

I am building a workflow to process uploaded meeting audio files:

- The user uploads an audio file via a Webhook node (the binary data is present and visible in the node’s output under the key data).
- In the next Code node, I rename the binary key from data to audio.
- However, in the following Code node, $binary.audio is undefined, and I get an error saying that no binary data was found.
- All Code nodes are set to “Run Once for Each Item” and return a single object (not an array).
- There are no IF, Merge, or Split nodes between the Webhook and the Code nodes.
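For reference, the rename step described above can be written as a pure function over an n8n-style item, i.e. an object with json and binary properties. This is a minimal sketch, not the workflow's actual code: renameBinaryKey is a hypothetical helper name, not an n8n API, and it assumes the binary entry can be moved to the new key unchanged. Moving the existing entry object as-is (rather than rebuilding it field by field) keeps whatever metadata n8n stored on it. In a Code node set to “Run Once for Each Item”, the current item is available as $input.item.

```javascript
// Hypothetical helper (not part of n8n): move one binary entry to a new key
// on an n8n-style item shaped like { json, binary }. The input is not mutated.
function renameBinaryKey(item, fromKey, toKey) {
  const binary = item.binary ?? {};
  if (!(fromKey in binary)) {
    const available = Object.keys(binary).join(', ') || '(none)';
    throw new Error(`No binary "${fromKey}" found. Available keys: ${available}`);
  }
  // Pull the source entry out and keep every other binary entry as-is.
  const { [fromKey]: entry, ...rest } = binary;
  return {
    json: { ...item.json },
    binary: { ...rest, [toKey]: entry },
  };
}

// In a Code node set to "Run Once for Each Item", the call would be:
//   return renameBinaryKey($input.item, 'data', 'audio');
const incoming = {
  json: { path: 'meeting/upload' },
  binary: { data: { fileName: 'meeting.webm', mimeType: 'audio/webm' } },
};
const renamed = renameBinaryKey(incoming, 'data', 'audio');
console.log(Object.keys(renamed.binary)); // [ 'audio' ]
```

Throwing with the list of available keys makes the failure say which keys did arrive, which is more useful than a generic "no binary data" message.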

What is the error message (if any)?

No binary data found in input. Check previous node output.

No binary "audio" found. Please check the previous node.

Please share your workflow


```json
{
  "nodes": [
    {
      "parameters": {
        "httpMethod": "POST",
        "path": "meeting/upload",
        "responseMode": "responseNode",
        "options": {
          "binaryData": true
        }
      },
      "id": "facab62c-c379-4577-b13e-bf82af3a1616",
      "name": "Webhook: Upload (POST /meeting/upload)",
      "type": "n8n-nodes-base.webhook",
      "typeVersion": 1,
      "position": [
        832,
        -1552
      ],
      "webhookId": "c4287f5b-fa93-4500-90b5-8713420b6af3"
    },
    {
      "parameters": {
        "conditions": {
          "options": {
            "caseSensitive": true,
            "leftValue": "",
            "typeValidation": "strict",
            "version": 2
          },
          "conditions": [
            {
              "id": "6d3b179a-2ff8-4b65-b550-b395784104c9",
              "leftValue": "={{ $json.error || '' }}",
              "rightValue": "",
              "operator": {
                "type": "string",
                "operation": "empty",
                "singleValue": true
              }
            }
          ],
          "combinator": "and"
        },
        "options": {}
      },
      "type": "n8n-nodes-base.if",
      "typeVersion": 2.2,
      "position": [
        1488,
        -1200
      ],
      "id": "acbbbdd3-469d-4c26-9763-95d2f77697f7",
      "name": "IF: has error?"
    },
    {
      "parameters": {
        "respondWith": "text",
        "responseBody": "={{$json.message || 'Bad Request'}}",
        "options": {
          "responseCode": 400,
          "responseHeaders": {
            "entries": [
              {
                "name": "Content-Type",
                "value": "text/plain; charset=utf-8"
              }
            ]
          }
        }
      },
      "type": "n8n-nodes-base.respondToWebhook",
      "typeVersion": 1.4,
      "position": [
        1744,
        -1008
      ],
      "id": "4673ea9d-c7f5-4ae1-9573-2a2a013b3d88",
      "name": "Respond: 400 Bad Request"
    },
    {
      "parameters": {
        "jsCode": "/**\n * Purpose\n * - Temporarily store transcript text per part in workflow static data\n * - Added word counting\n * - Added TTL: auto-delete after 1 day\n */\n\nfunction textFromItem(j) {\n if (Array.isArray(j.segments)) {\n return j.segments.map(s => (s.text || '').trim()).join(' ').trim();\n }\n return (j.text || j.fullText || '').toString();\n}\n\nconst j = $json;\nconst group_id = j.group_id;\nconst part_index = Number(j.part_index ?? 0);\nconst email = j.worker_email || j._email || '';\nconst partText = textFromItem(j);\n\nif (!group_id) return [{ json: { error: 'missing group_id' } }];\nif (!email) return [{ json: { error: 'missing email' } }];\nif (!partText) return [{ json: { error: 'empty part text' } }];\n\nconst wordCount = partText.split(/\\s+/).filter(word => word.length > 0).length; // count words by splitting on whitespace\n\nconst store = this.getWorkflowStaticData('global');\nif (!store.transcripts) store.transcripts = {};\nconst slot = store.transcripts[group_id] || { parts: {}, email, createdAt: Date.now(), totalWords: 0 };\nslot.email = slot.email || email;\nslot.parts[part_index] = partText;\nslot.totalWords += wordCount; // accumulate the per-part word count\n\nif (Date.now() - slot.createdAt > 86400000) {\n delete store.transcripts[group_id];\n return [{ json: { error: 'TTL expired for group_id: ' + group_id } }];\n}\n\nstore.transcripts[group_id] = slot;\n\nreturn [{\n json: {\n ...j,\n _stored: true,\n _debug: { keys: Object.keys(slot.parts).map(k=>Number(k)).sort((a,b)=>a-b), email: slot.email, wordCount },\n totalWords: slot.totalWords // total word count\n }\n}];"
      },
      "type": "n8n-nodes-base.code",
      "typeVersion": 2,
      "position": [
        2400,
        -1568
      ],
      "id": "f3e72549-61a9-49de-8ca8-9772db30984e",
      "name": "Code: Static Upsert Part"
    },
    {
      "parameters": {
        "mode": "runOnceForEachItem",
        "jsCode": "const binaryKeys = Object.keys($binary || {});\nif (binaryKeys.length === 0) {\n throw new Error('No binary data found in webhook payload. Check if the file was uploaded and the Webhook node has \"Binary Data\" enabled.');\n}\nconst firstBinaryKey = binaryKeys[0];\nconst firstBinaryData = $binary[firstBinaryKey];\nreturn {\n json: {\n fileInfo: firstBinaryData,\n log: binaryKeys,\n firstBinaryKey,\n firstBinaryData,\n ...$json\n },\n binary: {\n audio: firstBinaryData\n }\n};"
      },
      "type": "n8n-nodes-base.code",
      "typeVersion": 2,
      "position": [
        1056,
        -1552
      ],
      "id": "d73b27f2-d74d-49fb-9858-89bb11fd1a4c",
      "name": "Code: Find binary data"
    },
    {
      "parameters": {
        "mode": "runOnceForEachItem",
        "jsCode": "if (!$binary || !Object.keys($binary).length) {\n throw new Error('No binary data found in input. Check previous node output.');\n}\n\nconst bin = $binary || {};\nconst audio = bin.audio;\nif (!audio) {\n throw new Error('No binary \"audio\" found. Please check the previous node.');\n}\n\n// Extension/MIME type correction logic (example)\nconst OK_EXTS = ['flac','m4a','mp3','mp4','mpeg','mpga','oga','ogg','wav','webm'];\nfunction splitName(fname) {\n if (!fname) return { base: 'audio', ext: '' };\n const i = fname.lastIndexOf('.');\n if (i < 0) return { base: fname, ext: '' };\n return { base: fname.slice(0, i), ext: fname.slice(i + 1).toLowerCase() };\n}\nfunction extFromMime(m) {\n if (!m) return null;\n m = m.toLowerCase();\n if (m.includes('webm')) return 'webm';\n if (m.includes('mp4')) return 'mp4';\n if (m.includes('mpeg') || m.includes('mp3') || m.includes('mpga')) return 'mp3';\n if (m.includes('wav')) return 'wav';\n if (m.includes('ogg') || m.includes('oga')) return 'ogg';\n if (m.includes('flac')) return 'flac';\n if (m.includes('m4a')) return 'm4a';\n return null;\n}\nfunction canonicalMime(ext) {\n switch (ext) {\n case 'webm': return 'audio/webm';\n case 'mp4': return 'audio/mp4';\n case 'mp3':\n case 'mpeg':\n case 'mpga': return 'audio/mpeg';\n case 'wav': return 'audio/wav';\n case 'ogg':\n case 'oga': return 'audio/ogg';\n case 'flac': return 'audio/flac';\n case 'm4a': return 'audio/m4a';\n default: return audio.mimeType || 'application/octet-stream';\n }\n}\nconst { base, ext } = splitName(audio.fileName || '');\nlet finalExt = ext;\nif (!finalExt || !OK_EXTS.includes(finalExt)) {\n finalExt = extFromMime(audio.mimeType) || 'webm';\n}\naudio.fileName = `${base || 'audio'}.${finalExt}`;\naudio.mimeType = canonicalMime(finalExt);\n\nreturn [{ json: { _binKey: 'audio' }, binary: { audio } }];"
      },
      "type": "n8n-nodes-base.code",
      "typeVersion": 2,
      "position": [
        1264,
        -1392
      ],
      "id": "031b96cc-ed25-48f1-a47a-57bd2a99ebdd",
      "name": "Code: Validate & Pick Binary1"
    },
    {
      "parameters": {
        "method": "POST",
        "url": "https://api.openai.com/v1/audio/transcriptions",
        "authentication": "predefinedCredentialType",
        "nodeCredentialType": "httpBearerAuth",
        "sendBody": true,
        "contentType": "multipart-form-data",
        "bodyParameters": {
          "parameters": [
            {
              "parameterType": "formBinaryData",
              "name": "file",
              "inputDataFieldName": "audio"
            },
            {
              "name": "model",
              "value": "whisper-1"
            },
            {
              "name": "filename",
              "value": "={{ $binary.audio.fileName }}"
            }
          ]
        },
        "options": {}
      },
      "type": "n8n-nodes-base.httpRequest",
      "typeVersion": 4.2,
      "position": [
        2160,
        -1792
      ],
      "id": "2e4a7222-95c2-4506-b543-f20032673990",
      "name": "HTTP: Whisper : n8n",
      "alwaysOutputData": false,
      "executeOnce": false,
      "retryOnFail": false,
      "credentials": {
        "httpBearerAuth": {
          "id": "CzXJOc1jysXur2K6",
          "name": "Bearer Auth account"
        }
      }
    }
  ],
  "connections": {
    "Webhook: Upload (POST /meeting/upload)": {
      "main": [
        [
          {
            "node": "Code: Find binary data",
            "type": "main",
            "index": 0
          }
        ]
      ]
    },
    "IF: has error?": {
      "main": [
        [
          {
            "node": "HTTP: Whisper : n8n",
            "type": "main",
            "index": 0
          }
        ],
        [
          {
            "node": "Respond: 400 Bad Request",
            "type": "main",
            "index": 0
          }
        ]
      ]
    },
    "Code: Static Upsert Part": {
      "main": [
        []
      ]
    },
    "Code: Find binary data": {
      "main": [
        [
          {
            "node": "Code: Validate & Pick Binary1",
            "type": "main",
            "index": 0
          }
        ]
      ]
    },
    "Code: Validate & Pick Binary1": {
      "main": [
        [
          {
            "node": "IF: has error?",
            "type": "main",
            "index": 0
          }
        ]
      ]
    },
    "HTTP: Whisper : n8n": {
      "main": [
        [
          {
            "node": "Code: Static Upsert Part",
            "type": "main",
            "index": 0
          }
        ]
      ]
    }
  },
  "pinData": {},
  "meta": {
    "templateCredsSetupCompleted": true,
    "instanceId": "a254ea5216ea6cfbd8ee7fe4e961a7d4307ef34e8c99e3784178729684a073dc"
  }
}
```

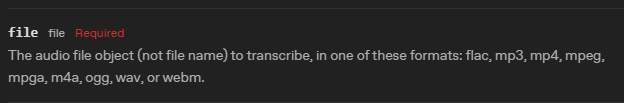

Share the output returned by the last node

The last node to run (Code: Validate & Pick Binary1) throws the errors shown above.

The previous node (Code: Find binary data) is supposed to output a single item with a binary key audio, but in the next node, $binary.audio is undefined.
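One way to narrow down where audio disappears is to inspect every item that reaches the next node, not just the first one. The helper below is a hypothetical debugging sketch (describeBinary is not an n8n API); it only assumes that each item exposes optional json and binary properties. $input.all() is the Code node's accessor for all incoming items in “Run Once for All Items” mode.

```javascript
// Hypothetical debugging helper: list the binary keys (and basic metadata)
// carried by each incoming item, to check whether "audio" actually arrived.
function describeBinary(items) {
  return items.map((item, index) => {
    const binary = item.binary ?? {};
    return {
      index,
      binaryKeys: Object.keys(binary),
      files: Object.entries(binary).map(([key, meta]) => ({
        key,
        fileName: meta?.fileName ?? null,
        mimeType: meta?.mimeType ?? null,
      })),
    };
  });
}

// In a temporary Code node set to "Run Once for All Items", placed right after
// "Code: Find binary data", the call would be:
//   return [{ json: { report: describeBinary($input.all()) } }];
const report = describeBinary([
  { json: {}, binary: { audio: { fileName: 'meeting.webm', mimeType: 'audio/webm' } } },
  { json: { error: 'missing file' } },
]);
console.log(JSON.stringify(report.map((r) => r.binaryKeys))); // [["audio"],[]]
```

Because the report is returned as plain JSON, it shows up in the node's output panel even when no binary data is attached.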

Information on your n8n setup

- n8n version: 1.109.1-exp.0

- Database (default: SQLite):

- n8n EXECUTIONS_PROCESS setting (default: own, main):

- Running n8n via (Docker, npm, n8n cloud, desktop app): n8n cloud

- Operating system: n8n cloud

Additional context

- Webhook node output (binary tab):
  - Key: data
  - File name, MIME type, and file size are all present and correct.
- No data transformation nodes (IF, Merge, Split) between the Webhook and Code nodes.
- All Code nodes use “Run Once for Each Item” and return a single object.

I record audio in the browser and upload it to the n8n server as multipart form data, and I built the workflow above to forward the file to the OpenAI Whisper API over HTTP. What I don't know is how to preprocess the uploaded form data into a request that Whisper accepts. I've been testing this part for three days without success, so any help would be appreciated.
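As a reference point for what the transcription endpoint expects, here is a minimal sketch that builds the multipart body in plain Node.js 18 or later (outside n8n), where FormData and Blob are globals. OpenAI's API reference lists file and model as the required fields for POST https://api.openai.com/v1/audio/transcriptions; to my knowledge there is no separate filename parameter, because the file name is carried by the file part itself. The assumption that the API uses that file name's extension to recognize the audio format is labeled as such in the code. buildWhisperForm and OPENAI_API_KEY are hypothetical names.

```javascript
// Sketch for Node.js 18+ (FormData and Blob are globals there): build the
// multipart/form-data body for POST https://api.openai.com/v1/audio/transcriptions.
// The endpoint requires a "file" part and a "model" field; the file name travels
// with the file part itself, so no separate "filename" field is added.
function buildWhisperForm(audioBytes, fileName, mimeType) {
  const form = new FormData();
  // The third argument sets the part's filename. Assumption: an extension such
  // as .webm is what lets the API recognize the audio format.
  form.append('file', new Blob([audioBytes], { type: mimeType }), fileName);
  form.append('model', 'whisper-1');
  return form;
}

// Hypothetical usage outside n8n (OPENAI_API_KEY is an assumed env variable).
// Do not set Content-Type yourself: fetch adds it, including the multipart boundary.
//   const res = await fetch('https://api.openai.com/v1/audio/transcriptions', {
//     method: 'POST',
//     headers: { Authorization: `Bearer ${process.env.OPENAI_API_KEY}` },
//     body: buildWhisperForm(bytes, 'meeting.webm', 'audio/webm'),
//   });
//   console.log((await res.json()).text);
const form = buildWhisperForm(new Uint8Array([1, 2, 3]), 'meeting.webm', 'audio/webm');
console.log(form.get('model')); // whisper-1
```

If a request built this way succeeds, the same two fields (a binary file part with a sensible file name, plus model) are what the n8n HTTP Request node needs to reproduce.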