Hey n8n Community,

Over the past few months I’ve been building and publishing finance-automation workflows on n8n – receipt trackers, invoice pipelines, approval flows, a stress test, error handling, a smart mailroom. A pattern kept showing up that I didn’t expect going in, so I wanted to write it down.

Classification is almost never the hard part. And you almost never need a dedicated step for it.

Here’s what I mean.

Lesson 1: Classification is just a field

When I started, I thought “classify the document” was its own problem – separate node, separate prompt, separate step. It isn’t. If you’re already running a document through the easybits Extractor, adding a document_class field to your pipeline is basically free. Same pass, same latency, same cost. The model is already reading the whole thing – you just tell it what else to return.

Once I internalized that, half the workflows I had planned collapsed into simpler ones.

Lesson 2: Your categories should match your routing, not your taxonomy

The first classifier I built had 11 document types. It was “accurate” in some abstract sense and useless in practice, because downstream I only had 3 folders to route to. Now I define categories by what happens next. If invoices and receipts go to the same place and get the same treatment, they’re the same category. Classification exists to serve routing – not the other way around.
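To make the idea concrete, here is a minimal Python sketch of deriving categories from routes. The document types and route names are hypothetical placeholders, not the ones from the original classifier; the point is only that the classifier needs as many labels as there are distinct destinations.

```python
# Hypothetical fine-grained document types mapped to the routes that exist downstream.
# All names here are illustrative assumptions.
ROUTE_FOR_TYPE = {
    "invoice": "payables",
    "receipt": "payables",      # same destination and treatment as invoices
    "credit_note": "payables",
    "contract": "legal",
    "nda": "legal",
    "bank_statement": "banking",
}


def routing_categories(route_for_type):
    """Return the distinct routes; these are the labels the classifier should use."""
    return sorted(set(route_for_type.values()))


print(routing_categories(ROUTE_FOR_TYPE))
```

Six document types collapse into three categories here, because only three things can happen next.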

Lesson 3: Treat “unknown” as a real category

The first thing you need to get right, before any prompt tuning can do its job, is giving the model an explicit escape hatch. I tell it to return null when a document doesn’t cleanly fit any category, and I check for that right after classification. An IF node with the “is empty” operator catches real nulls, undefined values, and empty strings in one condition.
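The IF node handles this check natively, but the logic is easy to spell out in plain Python if you want to reason about it or reuse it elsewhere. This is a sketch under assumptions: the item is a plain dict, and treating whitespace-only strings as empty is an extra choice made here, not something the IF node is claimed to do.

```python
def is_empty(value):
    """Return True for None, or for a string that is empty or only whitespace."""
    if value is None:
        return True
    if isinstance(value, str) and value.strip() == "":
        return True
    return False


def classification_missing(item):
    """Check whether an extraction result lacks a usable document_class."""
    # dict.get returns None when the key is absent, which covers the "undefined" case.
    return is_empty(item.get("document_class"))


print(classification_missing({"document_class": None}))
print(classification_missing({"document_class": "hotel_invoice"}))
```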

This one change – giving the model permission to say “I don’t know” – is what makes everything else work. Without it, better prompts just produce more confident wrong answers. With it, the prompt work from Lesson 5 actually compounds.

Lesson 4: Ask for a confidence score alongside the classification

This is the one I’d have skipped if I had been building this a year ago, and it’s the one I’d put first if I were starting over.

Alongside document_class, I define a second field called confidence_score on the same easybits pipeline that returns a decimal between 0.0 and 1.0. The extractor returns both in a single call. Then the validation branch checks for two things: is the class empty, or is the confidence below a threshold (I use 0.5). Either one routes to Slack for manual review. Everything else continues through the pipeline.
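The two-condition check can be sketched in a few lines of Python. The field names and the 0.5 threshold come from the description above; the function name, the dict shape, and the decision to send a missing or non-numeric score to review are assumptions made for this illustration.

```python
CONFIDENCE_THRESHOLD = 0.5  # threshold from the text; tune for your own documents


def needs_manual_review(result, threshold=CONFIDENCE_THRESHOLD):
    """Return True if the result should go to manual review instead of continuing."""
    doc_class = result.get("document_class")
    confidence = result.get("confidence_score")

    class_empty = doc_class is None or doc_class == ""

    # Assumption: a missing or non-numeric score is treated as untrustworthy.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return True

    # Either condition alone is enough to route to review.
    return class_empty or confidence < threshold


print(needs_manual_review({"document_class": "hotel_invoice", "confidence_score": 0.92}))
print(needs_manual_review({"document_class": None, "confidence_score": 0.95}))
```

Note that a null class goes to review even when the score is high: the score says how sure the model is, while the empty class says there is nowhere to route the document automatically.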

The counterintuitive part: a confident null should score high, not low. If the model is certain the document doesn’t fit any category, that’s a confident decision – not an uncertain one. My confidence prompt is explicit about this, and it matters. Otherwise you end up penalising the cases where the model is actually doing the right thing.

Two fields. One extractor call. You get classification and self-reported uncertainty in a single pass, and your error handling becomes trivial.

Lesson 5: Classification prompts should describe evidence, not just label names

My first classification prompts looked like a list of category names with one-line descriptions. They were fine until they weren’t – the model would latch onto a single keyword and misclassify anything that contained it.

The prompts that actually work describe what evidence looks like for each category. For each class, I list the kinds of issuers, the terminology you’d expect in the line items, the tax structures, the identifiers that show up. A hotel invoice has check-in/check-out dates, room numbers, lodging tax, folio references. A telecom invoice has a billing period, data usage summaries, SIM or account numbers. Multiple weak signals beat one strong keyword every time.

Three rules I put in every classification prompt now:

- Return only the label, nothing else. No explanations, no punctuation.

- Require multiple corroborating signals before assigning a category. A single keyword match is not enough.

- Return null if uncertain. Do not guess. Do not pick the closest match.

That last one is the whole game. Models will happily pick a wrong answer if you don’t explicitly tell them it’s okay not to.
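One way to keep evidence descriptions and rules consistent across categories is to assemble the prompt from data. The sketch below is illustrative only: the label names, the prompt wording, and the helper function are assumptions, and this is not the actual prompt used in the workflow. The evidence lists and the three rules are taken from the text above.

```python
# Evidence per category, using the hotel and telecom examples from the text.
# The label names themselves are hypothetical.
EVIDENCE = {
    "hotel_invoice": [
        "check-in/check-out dates", "room numbers", "lodging tax", "folio references",
    ],
    "telecom_invoice": [
        "a billing period", "data usage summaries", "SIM or account numbers",
    ],
}

RULES = [
    "Return only the label, nothing else. No explanations, no punctuation.",
    "Require multiple corroborating signals before assigning a category. "
    "A single keyword match is not enough.",
    "Return null if uncertain. Do not guess. Do not pick the closest match.",
]


def build_classification_prompt(evidence, rules):
    """Assemble a prompt that lists expected evidence per label, then the rules."""
    lines = ["Classify the document into exactly one of these categories:", ""]
    for label, signals in evidence.items():
        lines.append(f"{label}: typically shows " + ", ".join(signals) + ".")
    lines.append("")
    lines.append("Rules:")
    for rule in rules:
        lines.append(f"- {rule}")
    return "\n".join(lines)


prompt = build_classification_prompt(EVIDENCE, RULES)
print(prompt)
```

Adding a category then means adding one entry to the evidence dict, and the escape-hatch rule cannot be dropped by accident.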

The workflow

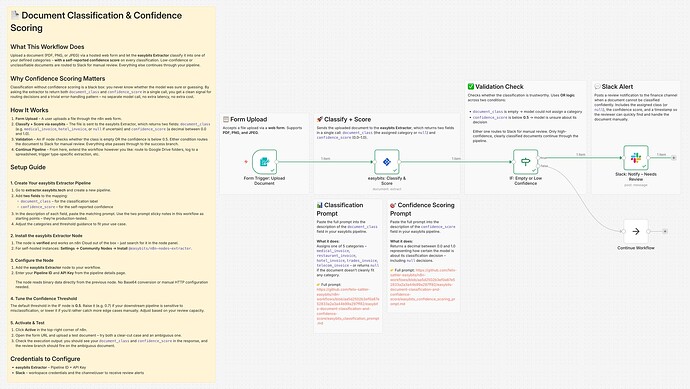

I put together a minimal version that shows the pattern end-to-end: form upload → easybits Extractor returning document_class + confidence_score → IF node that routes empty or low-confidence results to Slack, everything else continues.

It’s meant to be the skeleton you drop your own categories and routes into.

Prefer to grab it from GitHub? The sanitized workflow JSON, the classification prompt, and the confidence scoring prompt all sit in one folder:

Setup

- Cloud users: easybits Extractor is available out of the box, just search for it in the node panel

- Self-hosted: Settings → Community Nodes → install @easybits/n8n-nodes-extractor

Free tier is 50 requests/month, enough to test this end-to-end.

For those of you doing classification in n8n – are you running it as a separate step, or folding it into extraction? And if you’re using confidence scoring, where are you setting your threshold? Curious to hear what’s working.

Best,

Felix