I’m using n8n to transfer a range of values from one spreadsheet to another. When I run the read-sheet operation on a sheet with many rows (over 250,000 in my case), I get the error below.

Stack

RangeError: Maximum call stack size exceeded

at Object.router (/usr/local/lib/node_modules/n8n/node_modules/n8n-nodes-base/dist/nodes/Google/Sheet/v2/actions/router.js:67:29)

at runMicrotasks (<anonymous>)

at processTicksAndRejections (node:internal/process/task_queues:96:5)

at async Object.execute (/usr/local/lib/node_modules/n8n/node_modules/n8n-nodes-base/dist/nodes/Google/Sheet/v2/GoogleSheetsV2.node.js:20:16)

at async Workflow.runNode (/usr/local/lib/node_modules/n8n/node_modules/n8n-workflow/dist/Workflow.js:658:28)

at async /usr/local/lib/node_modules/n8n/node_modules/n8n-core/dist/WorkflowExecute.js:585:53

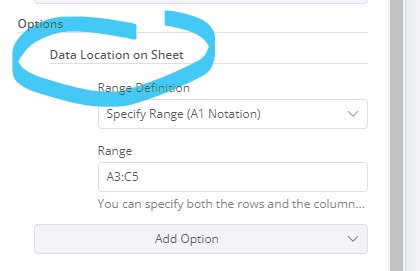

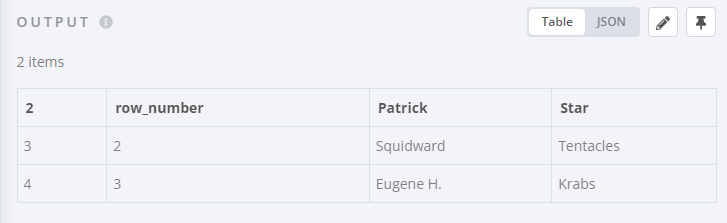

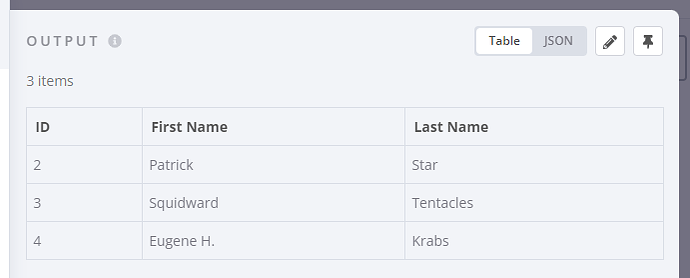

My workflow

Information on my n8n setup

- n8n version: 0.204.0

- Database you’re using (default: SQLite):

- Running n8n with the execution process [own(default), main]:

- Running n8n via [Docker, npm, n8n.cloud, desktop app]:

- Server: Kamatera, CPU: 1A, RAM: 2 GB, SSD: 20 GB
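
A possible workaround, not confirmed to resolve this error, would be to read the sheet in smaller row ranges instead of all 250,000+ rows in one call. Below is a minimal sketch of how the rows could be split into A1-notation ranges. The helper name `chunkRanges`, the sheet name `Sheet1`, the column bounds `A` to `Z`, and the chunk size of 50,000 are all assumptions for illustration and are not part of n8n.

```javascript
// Hypothetical helper (not an n8n API): split a sheet with `totalRows` rows
// into A1-notation ranges of at most `chunkSize` rows each.
// Assumes a sheet name without spaces or special characters; such names
// would need to be wrapped in single quotes in A1 notation.
function chunkRanges(sheetName, totalRows, chunkSize, firstCol = 'A', lastCol = 'Z') {
  if (!Number.isInteger(totalRows) || totalRows < 0) {
    throw new RangeError('totalRows must be a non-negative integer');
  }
  if (!Number.isInteger(chunkSize) || chunkSize < 1) {
    throw new RangeError('chunkSize must be a positive integer');
  }
  const ranges = [];
  // Rows are 1-indexed in A1 notation.
  for (let start = 1; start <= totalRows; start += chunkSize) {
    const end = Math.min(start + chunkSize - 1, totalRows);
    ranges.push(`${sheetName}!${firstCol}${start}:${lastCol}${end}`);
  }
  return ranges;
}

// Example: 250,000 rows split into chunks of 50,000 rows.
const ranges = chunkRanges('Sheet1', 250000, 50000);
console.log(ranges);
```

Each resulting range could then be read and written in its own iteration, so that no single step has to hold every row at once. Whether this avoids the stack overflow depends on where the node actually fails.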