This is strange. Here is the debug output when executing a workflow manually:

Jul 28 23:41:31 172.18.0.1 - - [28/Jul/2021:21:41:31 +0000] "POST /rest/workflows/run HTTP/1.1" 200 28 "https://test.cloudron.dev/workflow/1" "Mozilla/5.0 (X11; Linux x86_64; rv:91.0) Gecko/20100101 Firefox/91.0"

Jul 28 23:41:31

Jul 28 23:41:31 Loading configuration overwrites from:

Jul 28 23:41:31 - /app/data/.n8n/app-config.json

Jul 28 23:41:31

Jul 28 23:41:31 2021-07-28T21:41:31.691Z | debug | Received child process message of type start for execution ID 3. {"executionId":"3","file":"WorkflowRunner.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.696Z | verbose | Initializing n8n sub-process {"pid":62,"workflowId":"1","file":"WorkflowRunnerProcess.js","function":"runWorkflow"}

Jul 28 23:41:31 2021-07-28T21:41:31.703Z | verbose | Workflow execution started {"workflowId":"1","file":"WorkflowExecute.js","function":"processRunExecutionData"}

Jul 28 23:41:31 2021-07-28T21:41:31.704Z | debug | Received child process message of type processHook for execution ID 3. {"executionId":"3","file":"WorkflowRunner.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.704Z | debug | Start processing node "Cron" {"node":"Cron","workflowId":"1","file":"WorkflowExecute.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.704Z | debug | Executing hook (hookFunctionsPush) {"executionId":"3","sessionId":"woupbridiqe","workflowId":"1","file":"WorkflowExecuteAdditionalData.js","function":"workflowExecuteBefore"}

Jul 28 23:41:31 2021-07-28T21:41:31.705Z | debug | Send data of type "executionStarted" to editor-UI {"dataType":"executionStarted","sessionId":"woupbridiqe","file":"Push.js","function":"send"}

Jul 28 23:41:31 2021-07-28T21:41:31.705Z | debug | Running node "Cron" started {"node":"Cron","workflowId":"1","file":"WorkflowExecute.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.705Z | debug | Received child process message of type processHook for execution ID 3. {"executionId":"3","file":"WorkflowRunner.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.705Z | debug | Executing hook on node "Cron" (hookFunctionsPush) {"executionId":"3","sessionId":"woupbridiqe","workflowId":"1","file":"WorkflowExecuteAdditionalData.js","function":"nodeExecuteBefore"}

Jul 28 23:41:31 2021-07-28T21:41:31.705Z | debug | Send data of type "nodeExecuteBefore" to editor-UI {"dataType":"nodeExecuteBefore","sessionId":"woupbridiqe","file":"Push.js","function":"send"}

Jul 28 23:41:31 2021-07-28T21:41:31.711Z | debug | Running node "Cron" finished successfully {"node":"Cron","workflowId":"1","file":"WorkflowExecute.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.711Z | debug | Received child process message of type processHook for execution ID 3. {"executionId":"3","file":"WorkflowRunner.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.711Z | debug | Executing hook on node "Cron" (hookFunctionsPush) {"executionId":"3","sessionId":"woupbridiqe","workflowId":"1","file":"WorkflowExecuteAdditionalData.js","function":"nodeExecuteAfter"}

Jul 28 23:41:31 2021-07-28T21:41:31.711Z | debug | Start processing node "CoinGecko" {"node":"CoinGecko","workflowId":"1","file":"WorkflowExecute.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.711Z | debug | Send data of type "nodeExecuteAfter" to editor-UI {"dataType":"nodeExecuteAfter","sessionId":"woupbridiqe","file":"Push.js","function":"send"}

Jul 28 23:41:31 2021-07-28T21:41:31.712Z | debug | Received child process message of type processHook for execution ID 3. {"executionId":"3","file":"WorkflowRunner.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.712Z | debug | Running node "CoinGecko" started {"node":"CoinGecko","workflowId":"1","file":"WorkflowExecute.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.712Z | debug | Executing hook on node "CoinGecko" (hookFunctionsPush) {"executionId":"3","sessionId":"woupbridiqe","workflowId":"1","file":"WorkflowExecuteAdditionalData.js","function":"nodeExecuteBefore"}

Jul 28 23:41:31 2021-07-28T21:41:31.712Z | debug | Send data of type "nodeExecuteBefore" to editor-UI {"dataType":"nodeExecuteBefore","sessionId":"woupbridiqe","file":"Push.js","function":"send"}

Jul 28 23:41:31 2021-07-28T21:41:31.802Z | debug | Running node "CoinGecko" finished successfully {"node":"CoinGecko","workflowId":"1","file":"WorkflowExecute.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.803Z | verbose | Workflow execution finished successfully {"workflowId":"1","file":"WorkflowExecute.js","function":"processSuccessExecution"}

Jul 28 23:41:31 2021-07-28T21:41:31.803Z | debug | Received child process message of type processHook for execution ID 3. {"executionId":"3","file":"WorkflowRunner.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.803Z | debug | Executing hook on node "CoinGecko" (hookFunctionsPush) {"executionId":"3","sessionId":"woupbridiqe","workflowId":"1","file":"WorkflowExecuteAdditionalData.js","function":"nodeExecuteAfter"}

Jul 28 23:41:31 2021-07-28T21:41:31.803Z | debug | Send data of type "nodeExecuteAfter" to editor-UI {"dataType":"nodeExecuteAfter","sessionId":"woupbridiqe","file":"Push.js","function":"send"}

Jul 28 23:41:31 2021-07-28T21:41:31.806Z | debug | Received child process message of type processHook for execution ID 3. {"executionId":"3","file":"WorkflowRunner.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.806Z | debug | Executing hook (hookFunctionsSave) {"executionId":"3","workflowId":"1","file":"WorkflowExecuteAdditionalData.js","function":"workflowExecuteAfter"}

Jul 28 23:41:31 2021-07-28T21:41:31.806Z | debug | Save execution data to database for execution ID 3 {"executionId":"3","workflowId":"1","finished":true,"stoppedAt":"2021-07-28T21:41:31.803Z","file":"WorkflowExecuteAdditionalData.js","function":"workflowExecuteAfter"}

Jul 28 23:41:31 2021-07-28T21:41:31.809Z | debug | Received child process message of type end for execution ID 3. {"executionId":"3","file":"WorkflowRunner.js"}

Jul 28 23:41:31 2021-07-28T21:41:31.815Z | debug | Executing hook (hookFunctionsPush) {"executionId":"3","sessionId":"woupbridiqe","workflowId":"1","file":"WorkflowExecuteAdditionalData.js","function":"workflowExecuteAfter"}

Jul 28 23:41:31 2021-07-28T21:41:31.815Z | debug | Save execution progress to database for execution ID 3 {"executionId":"3","workflowId":"1","file":"WorkflowExecuteAdditionalData.js","function":"workflowExecuteAfter"}

Jul 28 23:41:31 2021-07-28T21:41:31.815Z | debug | Send data of type "executionFinished" to editor-UI {"dataType":"executionFinished","sessionId":"woupbridiqe","file":"Push.js","function":"send"}

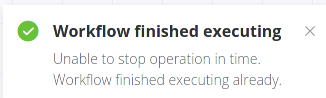

and when you press the Stop button:

With this debug log:

Jul 28 23:42:09 ERROR RESPONSE

Jul 28 23:42:09 Error: The execution id "3" could not be found.

Jul 28 23:42:09 at /usr/local/node-14.17.0/lib/node_modules/n8n/dist/src/Server.js:1270:27

Jul 28 23:42:09 at processTicksAndRejections (internal/process/task_queues.js:95:5)

Jul 28 23:42:09 at async /usr/local/node-14.17.0/lib/node_modules/n8n/dist/src/ResponseHelper.js:86:26

Jul 28 23:42:09 172.18.0.1 - - [28/Jul/2021:21:42:09 +0000] "POST /rest/executions-current/3/stop HTTP/1.1" 500 380 "https://test.cloudron.dev/workflow/1" "Mozilla/5.0 (X11; Linux x86_64; rv:91.0) Gecko/20100101 Firefox/91.0"

Jul 28 23:42:09 172.18.0.1 - - [28/Jul/2021:21:42:09 +0000] "GET /rest/executions/3 HTTP/1.1" 200 4027 "https://test.cloudron.dev/workflow/1" "Mozilla/5.0 (X11; Linux x86_64; rv:91.0) Gecko/20100101 Firefox/91.0"