Hi everyone!

I’m building a chatbot that uses n8n’s native MCP to expose workflows as tools. I’ve run into two challenges and would love to hear how (or if) others have tackled them.

Setup

- n8n with native MCP Server Trigger
- Workflows exposed as MCP tools (e.g., “Generate LinkedIn Post Ideas”)
- Client: ChatGPT (via MCP)

Challenge 1: Long-Running Workflows (60+ seconds)

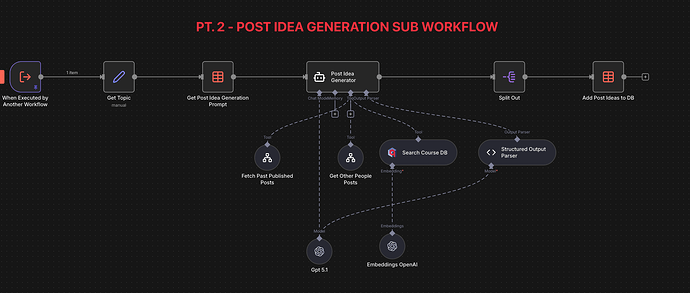

Part 2 of my workflow can take 2-3 minutes to complete (it involves multiple API calls, AI processing, etc.), so I’m hitting the 60-second MCP timeout limit.

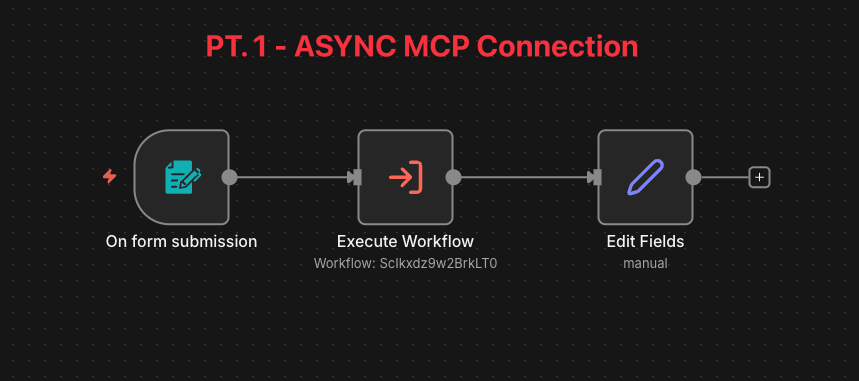

My current workaround: I’m using an async pattern where:

- The workflow immediately returns a “processing started” message
- Results are delivered via polling (a second MCP tool retrieves the finished post from the DB)
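To make the workaround above concrete, here is a minimal sketch of the start/poll pattern in plain Python. It is not my actual n8n workflow: the in-memory `JOBS` dict stands in for the DB, and `start_job`, `get_job_result`, and `slow_generate` are hypothetical names I made up for illustration.

```python
import threading
import time
import uuid

# In-memory stand-in for the DB table that stores job state (hypothetical).
JOBS: dict[str, dict] = {}
JOBS_LOCK = threading.Lock()


def slow_generate(topic: str) -> list[str]:
    """Placeholder for the long-running part of the workflow."""
    time.sleep(0.1)  # shortened so the sketch runs quickly
    return [f"Idea {i} about {topic}" for i in range(1, 4)]


def _run_job(job_id: str, topic: str) -> None:
    """Run the slow work in the background and record the outcome."""
    try:
        update = {"status": "done", "result": slow_generate(topic)}
    except Exception as exc:
        update = {"status": "failed", "error": str(exc)}
    with JOBS_LOCK:
        JOBS[job_id].update(update)


def start_job(topic: str) -> dict:
    """Tool 1: register the job and return right away with its id."""
    job_id = str(uuid.uuid4())
    with JOBS_LOCK:
        JOBS[job_id] = {"status": "processing"}
    threading.Thread(target=_run_job, args=(job_id, topic), daemon=True).start()
    return {"job_id": job_id, "status": "processing"}


def get_job_result(job_id: str) -> dict:
    """Tool 2: polled by the client until status is no longer 'processing'."""
    with JOBS_LOCK:
        job = JOBS.get(job_id)
        if job is None:
            return {"job_id": job_id, "status": "unknown"}
        return {"job_id": job_id, **job}
```

The first tool only has to write a row and hand back the id, so it stays far below the timeout; the second tool is a cheap lookup that can be called as often as needed.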

Question: Is there a cleaner built-in way to handle this?

Challenge 2: AI Client Adds Its Own Content to Tool Results

This is the trickier one. When I ask ChatGPT to “Generate LinkedIn post ideas” (which triggers my n8n workflow), I get back:

- The actual workflow output (my generated ideas)
- PLUS ChatGPT’s own generated ideas on top of it

The AI seems to interpret the tool call as “user wants LinkedIn ideas” and tries to help by generating more, rather than just relaying what the tool returned.

What I want: The AI should only return the workflow output, not add its own content.

What I’ve tried:

- Adding an edit node at the end mentioning: do not return anything
- Adding system prompts like “Only relay tool outputs, do not generate additional content”

Neither fully solved it.

Question: Has anyone found a reliable way to make the AI client (ChatGPT, Claude, etc.) just return the MCP tool output without adding its own interpretation/content?

Is this something that needs to be handled on the n8n side (in how the response is formatted), or is it purely a client-side prompt engineering challenge?
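For reference, this is roughly what I mean by handling it on the n8n side: wrapping the workflow output in an envelope that marks it as final before it goes back to the client. It is only a sketch of the idea, not a confirmed fix. The function name `wrap_tool_output` and the `status`, `instructions`, and `ideas` fields are my own invention, not part of MCP or n8n, and the client may still ignore the instruction.

```python
import json


def wrap_tool_output(ideas: list[str]) -> str:
    """Build the text payload the tool would return to the client."""
    envelope = {
        # Hypothetical fields: nothing here is defined by MCP or n8n.
        "status": "complete",
        "instructions": (
            "This result is final. Show the items in 'ideas' to the user "
            "verbatim and do not generate additional ideas."
        ),
        "ideas": ideas,
    }
    return json.dumps(envelope, ensure_ascii=False, indent=2)


if __name__ == "__main__":
    print(wrap_tool_output(["Post idea A", "Post idea B"]))
```

The thinking is that an explicit "this is final" marker inside the tool result might carry more weight than a system prompt alone, but I have not verified that it changes the client’s behavior.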