I’ve been waiting for over 8 weeks hoping this issue would be addressed (or at least partially implemented), but so far no fix or update has been made, perhaps because this limitation hasn’t yet been mapped internally.

When using AI Agents with newer models that support native reasoning, the n8n AI Agent node becomes extremely frustrating to work with. For the vast majority of compatible models, the reasoning functionality is not exposed through the interface, which makes it impossible to:

• Enable the model’s reasoning mode,

• Select the reasoning effort level (reasoning_effort = low / medium / high, when available),

• Or define how many tokens the model is allowed to use specifically for reasoning.

This is really limiting. In order to leverage these reasoning features, we are forced to use custom HTTP requests to the provider or to OpenRouter, which means we lose access to all the additional features and convenience provided by the AI Agent node.
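
To illustrate the workaround, here is a minimal sketch of the request body I end up building by hand for an HTTP Request node. It assumes OpenRouter's chat completions endpoint accepts a `reasoning` object with `effort` and `max_tokens` fields. The helper name `build_openrouter_payload` and the model slug are only illustrative, so please check the provider documentation before relying on it.

```python
import json
from typing import Optional

# Effort levels mentioned above; whether a given model honors them is provider-specific.
ALLOWED_EFFORTS = ("low", "medium", "high")


def build_openrouter_payload(
    model: str,
    prompt: str,
    effort: Optional[str] = None,
    max_reasoning_tokens: Optional[int] = None,
) -> dict:
    """Build a chat-completions request body with optional reasoning settings.

    Hypothetical helper: the "reasoning" field names are an assumption based on
    OpenRouter's unified reasoning parameter, not something n8n provides.
    """
    if effort is not None and effort not in ALLOWED_EFFORTS:
        raise ValueError(f"unsupported effort level: {effort!r}")

    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }

    # Only attach the reasoning object when at least one setting was requested.
    reasoning = {}
    if effort is not None:
        reasoning["effort"] = effort
    if max_reasoning_tokens is not None:
        reasoning["max_tokens"] = max_reasoning_tokens
    if reasoning:
        payload["reasoning"] = reasoning
    return payload


if __name__ == "__main__":
    # Illustrative model slug; paste the printed JSON into the HTTP Request node body.
    body = build_openrouter_payload(
        "deepseek/deepseek-r1-0528", "Summarize this ticket.", effort="high"
    )
    print(json.dumps(body, indent=2))
```

As far as I understand, a provider will usually honor either the effort level or the token budget, not both, so in practice I set only one of them. The point is that all of this has to live outside the AI Agent node today.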

It’s important to highlight that some models already partially support this in the n8n node:

• Anthropic Claude 4 Sonnet → Enable thinking > Thinking Budget (tokens)

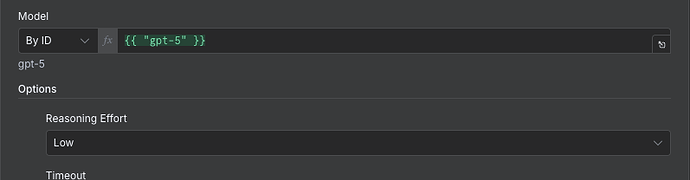

• OpenAI o3 → Reasoning Effort: low / medium / high

• OpenAI o4-mini → Reasoning Effort: low / medium / high
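
For context, the two options above appear to correspond to different request fields on the provider side. The sketch below shows how I understand the mapping, assuming Anthropic's Messages API takes a `thinking` object with `budget_tokens` and OpenAI's Chat Completions API takes a top-level `reasoning_effort` string. The function `reasoning_params` is hypothetical and is not how the n8n node is actually implemented.

```python
def reasoning_params(provider: str, effort: str = "medium", budget_tokens: int = 2048) -> dict:
    """Return the provider-specific request fields for native reasoning.

    Hypothetical helper for illustration; field names are assumptions taken
    from the public provider APIs, not from n8n's source code.
    """
    if provider == "anthropic":
        # Corresponds to "Enable thinking > Thinking Budget (tokens)" in the node.
        return {"thinking": {"type": "enabled", "budget_tokens": budget_tokens}}
    if provider == "openai":
        # Corresponds to "Reasoning Effort: low / medium / high" in the node.
        if effort not in ("low", "medium", "high"):
            raise ValueError(f"unsupported effort level: {effort!r}")
        return {"reasoning_effort": effort}
    raise ValueError(f"no reasoning mapping known for provider: {provider!r}")


if __name__ == "__main__":
    print(reasoning_params("anthropic", budget_tokens=4096))
    print(reasoning_params("openai", effort="high"))
```

Every provider names these fields differently, which is why a single reasoning option in the node would need a small mapping layer like this one.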

However, many other models support reasoning but currently have no option in the AI Agent node to enable it or configure its parameters. Examples include:

• Gemini 2.5 Flash

• Gemini 2.5 Flash Lite

• Gemini 2.5 Pro

• Grok 3 Mini

• Grok 4

• OpenAI GPT-OSS-120B

• GPT-5

• GPT-5 Mini

• GLM 4.5 (via OpenRouter)

• Qwen3 235B A22B Thinking 2507 (via OpenRouter)

• Deepseek R1 0528 (via OpenRouter)

• Perplexity: Sonar Reasoning Pro

…and many others.

Please consider urgently expanding the reasoning configuration options to all compatible models.

Important: This request is specifically about the native reasoning functionality of the models themselves, which is entirely different from the separate “Think Tool” node in n8n.