GitHub: SolomonChrist/n8nAICostEstimator. This workflow is designed to be run from within n8n and accessed either by a human in a web browser or by an AI via a webhook call. It returns the estimated cost of your prompt (including files, e.g. PDFs) so you know how many tokens it uses or how much it costs BEFORE sending it along to the AI you want. Covers many major AI platforms.

So basically one of my clients was asking if we could understand tokens BEFORE sending data to the AI model. I looked everywhere and it seemed there are open source alternatives, and also it's all API, it's not just built into Claude (or at least I couldn't find it), so then I said, WHY DON'T I JUST BUILD ONE?

Using Claude Code I built out a full n8n workflow that works like this:

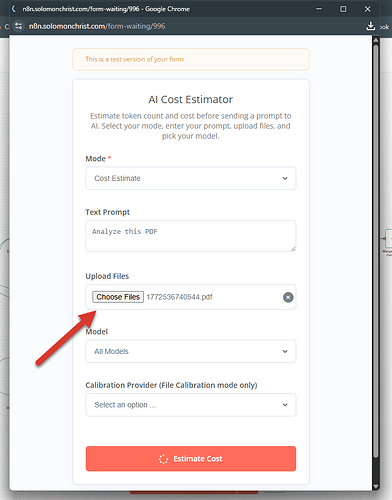

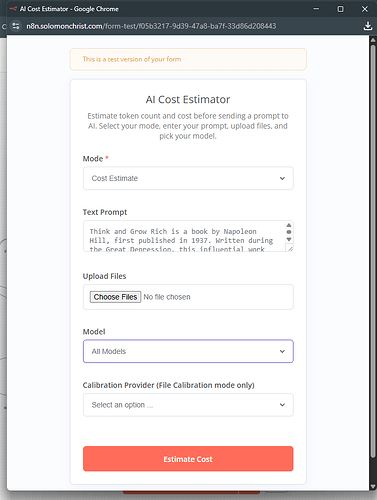

- Shows a form to input your PROMPT and any FILES (PDFs, etc.)

- Choose the models you want to test

- HIT SUBMIT

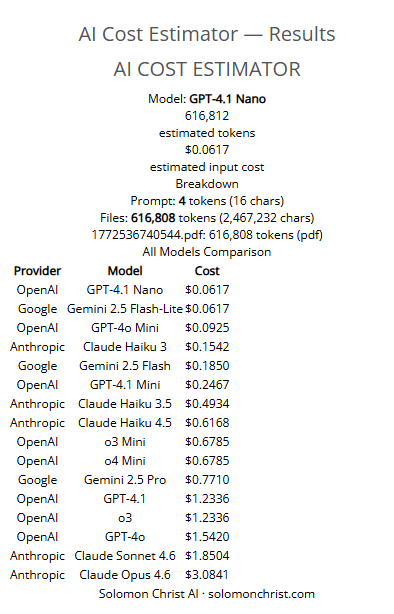

It then gets you a full REPORT estimating costs for various models, AND all the data is within a Google Sheet, so you can calibrate it by grabbing live data OR the APIs. Other than that it's self-contained, so you don't actually use AI credits to figure it out every time (that was the API thing, and that can suck tokens). I also made a webhook so you can access it from the Claude CLI as well: just send a webhook call and it will return JSON with the token estimate. Great to put in hooks in Claude Code BEFORE sending anything, so you get back a token estimate first, thus SAVING YOU MONEY!
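For anyone wanting to script the webhook route, here is a minimal Python sketch of what a call could look like. The URL and the JSON field names (`prompt`, `models`) are assumptions for illustration only; check the workflow in the repo for the real path and payload shape.

```python
import json
import urllib.request

# Hypothetical webhook URL: replace with the one your own n8n instance shows.
WEBHOOK_URL = "https://n8n.example.com/webhook/cost-estimate"


def build_request(prompt: str, models: list[str]) -> urllib.request.Request:
    """Build a JSON POST request; the field names here are assumptions."""
    body = json.dumps({"prompt": prompt, "models": models}).encode("utf-8")
    return urllib.request.Request(
        WEBHOOK_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def fetch_estimate(prompt: str, models: list[str], timeout: float = 30.0) -> dict:
    """Send the prompt to the webhook and return the decoded JSON reply."""
    request = build_request(prompt, models)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `fetch_estimate("Summarize this report", ["some-model"])` from a pre-send script would give you the estimate as a dict before anything goes to the model.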

OUTPUT:

Go ahead and get your copy now on GitHub!

Cheers!!

Solomon Christ

AI + Automation Professional

Side Note: Yes, I can throw this up into the n8n Workflows ecosystem, but to be honest I just don't have the time at the moment. I tried going through the whole signing-up-for-an-account process, etc. etc. So if someone from within the n8n team or another community user wants to, feel free to throw it up there.

If someone can throw it up there too, that would be awesome; otherwise just grab it from GitHub.

Solomon Christ

Really cool idea — pre-flight token estimation before hitting the API is something I’ve been wanting to set up for a while.

Quick question: what tokenizer are you using under the hood? Claude uses its own tokenizer (not tiktoken), and the token count can differ meaningfully from GPT-4 for the same prompt, especially with code or structured JSON. Are you pulling from Anthropic's token counting endpoint (/v1/messages/count_tokens), or approximating based on character/word count?

Also curious how you handle file inputs in the estimate. PDFs and images get converted before they reach the model, so the actual token count depends on how the extraction/chunking is done upstream. Do you estimate based on raw file size, or are you extracting text first and then counting tokens from that?

The Claude Code webhook hook integration is a nice touch by the way — that’s a practical way to keep costs predictable in automated pipelines.

Hi @Benjamin_Behrens

Yeah, so for now I just kept it really simple: the pricing is literally pre-pulled data from the providers' websites, based on what they list as the prices. So it's super fast and sits in spreadsheets ready to go.

It's been set up with options to calibrate it in the future by making direct calls to the providers' token-counting endpoints; however, I kept clear of that, as the goal was not to hit those endpoints unless needed. That's why I left it open for the community to take it over and start adding ideas, like calling the endpoints for more accurate estimates and even scraping the web for even more.

For files, it ultimately breaks things down into text, so even with PDFs it gives that estimate.
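To make the "text in, ballpark cost out" idea concrete, here is a rough Python sketch. It is not the workflow's actual logic: the 4-characters-per-token ratio is just a common rule of thumb for English text, and the model names and prices are placeholders standing in for whatever the Google Sheet holds.

```python
import math

# Placeholder prices in USD per 1M input tokens. These are NOT real rates;
# in the workflow they would come from the Google Sheet.
PRICE_PER_MILLION_INPUT = {
    "example-model-small": 1.00,
    "example-model-large": 10.00,
}

# Rule-of-thumb ratio for English text; an assumption, not a real tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate the token count from character length, rounding up."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_cost(text: str, model: str) -> float:
    """Estimated input cost in USD for sending `text` to `model`."""
    tokens = estimate_tokens(text)
    return tokens / 1_000_000 * PRICE_PER_MILLION_INPUT[model]
```

Text extracted from a PDF would go through the same two functions. Expect the estimate to drift for code or JSON-heavy input, since a character ratio ignores how each model actually tokenizes.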

The goal here: a ballpark idea of what it could be, so that if anyone is about to run something massive, they can have some form of human-in-the-loop opportunity. Especially with agentic AI agents just going off and doing whatever, while we just pay the bill and don't even realize how many tokens they sometimes use up lol.

Feel free to grab a copy and make it your own and adjust it around

The current version can be used both via form and via webhook. It uses pre-defined costs so that it's super fast and doesn't require hitting any endpoints or anything, as the first data was scraped from the web and put into spreadsheets.

You will need a Google Sheets OAuth connection, but then you're good to go.

Solomon

Thanks for the detailed explanation! Makes total sense — if the goal is a budget guardrail before a big run, pre-pulled pricing data from the spreadsheet is more than sufficient. No need to hit tokenize endpoints when an approximation gets you 90% of the value at zero cost.

The human-in-the-loop angle is spot on. Agentic pipelines especially can spiral fast without any checkpoints — a quick “this run might cost ~$X” prompt before execution is genuinely useful.
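A checkpoint like that could be sketched in a few lines of Python. This is only an illustration: the function name and the budget threshold are made up, and the estimate is assumed to come from wherever you get it (for example the workflow's webhook reply).

```python
def preflight_message(estimated_usd: float, budget_usd: float) -> tuple[bool, str]:
    """Return (needs_approval, message) for a run with the given estimate."""
    # Anything above the budget is flagged for a human to confirm.
    needs_approval = estimated_usd > budget_usd
    message = f"This run might cost ~${estimated_usd:.2f} (budget ${budget_usd:.2f})."
    if needs_approval:
        message += " Waiting for human approval before continuing."
    return needs_approval, message
```

An agent loop could call this before each expensive step and pause whenever the first value is `True`.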

Might fork this at some point and wire in tighter per-model tokenization for structured JSON / code-heavy prompts. Will share back if I do. Thanks for open-sourcing it!

Cheers!!