Hi

I have a problem with a flow where I need to pass a PDF to ChatGPT to read as part of a prompt.

I am following the description here: https://platform.openai.com/docs/guides/pdf-files?api-mode=responses

I have tested this with a local Python script and confirmed that this is the right approach for getting the model to handle the file correctly.

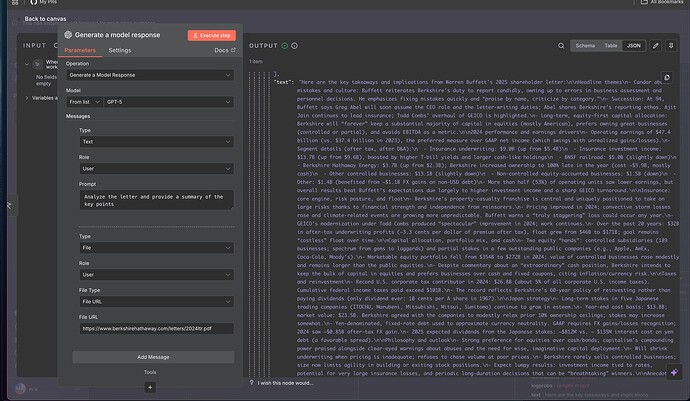
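
For reference, a minimal sketch of the kind of request body the linked guide describes, with the PDF sent as an input_file content part next to the prompt text. The helper name `build_pdf_request`, the default filename, and the model name are placeholders for illustration, not the exact script from the test above.

```python
import base64


def build_pdf_request(pdf_bytes: bytes, prompt: str,
                      filename: str = "document.pdf",
                      model: str = "gpt-4.1") -> dict:
    """Build a Responses API request body that passes a PDF as an input_file part.

    The model name and filename defaults are assumptions; swap in your own.
    """
    # The file is embedded inline as a base64 data URL.
    encoded = base64.b64encode(pdf_bytes).decode("ascii")
    return {
        "model": model,
        "input": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "input_file",
                        "filename": filename,
                        "file_data": f"data:application/pdf;base64,{encoded}",
                    },
                    {"type": "input_text", "text": prompt},
                ],
            }
        ],
    }
```

This dict is what would be sent as the JSON body of a POST to https://api.openai.com/v1/responses, whether from a script or from an HTTP call node.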

However, it seems that the OpenAI nodes for n8n do not support the input_file content type. They support input_image, but input_image does not accept PDF files (I also tested that).

Most examples for this use the text extract node to convert the PDF to text and then pass the text to the AI model. That is not what I am looking for, as it yields the wrong results. The PDFs I am working with have unusual layouts, and I need them to be passed through the “vision model”, where the AI considers not only the text but also its placement on the page, to get the right results (I also tested that).

Does anyone have any smart ideas?

I am aware that I can send it manually using an HTTP call node. I have done that with OpenAI in another case. The problem is that I then also need to parse the OpenAI output manually, which is possible but makes for some rather crazy code nodes (of course those are AI coded, but they still make anyone looking at the workflow pull a funny face).
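
To make the parsing concern concrete, here is a minimal sketch of pulling the answer text out of a raw Responses API JSON body. It assumes the response has an `output` list whose `message` items carry `output_text` parts; the function name `extract_output_text` is hypothetical, and other item types (reasoning, tool calls) are simply skipped.

```python
def extract_output_text(response: dict) -> str:
    """Concatenate all output_text parts from a Responses API JSON body.

    Assumes the shape: response["output"] is a list of items, and items of
    type "message" hold a "content" list of parts with "type" and "text".
    """
    chunks = []
    for item in response.get("output", []):
        # Skip anything that is not an assistant message (e.g. reasoning items).
        if item.get("type") != "message":
            continue
        for part in item.get("content", []):
            if part.get("type") == "output_text":
                chunks.append(part.get("text", ""))
    return "".join(chunks)
```

If the parsing really is this small for a given use case, the same loop could live in a single code node after the HTTP call; anything beyond plain text output (structured output, tool calls) would need more handling than this sketch covers.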