Describe the problem/error/question

Running Python code in a Code node causes an error. The failing node is the last one in the workflow below, which imports `requests` and `bs4`.

What is the error message (if any)?

Problem in node ‘Code in Python (Beta)’

RuntimeError: Blocked for security reasons

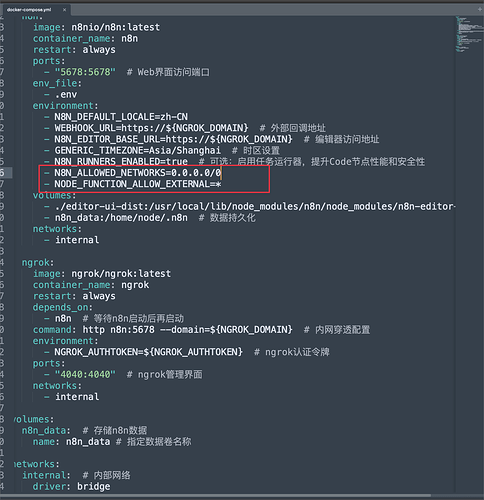

I’ve added the following to my Docker Compose configuration:

- N8N_ALLOWED_NETWORKS=0.0.0.0/0

- NODE_FUNCTION_ALLOW_EXTERNAL=*

I’ve also shut down and restarted Docker Compose, but the issue persists.
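One way to narrow this down is to check which import the sandbox rejects. The sketch below is a hypothetical diagnostic (the helper name `probe_imports` is my own, not part of n8n). It tries each module name and records either `ok` or the exception text. It assumes the error is raised at import time, which I have not confirmed, and `importlib` itself may be restricted in the sandbox; in that case plain `import` statements wrapped in `try`/`except` would serve the same purpose.

```python
import importlib


def probe_imports(module_names):
    """Try to import each module and report the outcome per name."""
    results = {}
    for name in module_names:
        try:
            importlib.import_module(name)
            results[name] = "ok"
        except Exception as exc:  # sandbox errors may not be ImportError
            results[name] = f"{type(exc).__name__}: {exc}"
    return results


# Standard-library modules plus the two third-party ones used in the failing node.
report = probe_imports(["datetime", "re", "requests", "bs4"])
for name, status in report.items():
    print(f"{name}: {status}")
```

Inside a Code node, the report could be returned as a single item, for example `return [{'json': report}]`, so the result shows up in the node output.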

Node type

n8n-nodes-base.code

Node version

2 (Latest)

n8n version

1.114.4 (Self Hosted)

Stack trace

Error: RuntimeError: Blocked for security reasons

at PythonSandbox.getPrettyError (/usr/local/lib/node_modules/n8n/node_modules/.pnpm/n8n-nodes-base@file+packages+nodes-base_@[email protected]_asn1.js@5_afd197edb2c1f848eae21a96a97fab23/node_modules/n8n-nodes-base/nodes/Code/PythonSandbox.ts:101:11)

at PythonSandbox.runCodeInPython (/usr/local/lib/node_modules/n8n/node_modules/.pnpm/n8n-nodes-base@file+packages+nodes-base_@[email protected]_asn1.js@5_afd197edb2c1f848eae21a96a97fab23/node_modules/n8n-nodes-base/nodes/Code/PythonSandbox.ts:85:15)

at PythonSandbox.runCodeAllItems (/usr/local/lib/node_modules/n8n/node_modules/.pnpm/n8n-nodes-base@file+packages+nodes-base_@[email protected]_asn1.js@5_afd197edb2c1f848eae21a96a97fab23/node_modules/n8n-nodes-base/nodes/Code/PythonSandbox.ts:46:27)

at ExecuteContext.execute (/usr/local/lib/node_modules/n8n/node_modules/.pnpm/n8n-nodes-base@file+packages+nodes-base_@[email protected]_asn1.js@5_afd197edb2c1f848eae21a96a97fab23/node_modules/n8n-nodes-base/nodes/Code/Code.node.ts:198:14)

at WorkflowExecute.executeNode (/usr/local/lib/node_modules/n8n/node_modules/.pnpm/n8n-core@file+packages+core_@[email protected]_@[email protected]_08b575bec2313d5d8a4cc75358971443/node_modules/n8n-core/src/execution-engine/workflow-execute.ts:1091:8)

at WorkflowExecute.runNode (/usr/local/lib/node_modules/n8n/node_modules/.pnpm/n8n-core@file+packages+core_@[email protected]_@[email protected]_08b575bec2313d5d8a4cc75358971443/node_modules/n8n-core/src/execution-engine/workflow-execute.ts:1272:11)

at /usr/local/lib/node_modules/n8n/node_modules/.pnpm/n8n-core@file+packages+core_@[email protected]_@[email protected]_08b575bec2313d5d8a4cc75358971443/node_modules/n8n-core/src/execution-engine/workflow-execute.ts:1673:27

at /usr/local/lib/node_modules/n8n/node_modules/.pnpm/n8n-core@file+packages+core_@[email protected]_@[email protected]_08b575bec2313d5d8a4cc75358971443/node_modules/n8n-core/src/execution-engine/workflow-execute.ts:2287:11

Please share your workflow

{

"nodes": [

{

"parameters": {},

"type": "n8n-nodes-base.manualTrigger",

"typeVersion": 1,

"position": [

0,

0

],

"id": "21617019-a62f-4f07-8dfb-aae30432e8d5",

"name": "When clicking ‘Execute workflow’"

},

{

"parameters": {

"url": "https://news.ycombinator.com/",

"options": {}

},

"type": "n8n-nodes-base.httpRequest",

"typeVersion": 4.2,

"position": [

224,

0

],

"id": "484be7b7-d24d-4223-9cfa-2c86ef591349",

"name": "HTTP Request"

},

{

"parameters": {

"operation": "extractHtmlContent",

"extractionValues": {

"values": [

{

"key": "序号",

"cssSelector": "tr .athing .rank",

"returnArray": true

},

{

"key": "文章标题",

"cssSelector": "tr .titleline >a",

"returnArray": true

},

{

"key": "项目ID待处理",

"cssSelector": "tr .subtext .age",

"returnArray": true

},

{

"key": "发布时间UTC待处理",

"cssSelector": "tr .subtext .age",

"returnValue": "attribute",

"attribute": "title",

"returnArray": true

},

{

"key": "打分",

"cssSelector": "tr .subtext .score",

"returnArray": true

},

{

"key": "评论数",

"cssSelector": ".subline > a:nth-last-of-type(1)",

"returnArray": true

},

{

"key": "文章链接",

"cssSelector": "tr .titleline >a",

"returnValue": "attribute",

"attribute": "href",

"returnArray": true

}

]

},

"options": {}

},

"type": "n8n-nodes-base.html",

"typeVersion": 1.2,

"position": [

432,

0

],

"id": "506bf0f5-a58d-4521-8a6c-65d4e095ad57",

"name": "HTML"

},

{

"parameters": {

"language": "python",

"pythonCode": "# Prepare an empty list to hold the processed items\nprocessed_items = []\n\n# --- Iterate over all input items ---\n# This loop runs once for each item (each row of data)\nfor item in _input.all():\n    # Safely read '评论链接' (comment link) from the current item's json data\n    comment_link = item.json.get('评论链接')\n\n    # Only create a new item when the link exists\n    if comment_link:\n        # --- Build a new item that contains only the '评论链接' field ---\n        new_item = {\n            'json': {\n                '评论链接': comment_link\n            }\n        }\n\n        # Append the newly created, clean item to the result list\n        processed_items.append(new_item)\n\n# Return the new list of separate items\nreturn processed_items"

},

"type": "n8n-nodes-base.code",

"typeVersion": 2,

"position": [

800,

192

],

"id": "1233e598-db7e-4adb-bf51-d213afde50e2",

"name": "保留评论链接"

},

{

"parameters": {

"language": "python",

"pythonCode": "from datetime import datetime, timedelta\nimport re\n\n# The input contains a single item, so this loop runs only once\nfor item in _input.all():\n    json_data = item.json\n\n    # --- 1. Process the '项目ID待处理' list ---\n    project_ids_raw_list = json_data.get('项目ID待处理', [])\n    processed_links = []  # New list to hold the processed results\n\n    # Inner loop over each link string in the list\n    for link_str in project_ids_raw_list:\n        try:\n            extracted_id = link_str.split('[')[1].split(']')[0]\n            full_link = f\"https://news.ycombinator.com/{extracted_id}\"\n            processed_links.append(full_link)\n        except (IndexError, AttributeError):\n            processed_links.append('格式错误')\n\n    # Replace the old field with the processed list under a new name\n    json_data['评论链接'] = processed_links\n    if '项目ID待处理' in json_data:\n        del json_data['项目ID待处理']\n\n    # --- 2. Process the '发布时间UTC待处理' list ---\n    publish_times_raw_list = json_data.get('发布时间UTC待处理', [])\n    processed_times = []\n\n    for time_str in publish_times_raw_list:\n        try:\n            time_part = time_str.split(' ')[0]\n            utc_time = datetime.strptime(time_part, '%Y-%m-%dT%H:%M:%S')\n            beijing_time = utc_time + timedelta(hours=8)\n            formatted_time = beijing_time.strftime('%Y-%m-%d %H:%M:%S')\n            processed_times.append(formatted_time)\n        except (ValueError, IndexError, AttributeError):\n            processed_times.append('时间格式错误')\n\n    json_data['北京发布时间'] = processed_times\n    if '发布时间UTC待处理' in json_data:\n        del json_data['发布时间UTC待处理']\n\n    # --- 3. Process the '打分' list ---\n    scores_raw_list = json_data.get('打分', [])\n    processed_scores = []\n\n    for score_str in scores_raw_list:\n        try:\n            # Cast score_str to a string in case the list holds None or another type\n            score_value = int(str(score_str).replace('points', '').strip())\n            processed_scores.append(score_value)\n        except (ValueError, AttributeError):\n            processed_scores.append(0)  # Fall back to 0 if the conversion fails\n\n    json_data['打分'] = processed_scores\n\n    # --- 4. Process the '评论数' list ---\n    comments_raw_list = json_data.get('评论数', [])\n    processed_comments = []\n\n    for comment_str in comments_raw_list:\n        match = re.search(r'\\d+', str(comment_str))\n        if match:\n            processed_comments.append(int(match.group(0)))\n        else:\n            processed_comments.append(0)\n\n    json_data['评论数'] = processed_comments\n\n# Return the modified items (still a single item)\nreturn _input.all()"

},

"type": "n8n-nodes-base.code",

"typeVersion": 2,

"position": [

640,

0

],

"id": "90479bc4-485d-4afb-8e1b-69954d2daa38",

"name": "网页内容"

},

{

"parameters": {

"language": "python",

"pythonCode": "# Import the required libraries\nimport requests\nfrom bs4 import BeautifulSoup\n\n# --- Define a reusable scraper function ---\ndef scrape_web_content(url):\n    \"\"\"\n    Take a URL, fetch the page, and return its HTML text.\n    Includes basic error handling and a timeout.\n    \"\"\"\n    # Check that the URL is valid\n    if not url or not isinstance(url, str) or not url.startswith('http'):\n        return \"无效或空的 URL\"\n\n    headers = {\n        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36'\n    }\n\n    try:\n        # Send the HTTP GET request\n        response = requests.get(url, headers=headers, timeout=10)\n        response.raise_for_status()  # Raise an exception if the request failed (e.g. 404, 503)\n\n        # Return the response text (HTML) directly\n        return response.text\n\n    except requests.exceptions.RequestException as e:\n        return f\"爬取失败: {e}\"\n    except Exception as e:\n        return f\"处理时发生未知错误: {e}\"\n\n# --- Main logic: iterate over the n8n input items ---\n# This loop runs once for each item (each row of data)\nfor item in _input.all():\n    json_data = item.json\n\n    # 1. Read '评论链接' from the current item\n    comment_url = json_data.get('评论链接')\n\n    # 2. Call the scraper function to fetch the content\n    comment_content = scrape_web_content(comment_url)\n\n    # 3. Add the fetched content to the item's json data as a new field '评论内容'\n    item.json['评论内容'] = comment_content\n\n# Return all the modified items\nreturn _input.all()"

},

"type": "n8n-nodes-base.code",

"typeVersion": 2,

"position": [

1008,

192

],

"id": "868d0b9a-7184-4085-8e02-961a4f2efd5d",

"name": "Code in Python (Beta)"

}

],

"connections": {

"When clicking ‘Execute workflow’": {

"main": [

[

{

"node": "HTTP Request",

"type": "main",

"index": 0

}

]

]

},

"HTTP Request": {

"main": [

[

{

"node": "HTML",

"type": "main",

"index": 0

}

]

]

},

"HTML": {

"main": [

[

{

"node": "网页内容",

"type": "main",

"index": 0

}

]

]

},

"保留评论链接": {

"main": [

[

{

"node": "Code in Python (Beta)",

"type": "main",

"index": 0

}

]

]

},

"网页内容": {

"main": [

[

{

"node": "保留评论链接",

"type": "main",

"index": 0

}

]

]

}

},

"pinData": {

"网页内容": [

{

"序号": [

"1.",

"2.",

"3.",

"4.",

"5.",

"6.",

"7.",

"8.",

"9.",

"10.",

"11.",

"12.",

"13.",

"14.",

"15.",

"16.",

"17.",

"18.",

"19.",

"20.",

"21.",

"22.",

"23.",

"24.",

"25.",

"26.",

"27.",

"28.",

"29.",

"30."

],

"文章标题": [

"Meta Superintelligence's surprising first paper",

"Pipelining in psql (PostgreSQL 18)",

"The Flummoxagon",

"Show HN: Rift – A tiling window manager for macOS",

"Show HN: Sober not Sorry – free iOS tracker to help you quit bad habits",

"I/O Multiplexing (select vs. poll vs. epoll/kqueue)",

"4x faster LLM inference (Flash Attention guy's company)",

"Coral Protocol: Open infrastructure connecting the internet of agents",

"Ask HN: Abandoned/dead projects you think died before their time and why?",

"Anthropic's Prompt Engineering Tutorial",

"Vancouver Stock Exchange: Scam capital of the world (1989) [pdf]",

"The World's 2.75B Buildings",

"Show HN: A Lisp Interpreter for Shell Scripting",

"Paper2Video: Automatic Video Generation from Scientific Papers",

"A Guide for WireGuard VPN Setup with Pi-Hole Adblock and Unbound DNS",

"Microsoft only lets you opt out of AI photo scanning 3x a year",

"CamoLeak: Critical GitHub Copilot Vulnerability Leaks Private Source Code",

"Testing two 18 TB white label SATA hard drives from datablocks.dev",

"LineageOS 23",

"Windows Subsystem for FreeBSD",

"Show HN: I made an esoteric programming language that's read like a spellbook",

"Superpowers: How I'm using coding agents in October 2025",

"Google blocks Android hack that let Pixel users enable VoLTE anywhere",

"The <output> Tag",

"The World Trade Center under construction through photos, 1966-1979",

"How Apple designs a virtual knob (2012)",

"Floating Electrons on a Sea of Helium",

"Vibing a non-trivial Ghostty feature",

"Beyond indexes: How open table formats optimize query performance",

"Spyware maker NSO Group confirms acquisition by US investors"

],

"打分": [

301,

90,

39,

144,

29,

56,

7,

26,

135,

167,

94,

61,

59,

53,

88,

629,

44,

181,

240,

261,

16,

361,

164,

768,

217,

143,

8,

270,

64,

108

],

"评论数": [

160,

13,

5,

65,

23,

12,

0,

4,

414,

18,

41,

23,

8,

12,

10,

217,

9,

108,

93,

107,

1,

191,

58,

171,

105,

91,

0,

125,

3,

72

],

"文章链接": [

"https://paddedinputs.substack.com/p/meta-superintelligences-surprising",

"https://postgresql.verite.pro/blog/2025/10/01/psql-pipeline.html",

"https://n-e-r-v-o-u-s.com/blog/?p=9827",

"https://github.com/acsandmann/rift",

"https://sobernotsorry.app/",

"https://nima101.github.io/io_multiplexing",

"https://www.together.ai/blog/adaptive-learning-speculator-system-atlas",

"https://arxiv.org/abs/2505.00749",

"item?id=45553132",

"https://github.com/anthropics/prompt-eng-interactive-tutorial",

"https://scamcouver.wordpress.com/wp-content/uploads/2012/04/scam-capital.pdf",

"https://tech.marksblogg.com/building-footprints-gba.html",

"https://github.com/gue-ni/redstart",

"https://arxiv.org/abs/2510.05096",

"https://psyonik.tech/posts/a-guide-for-wireguard-vpn-setup-with-pi-hole-adblock-and-unbound-dns/",

"https://hardware.slashdot.org/story/25/10/11/0238213/microsofts-onedrive-begins-testing-face-recognizing-ai-for-photos-for-some-preview-users",

"https://www.legitsecurity.com/blog/camoleak-critical-github-copilot-vulnerability-leaks-private-source-code",

"https://ounapuu.ee/posts/2025/10/06/datablocks-white-label-drives/",

"https://lineageos.org/Changelog-30/",

"https://github.com/BalajeS/WSL-For-FreeBSD",

"https://github.com/sirbread/spellscript",

"https://blog.fsck.com/2025/10/09/superpowers/",

"https://www.androidauthority.com/pixel-ims-broken-october-update-3606444/",

"https://denodell.com/blog/html-best-kept-secret-output-tag",

"https://rarehistoricalphotos.com/twin-towers-construction-photographs/",

"https://jherrm.github.io/knobs/",

"https://arstechnica.com/science/2025/10/new-qubit-tech-traps-single-electrons-on-liquid-helium/",

"https://mitchellh.com/writing/non-trivial-vibing",

"https://jack-vanlightly.com/blog/2025/10/8/beyond-indexes-how-open-table-formats-optimize-query-performance",

"https://techcrunch.com/2025/10/10/spyware-maker-nso-group-confirms-acquisition-by-us-investors/"

],

"评论链接": [

"https://news.ycombinator.com/item?id=45553577",

"https://news.ycombinator.com/item?id=45555308",

"https://news.ycombinator.com/item?id=45504540",

"https://news.ycombinator.com/item?id=45553995",

"https://news.ycombinator.com/item?id=45555873",

"https://news.ycombinator.com/item?id=45523370",

"https://news.ycombinator.com/item?id=45556474",

"https://news.ycombinator.com/item?id=45555012",

"https://news.ycombinator.com/item?id=45553132",

"https://news.ycombinator.com/item?id=45551260",

"https://news.ycombinator.com/item?id=45553783",

"https://news.ycombinator.com/item?id=45506055",

"https://news.ycombinator.com/item?id=45520585",

"https://news.ycombinator.com/item?id=45553701",

"https://news.ycombinator.com/item?id=45552049",

"https://news.ycombinator.com/item?id=45551504",

"https://news.ycombinator.com/item?id=45553422",

"https://news.ycombinator.com/item?id=45489497",

"https://news.ycombinator.com/item?id=45553835",

"https://news.ycombinator.com/item?id=45547359",

"https://news.ycombinator.com/item?id=45555523",

"https://news.ycombinator.com/item?id=45547344",

"https://news.ycombinator.com/item?id=45553764",

"https://news.ycombinator.com/item?id=45547566",

"https://news.ycombinator.com/item?id=45498055",

"https://news.ycombinator.com/item?id=45506748",

"https://news.ycombinator.com/item?id=45515842",

"https://news.ycombinator.com/item?id=45549434",

"https://news.ycombinator.com/item?id=45516964",

"https://news.ycombinator.com/item?id=45555570"

],

"北京发布时间": [

"2025-10-12 07:16:05",

"2025-10-12 12:46:57",

"2025-10-07 23:40:09",

"2025-10-12 08:22:15",

"2025-10-12 14:46:58",

"2025-10-09 12:06:43",

"2025-10-12 16:37:01",

"2025-10-12 11:41:29",

"2025-10-12 06:16:18",

"2025-10-12 02:06:40",

"2025-10-12 07:43:42",

"2025-10-08 01:26:20",

"2025-10-09 04:58:37",

"2025-10-12 07:32:25",

"2025-10-12 03:41:27",

"2025-10-12 02:36:51",

"2025-10-12 06:58:30",

"2025-10-06 17:36:08",

"2025-10-12 07:53:17",

"2025-10-11 15:32:08",

"2025-10-12 13:31:00",

"2025-10-11 15:29:23",

"2025-10-12 07:41:00",

"2025-10-11 16:27:26",

"2025-10-07 08:40:26",

"2025-10-08 02:19:37",

"2025-10-08 21:18:25",

"2025-10-11 22:31:15",

"2025-10-08 23:01:06",

"2025-10-12 13:39:22"

]

}

]

},

"meta": {

"instanceId": "4c3a751e7d3935ecf344bab5378e53107b88fe3e154ce293c458a770a6ca1bdd"

}

}
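Separately from the security error, the pinned data shows `评论链接` as a single list of 30 URLs inside one item, while `scrape_web_content` in the last node only accepts a string that starts with `http`. If that list reaches the last node unchanged, the function would presumably return the "无效或空的 URL" message instead of fetching anything. A minimal sketch of splitting the list into one item per link is below. It uses plain dicts rather than n8n's item objects so it can run outside n8n, and the helper name `split_links` is hypothetical.

```python
def split_links(items, key="评论链接"):
    """Return one {'json': {key: link}} item per link found in the input items."""
    out = []
    for item in items:
        value = item.get("json", {}).get(key)
        if not value:
            continue
        # Accept either a single string or a list of strings.
        links = value if isinstance(value, list) else [value]
        for link in links:
            out.append({"json": {key: link}})
    return out


# Sample input shaped like the pinned data, shortened to two links.
sample = [{"json": {"评论链接": [
    "https://news.ycombinator.com/item?id=45553577",
    "https://news.ycombinator.com/item?id=45555308",
]}}]
for new_item in split_links(sample):
    print(new_item["json"]["评论链接"])
```

In the Code node itself the same loop would read from `item.json` on each entry of `_input.all()` and return the resulting list.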

Share the output returned by the last node

Information on your n8n setup

- **n8n version:** 1.114.4

- **Database (default: SQLite):** SQLite

- **n8n EXECUTIONS_PROCESS setting (default: own, main):** own

- **Running n8n via (Docker, npm, n8n cloud, desktop app):** Docker

- **Operating system:** macOS Sequoia 15.6.1