I have a webhook that receives the same data several times, only milliseconds apart, and I need to process only the first request. When a request arrives, I write the data to a doc so that later requests can check whether it has already been seen, but the requests arrive so quickly that the duplicate passes the check before the first write has finished, and the same data is processed twice.

Welcome to the community!

You can use something like RabbitMQ to queue all incoming requests.

In the processor workflow, make sure it is set to handle only one message at a time. That way each request can be checked against the ones already processed, and duplicates can be discarded.
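To illustrate why one-at-a-time processing helps, here is a minimal in-memory sketch in Python. It is not RabbitMQ or n8n code: the standard-library `queue.Queue` stands in for the message queue, a single worker thread stands in for the processor workflow, and the function name `process_serially` and the use of `checkout_charge_id` as the duplicate key are assumptions for the example.

```python
import queue
import threading

def process_serially(payloads, key_field="checkout_charge_id"):
    """Handle payloads one at a time and keep only the first per key."""
    jobs = queue.Queue()
    seen = set()        # keys that have already been processed
    accepted = []       # payloads that were not duplicates

    def worker():
        while True:
            item = jobs.get()
            if item is None:          # sentinel: no more work
                break
            key = item[key_field]
            if key not in seen:       # only this thread touches `seen`
                seen.add(key)
                accepted.append(item)

    consumer = threading.Thread(target=worker)
    consumer.start()
    for payload in payloads:          # stand-in for webhook calls arriving
        jobs.put(payload)
    jobs.put(None)
    consumer.join()
    return accepted
```

Because only one consumer reads from the queue, the check and the write for one request always finish before the next request is looked at, so two near-simultaneous duplicates cannot both pass the check.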

What worked for me in a similar situation was adding a “WAIT” node (2 or 5 seconds) to the workflow. That gives all the fast webhooks time to arrive. You write the data to a sheet anyway, so the delay gives you time to check for duplicates and then ignore them.

Thank you very much for the answer. A queue sounds good: ideally the flow would finish one task before moving on to the next, because the data arrives so quickly that I do not manage to write it to Excel before the next request checks for it.

I will try what you recommended and let you know how it goes.

This was the first thing I tried, but since the data arrives almost at the same time, the first request has not yet been written to Excel when the next one passes the check, so the duplicate is still created.

Anyway, thank you very much for your answer.

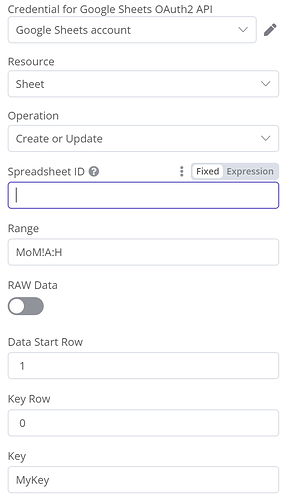

In that case, I would update the sheet like an Upsert operation using a key - whether that is a timestamp or a combination/hash of a few fields that form the key and can detect the duplicate.
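A rough sketch of the keyed upsert idea in Python, with a plain dict standing in for the sheet. The helper names `make_key` and `upsert` are made up for the example, and which fields go into the key is an assumption that depends on your payload.

```python
import hashlib
import json

def make_key(payload, fields):
    """Build a stable key by hashing the chosen fields of a payload."""
    subset = {f: payload.get(f) for f in fields}
    raw = json.dumps(subset, sort_keys=True, default=str)
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()

def upsert(sheet, payload, fields):
    """Insert or overwrite the row for this key; return True if it was new."""
    key = make_key(payload, fields)
    is_new = key not in sheet
    sheet[key] = payload      # same key -> same row, so no second row appears
    return is_new
```

The point is that a duplicate lands on the same row instead of creating a new one, so the result is the same even if both requests get through. In a real sheet, the update-or-insert has to be done by the sheet operation itself for this to hold.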

OR

you can write another workflow that cleans up dups after the fact.
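For the cleanup route, the logic could look something like this Python sketch. It assumes the rows have already been read from the sheet as a list of dicts and that one column (`key_field`, a placeholder name) identifies a duplicate; reading from and writing back to the sheet is left out.

```python
def remove_duplicates(rows, key_field):
    """Return rows with only the first occurrence of each key, in order."""
    seen = set()
    unique = []
    for row in rows:
        key = row.get(key_field)
        if key in seen:
            continue          # a later copy of a row we already kept
        seen.add(key)
        unique.append(row)
    return unique
```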

It will depend on the content of webhook payload.

Hope that gives you some ideas if you haven't thought about them already!

I see you have a checkout_charge_id. That may be unique on its own, or unique in combination with the email. If you get a ton of checkouts from the same email, you can add the HH:MM (and maybe not the SS) of the order time to a column in the sheet as the key, and then update/insert into the sheet instead of appending.

I have done something like that and it should work
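As a sketch, the key column could be built like this in Python. The function name `order_key` and the `|` separator are made up for the example, and including the date next to HH:MM is my own addition so that orders on different days do not collide.

```python
from datetime import datetime

def order_key(charge_id, email, ordered_at):
    """Combine charge id, email and the order time truncated to the minute."""
    minute = ordered_at.strftime("%Y-%m-%d %H:%M")   # seconds dropped on purpose
    return f"{charge_id}|{email.strip().lower()}|{minute}"
```

One caveat: two copies that arrive on either side of a minute boundary (for example 10:15:59 and 10:16:00) would get different keys, so if checkout_charge_id is unique on its own it is safer to use it alone.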

It is a good idea to unify the data, but the main problem is that after receiving the data I send it on through another webhook, so I still get duplicates when adding the data to Excel, since the requests are only milliseconds apart.

From what I checked, RabbitMQ has to be installed, and I am using the web version of n8n, where installing extra software is not an option, so it is not a solution I can use.

There are hosted RabbitMQ services online as well.