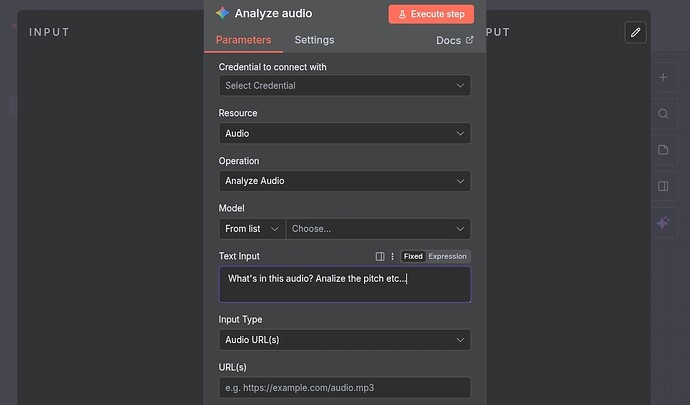

I’m trying to set up a flow that catches audio files of voice actors from emails/forms/whatever and sends them to GPT for voice analysis.

I don’t want to transcribe the text, I want GPT to tell me how the voice sounds in terms of tone, timbre, gender, pitch, etc.

Now it’s been a pain so far because it seems every audio related node or flow I try is about audio. transcription or generation, but not to simply listen to the audio. I have accomplished this in ChatGPT manually and it works, now I want to automate it in n8n.

I’ve tried various OpenAI methods, like message a model, upload a file, etc. but it seems mp3 is not an accepted file format (or any other audio format) or, depending on which model I chose, it tells me “The requested model ‘gpt-4o-audio-preview’ is not supported with the Responses API.”

Does anyone know a way to submit audio files to any GPT model for this purpose?

Please share your workflow

Share the output returned by the last node

Bad request - please check your parameters

Invalid input: Expected context stuffing file type to be a supported format: .art, .bat, .brf, .c, .cls, .css, .diff, .eml, .es, .h, .hs, .htm, .html, .ics, .ifb, .java, .js, .json, .ksh, .ltx, .mail, .markdown, .md, .mht, .mhtml, .mjs, .nws, .patch, .pdf, .pl, .pm, .pot, .py, .scala, .sh, .shtml, .srt, .sty, .tex, .text, .txt, .vcf, .vtt, .xml, .yaml, .yml but got .mp3.

Information on your n8n setup

- n8n version: self-hosted

- Database (default: SQLite): default

- n8n EXECUTIONS_PROCESS setting (default: own, main):

- Running n8n via (Docker, npm, n8n cloud, desktop app): npm

- Operating system: macOS