The AI agent seems to lose its memory suddenly from time to time. If I ping it a few more times and ask it to think harder, it sometimes remembers what was discussed earlier and continues the conversation.

Describe the problem/error/question

For example, I have a prompt with the following steps:

1. Ask “Please enter the entry to look for.” The format is always xx/yy. Examples are 1/2 and 3/4.

2. Check whether the input is in the format xx/yy. If not, say “Format is incorrect.” and go back to step 1. Otherwise, store 1 = xx and 2 = yy, say “Acknowledged 1/2 is received.”, and proceed to step 3.

3. Use the “file lookup” tool to see if 1/2 is in the file. If not, say “I cannot find the information.” and go back to step 1. Otherwise, return the information from the file, which includes some further mandatory information the user needs to provide.

4. Validate the user input. If all mandatory information has been collected, proceed to another tool to create the entry.
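To make the format check in step 2 concrete, here is a minimal sketch of what I expect it to do. It assumes xx and yy are both groups of digits, as in the examples 1/2 and 3/4. The names `ENTRY_PATTERN` and `parse_entry` are made up for illustration and are not part of my workflow or of n8n.

```python
import re

# Assumption: xx and yy are each one or more digits, separated by one slash.
ENTRY_PATTERN = re.compile(r"(\d+)/(\d+)")


def parse_entry(text):
    """Return (xx, yy) if text is in the xx/yy format, otherwise None."""
    match = ENTRY_PATTERN.fullmatch(text.strip())
    if match is None:
        return None
    return match.group(1), match.group(2)


if __name__ == "__main__":
    for sample in ["1/2", "3/4", "123"]:
        print(sample, "->", parse_entry(sample))
```

Under this assumption, `1/2` is accepted and `123` is rejected, which is the behaviour the prompt asks the agent to follow.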

The problem is that right after I build the workflow, the first run works perfectly. Afterwards, roughly 9 times out of 10, the Simple Memory node suddenly cuts short and forgets everything that was discussed.

For example:

Human: hi

AI: Please enter the entry to look for.

Human: 123

AI: Format is incorrect. Please enter the entry to look for.

Human: 1/2

AI: Acknowledged 1 is xx and 2 is yy. We found the following mandatory information. Please fill in:

Address:

Contact Number:

Birthday:

Human: Address is 135 road, London. Contact Number: 0112345. Birthday is Jan 1, 1970.

AI: Format is incorrect. Please enter the entry to look for.

Here is the problem: the AI suddenly loses all previous memory and goes back to step 1. I can see in the Simple Memory node that the chat history is gone. But sometimes, if I keep sending messages such as “you think again?” or “think harder”, the past conversation in the session shows up again and the agent proceeds normally. The point at which it loses memory varies every time, but it always happens at some point after the first time I test the flow.

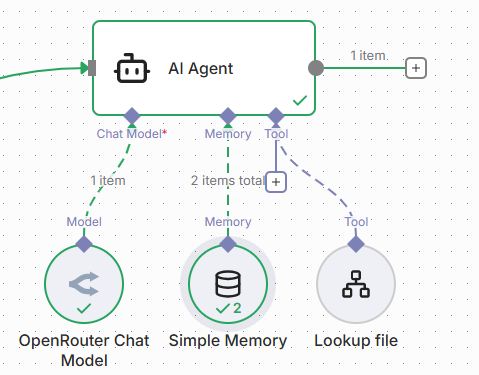

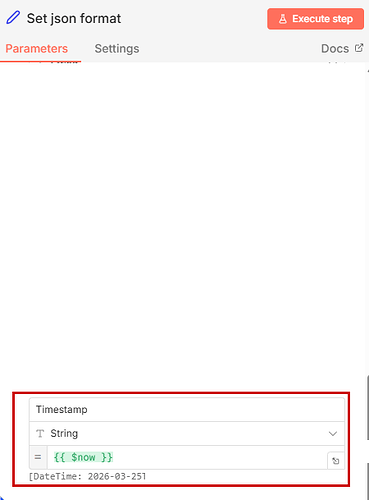

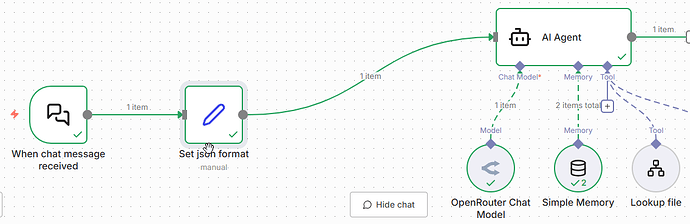

I read that this could be a caching issue that particularly affects the memory node: n8n supposedly skips the memory node if it detects that nothing has changed. I am not sure how to make sure the memory node is called every time, so that memory is not lost within the same session.

The Simple Memory node's context window length is already set to 6000, which I think is high enough.
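To show why I think the window length is not the cause, here is a rough sketch of how I understand a windowed chat memory to behave. This is only my mental model, not n8n's actual implementation: the class `WindowMemory` and its methods are hypothetical, and I am assuming the window counts past exchanges and that history is stored per session ID.

```python
from collections import defaultdict, deque


class WindowMemory:
    """Hypothetical per-session memory that keeps the last N exchanges."""

    def __init__(self, window_length):
        self.window_length = window_length
        # One bounded buffer per session; old exchanges drop off the front.
        self._sessions = defaultdict(lambda: deque(maxlen=window_length))

    def add_exchange(self, session_id, human, ai):
        self._sessions[session_id].append((human, ai))

    def history(self, session_id):
        return list(self._sessions[session_id])


if __name__ == "__main__":
    memory = WindowMemory(window_length=6000)
    memory.add_exchange("session-a", "hi", "Please enter the entry to look for.")
    memory.add_exchange("session-a", "123", "Format is incorrect.")
    memory.add_exchange("session-a", "1/2", "Acknowledged 1/2 is received.")
    print(len(memory.history("session-a")))  # all exchanges still present
    print(len(memory.history("session-b")))  # a different session sees nothing
```

In this model, a conversation of three or four exchanges is nowhere near a window of 6000, so truncation alone should not empty the history. The history would only look empty if the memory were read under a different session ID or not read at all, which is why I suspect the node is being skipped.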

Thanks!