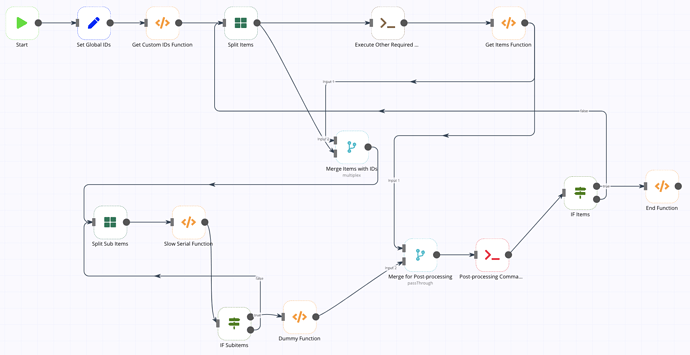

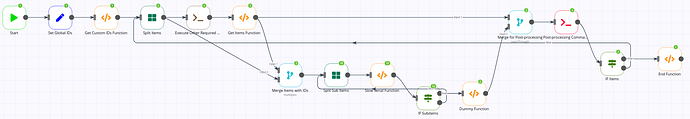

Hi - I have been using the SplitInBatches node successfully to model a process that cannot be run in parallel (see more details here).

My question is whether it is possible for a SplitInBatches node to receive data multiple times during the execution of the workflow.

I have what could be described as two nested SplitInBatches nodes: the outer one receives items and generates sub-items, which are then processed by the inner one. The issue I am facing is that the workflow execution stops once it reaches the inner SplitInBatches node the second time (workflow JSON below).

One alternative I have considered is defining the processing of a single item (with its sub-items) as its own workflow, and then having a second workflow call it once per item. I think I would need a way to pass parameters that flag which item to process.
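
To make that alternative concrete, here is a rough sketch of the shape I have in mind, written in plain JavaScript. The two functions only stand in for the two workflows; `processSingleItem`, `processAllItems` and the `params` object are names I made up for illustration, not n8n API.

```javascript
// Hypothetical model of the two-workflow alternative. Plain functions stand in
// for workflows; none of these names come from n8n.

// Child "workflow": handles exactly one item and all of its sub-items, in order.
async function processSingleItem(params) {
  const { itemId, subItemIds } = params;
  const done = [];
  for (const subItemId of subItemIds) {
    // Placeholder for the slow step that must not run in parallel.
    await new Promise((resolve) => setTimeout(resolve, 10));
    done.push(`${itemId}/${subItemId}`);
  }
  return { itemId, done };
}

// Parent "workflow": calls the child once per item and passes, as a parameter,
// which item the child should process.
async function processAllItems(itemIds, subItemIds) {
  const results = [];
  for (const itemId of itemIds) {
    // Awaiting here keeps the child runs strictly sequential.
    results.push(await processSingleItem({ itemId, subItemIds }));
  }
  return results;
}

processAllItems(["c1", "c3"], ["id100", "id101"]).then((results) => {
  console.log(JSON.stringify(results));
});
```

The part I am unsure about is how to pass the equivalent of `params` from the calling workflow to the called one.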

{
  "name": "Merge Two Split In Batches Demo",
  "nodes": [
    {
      "parameters": {},
      "name": "Start",
      "type": "n8n-nodes-base.start",
      "typeVersion": 1,
      "position": [-90, 300]
    },
    {
      "parameters": {
        "values": {
          "string": [
            { "name": "globalId1", "value": "g1" },
            { "name": "globalId2", "value": "g2" }
          ]
        },
        "options": {}
      },
      "name": "Set Global IDs",
      "type": "n8n-nodes-base.set",
      "typeVersion": 1,
      "position": [130, 300]
    },
    {
      "parameters": {
        "command": "echo \"discard this\""
      },
      "name": "Execute Other Required Command",
      "type": "n8n-nodes-base.executeCommand",
      "typeVersion": 1,
      "position": [1030, 300]
    },
    {
      "parameters": {
        "functionCode": "return [\n {\n json: {\n itemId: \"id100\",\n },\n },\n {\n json: {\n itemId: \"id101\",\n },\n },\n {\n json: {\n itemId: \"id102\",\n },\n },\n {\n json: {\n itemId: \"id103\",\n },\n },\n {\n json: {\n itemId: \"id104\",\n },\n },\n];"
      },
      "name": "Get Items Function",
      "type": "n8n-nodes-base.function",
      "typeVersion": 1,
      "position": [1400, 300]
    },
    {
      "parameters": {
        "mode": "multiplex"
      },
      "name": "Merge Items with IDs",
      "type": "n8n-nodes-base.merge",
      "typeVersion": 1,
      "position": [920, 680]
    },
    {
      "parameters": {
        "mode": "passThrough"
      },
      "name": "Merge for Post-processing",
      "type": "n8n-nodes-base.merge",
      "typeVersion": 1,
      "position": [1500, 860]
    },
    {
      "parameters": {
        "executeOnce": false,
        "command": "=echo \"Processed {{$node[\"Merge for Post-processing\"].json[\"itemId\"]}}\""
      },
      "name": "Post-processing Command",
      "type": "n8n-nodes-base.executeCommand",
      "typeVersion": 1,
      "position": [1810, 860],
      "color": "#F90538"
    },
    {
      "parameters": {
        "functionCode": "const waitInSeconds = 1;\nitems[0].json.completed = true;\n\nreturn new Promise((resolve) => {\n // Simulate long running process\n setTimeout(() => {\n resolve(items);\n }, waitInSeconds * 1000) \n});\n"
      },
      "name": "Slow Serial Function",
      "type": "n8n-nodes-base.function",
      "typeVersion": 1,
      "position": [650, 910]
    },
    {
      "parameters": {
        "functionCode": "return [];"
      },
      "name": "Dummy Function",
      "type": "n8n-nodes-base.function",
      "typeVersion": 1,
      "position": [1200, 1040]
    },
    {
      "parameters": {
        "functionCode": "const newItems = [\n {\n customId1: \"c1\",\n customId2: \"c2\",\n },\n {\n customId1: \"c3\",\n customId2: \"c4\",\n },\n];\n\nconst existingData = items[0].json;\nreturn newItems.map(newItem => {\n return {\n json: {...newItem, ...existingData},\n };\n});\n"
      },
      "name": "Get Custom IDs Function",
      "type": "n8n-nodes-base.function",
      "typeVersion": 1,
      "position": [340, 300]
    },
    {
      "parameters": {
        "batchSize": 1
      },
      "name": "Split Sub Items",
      "type": "n8n-nodes-base.splitInBatches",
      "typeVersion": 1,
      "position": [410, 910]
    },
    {
      "parameters": {
        "batchSize": 1
      },
      "name": "Split Items",
      "type": "n8n-nodes-base.splitInBatches",
      "typeVersion": 1,
      "position": [580, 300]
    },
    {
      "parameters": {
        "conditions": {
          "boolean": [
            {
              "value1": "={{$node[\"Split Sub Items\"].context[\"noItemsLeft\"]}}",
              "value2": true
            }
          ]
        }
      },
      "name": "IF Subitems",
      "type": "n8n-nodes-base.if",
      "typeVersion": 1,
      "position": [830, 1160]
    },
    {
      "parameters": {
        "conditions": {
          "boolean": [
            {
              "value1": "={{$node[\"Split Items\"].context[\"noItemsLeft\"]}}",
              "value2": true
            }
          ]
        }
      },
      "name": "IF Items",
      "type": "n8n-nodes-base.if",
      "typeVersion": 1,
      "position": [2000, 860]
    },
    {
      "parameters": {
        "functionCode": "return [];"
      },
      "name": "End Function",
      "type": "n8n-nodes-base.function",
      "typeVersion": 1,
      "position": [2230, 840]
    }
  ],
  "connections": {
    "Start": {
      "main": [
        [
          { "node": "Set Global IDs", "type": "main", "index": 0 }
        ]
      ]
    },
    "Set Global IDs": {
      "main": [
        [
          { "node": "Get Custom IDs Function", "type": "main", "index": 0 }
        ]
      ]
    },
    "Execute Other Required Command": {
      "main": [
        [
          { "node": "Get Items Function", "type": "main", "index": 0 }
        ]
      ]
    },
    "Get Items Function": {
      "main": [
        [
          { "node": "Merge Items with IDs", "type": "main", "index": 0 },
          { "node": "Merge for Post-processing", "type": "main", "index": 0 }
        ]
      ]
    },
    "Merge Items with IDs": {
      "main": [
        [
          { "node": "Split Sub Items", "type": "main", "index": 0 }
        ]
      ]
    },
    "Merge for Post-processing": {
      "main": [
        [
          { "node": "Post-processing Command", "type": "main", "index": 0 }
        ]
      ]
    },
    "Slow Serial Function": {
      "main": [
        [
          { "node": "IF Subitems", "type": "main", "index": 0 }
        ]
      ]
    },
    "Dummy Function": {
      "main": [
        [
          { "node": "Merge for Post-processing", "type": "main", "index": 1 }
        ]
      ]
    },
    "Split Sub Items": {
      "main": [
        [
          { "node": "Slow Serial Function", "type": "main", "index": 0 }
        ]
      ]
    },
    "Get Custom IDs Function": {
      "main": [
        [
          { "node": "Split Items", "type": "main", "index": 0 }
        ]
      ]
    },
    "Split Items": {
      "main": [
        [
          { "node": "Execute Other Required Command", "type": "main", "index": 0 },
          { "node": "Merge Items with IDs", "type": "main", "index": 1 }
        ]
      ]
    },
    "IF Subitems": {
      "main": [
        [
          { "node": "Dummy Function", "type": "main", "index": 0 }
        ],
        [
          { "node": "Split Sub Items", "type": "main", "index": 0 }
        ]
      ]
    },
    "Post-processing Command": {
      "main": [
        [
          { "node": "IF Items", "type": "main", "index": 0 }
        ]
      ]
    },
    "IF Items": {
      "main": [
        [
          { "node": "End Function", "type": "main", "index": 0 }
        ],
        [
          { "node": "Split Items", "type": "main", "index": 0 }
        ]
      ]
    }
  },
  "active": false,
  "settings": {},
  "id": "16"
}
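
For reference, this is the behaviour I am hoping to get from the workflow above, sketched in plain JavaScript rather than n8n nodes. The data mirrors what the Function nodes return; the function names (`slowSerialFunction`, `runNestedBatches`) are stand-ins I made up for the nodes, and the delay is shortened from 1 second to 10 ms.

```javascript
// Plain-JavaScript model of the intended flow. These names are stand-ins
// for the workflow nodes, not n8n API calls.

// Two outer items, as "Get Custom IDs Function" produces them.
const outerItems = [
  { customId1: "c1", customId2: "c2", globalId1: "g1", globalId2: "g2" },
  { customId1: "c3", customId2: "c4", globalId1: "g1", globalId2: "g2" },
];

// Five IDs, as "Get Items Function" returns them on every outer pass.
const itemIds = ["id100", "id101", "id102", "id103", "id104"];

// Stand-in for "Slow Serial Function": marks the sub-item as completed after a delay.
function slowSerialFunction(subItem, delayMs = 10) {
  return new Promise((resolve) => {
    setTimeout(() => resolve({ ...subItem, completed: true }), delayMs);
  });
}

async function runNestedBatches(outer, ids) {
  const processed = [];
  // Outer loop ("Split Items", batch size 1).
  for (const outerItem of outer) {
    // "Merge Items with IDs" (multiplex): pair every ID with the current outer item.
    const subItems = ids.map((itemId) => ({ itemId, ...outerItem }));
    // Inner loop ("Split Sub Items", batch size 1): finish one sub-item before the next.
    for (const subItem of subItems) {
      processed.push(await slowSerialFunction(subItem));
    }
  }
  return processed;
}

runNestedBatches(outerItems, itemIds).then((processed) => {
  console.log(`Processed ${processed.length} sub-items`);
});
```

With two outer items and five sub-items each, I would expect ten sub-items to be processed in total. In the actual workflow the execution stops when the inner node is reached for the second outer item.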