Describe the problem/error/question

Context

I built an AI Agent in n8n that answers user questions using RAG (Retrieval Augmented Generation).

The goal is to ensure the agent replies strictly based on a Google Sheet that contains predefined Questions & Answers.

Architecture

Google Sheet → contains Q&A pairs (source of truth)

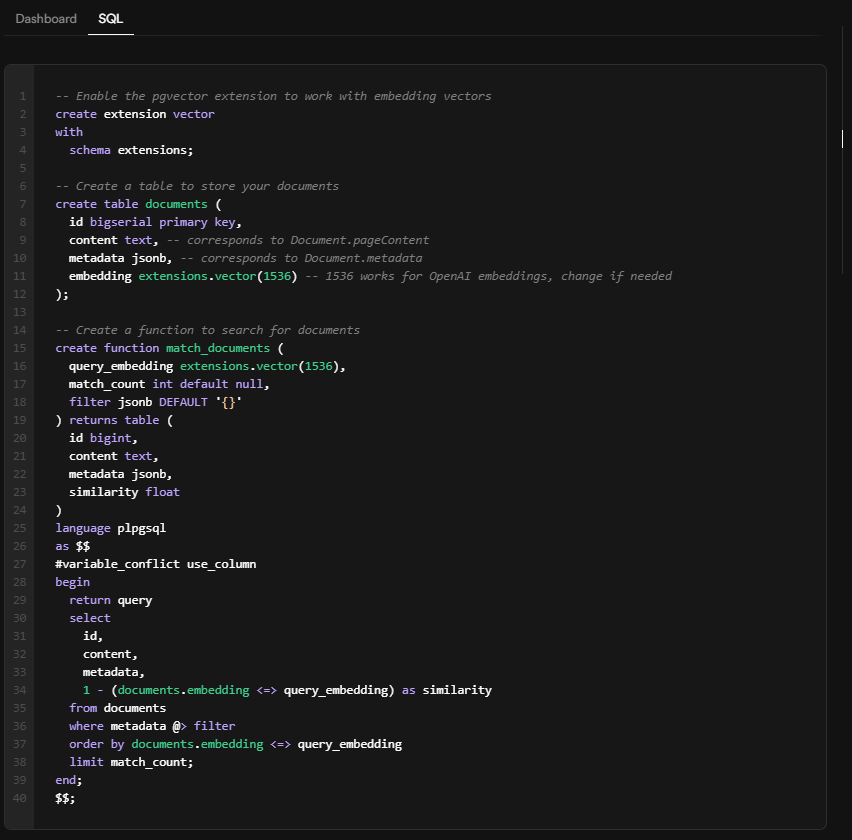

Data is embedded and stored in Supabase (pgvector).

n8n workflow:

User question

Embedding generation

Supabase Vector Store node → retrieve similar Q&A

AI Agent responds based only on retrieved results
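To make the last step concrete, here is a minimal Python sketch of "respond based only on retrieved results". The function name and prompt wording are hypothetical; in the real workflow this happens inside the n8n AI Agent node, not in custom code.

```python
def build_grounded_prompt(question, retrieved_rows):
    """Build a prompt restricted to retrieved Q&A rows (hypothetical helper).

    Returns None when nothing was retrieved, so the caller can send a
    fixed "I don't know" reply instead of letting the model improvise.
    """
    if not retrieved_rows:
        return None
    # Each row is assumed to carry the Q&A text in a "content" field,
    # matching the table structure (id, content, embedding, metadata).
    context = "\n\n".join(row["content"] for row in retrieved_rows)
    return (
        "Answer using only the Q&A entries below. "
        "If they do not cover the question, say you do not know.\n\n"
        f"{context}\n\n"
        f"User question: {question}"
    )
```

With an empty retrieval result, as in my case, this returns None, which is why the agent has nothing to answer from.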

Expected Behavior

When a user asks a question similar to one in the sheet:

Supabase Vector Store node should return matching rows

Agent should respond using retrieved answer

Actual Problem

The Supabase Vector Store node returns no output, and no error is thrown.

However:

Running the same query directly in Supabase SQL returns 4 matching results.

This suggests the data exists and similarity search works.

What I Verified

Data exists

Running SQL:

select * from documents
order by embedding <-> query_embedding
limit 4;

returns expected rows.
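One caveat about this check: `<->` is pgvector's Euclidean (L2) distance operator, while the `match_documents` function from the Supabase/LangChain quickstart, which I understand the node calls by default, orders by `<=>` (cosine distance). The sketch below, using made-up 2-dimensional vectors, shows that the two orderings agree when the vectors have unit length, which as far as I know is the case for OpenAI embeddings. So the operator difference alone probably does not explain an empty result.

```python
import math

def l2_distance(a, b):
    """Euclidean distance, what pgvector's <-> computes."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def cosine_distance(a, b):
    """1 - cosine similarity, what pgvector's <=> computes."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def rank_ids(query, rows, distance):
    """Return row ids ordered from nearest to farthest."""
    ordered = sorted(rows, key=lambda row: distance(query, row[1]))
    return [row_id for row_id, _ in ordered]

# Toy unit-length vectors standing in for real embeddings.
rows = [("a", [0.6, 0.8]), ("b", [0.8, 0.6]), ("c", [0.0, 1.0])]
query = [1.0, 0.0]

l2_order = rank_ids(query, rows, l2_distance)
cosine_order = rank_ids(query, rows, cosine_distance)
```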

Embeddings exist

Embedding column is populated.

Dimensions match the model used.
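For anyone who wants to repeat the dimension check, this is roughly how it can be done on exported rows. It is a sketch under assumptions: 1536 is the size of OpenAI's `text-embedding-3-small` and `text-embedding-ada-002` (use 3072 for `text-embedding-3-large`), and the embedding is assumed to arrive either as a list or in pgvector's text form such as `"[0.1,0.2]"`.

```python
import json

# Assumption: adjust to the embedding model actually used.
EXPECTED_DIMENSIONS = 1536

def embedding_dimensions(raw_value):
    """Count dimensions of an embedding given as a list or as pgvector text."""
    if isinstance(raw_value, str):
        # pgvector's text form "[0.1,0.2,...]" parses as a JSON array.
        values = json.loads(raw_value)
    else:
        values = list(raw_value)
    return len(values)

def find_mismatches(rows, expected=EXPECTED_DIMENSIONS):
    """Return ids of rows whose embedding is missing or has the wrong size."""
    bad_ids = []
    for row in rows:
        embedding = row.get("embedding")
        if embedding is None or embedding_dimensions(embedding) != expected:
            bad_ids.append(row["id"])
    return bad_ids
```

An empty list from `find_mismatches` means every row has an embedding of the expected size.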

Query runs successfully in Supabase

Manual query returns results.

But in n8n:

Node executes successfully

Output is empty

No error message

Questions

What could cause the Supabase Vector Store node to return empty results while SQL returns matches?

How can I debug what query n8n actually sends to Supabase?
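One way to get closer to what the node does is to call the matching function over Supabase's REST RPC endpoint with the same API key that n8n uses, instead of running raw SQL in the SQL editor. This is a sketch under assumptions I have not confirmed for this node version: that the function is named `match_documents` and takes `query_embedding`, `match_count` and `filter`, as in the Supabase/LangChain quickstart. The helper names are hypothetical.

```python
import json
import urllib.request

def build_match_request(base_url, api_key, query_embedding,
                        match_count=4, function_name="match_documents"):
    """Build a POST request to /rest/v1/rpc/<function_name>.

    Argument names follow the quickstart function signature (assumption).
    """
    url = f"{base_url.rstrip('/')}/rest/v1/rpc/{function_name}"
    payload = {
        "query_embedding": query_embedding,
        "match_count": match_count,
        "filter": {},  # no metadata filter, matching the node settings
    }
    headers = {
        "apikey": api_key,
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )

def run_match(request):
    """Send the request and return the decoded JSON rows."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

If `run_match` returns an empty list with the n8n key while the SQL editor returns rows, the difference may lie in how that key is allowed to read the table (for example row-level security) or in the function itself, rather than in the data.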

Additional Details

Using Supabase pgvector

Using OpenAI embeddings

Table structure: id, content, embedding, metadata

No filters applied in the node

Node executes without errors

Any guidance is appreciated!

Please share your workflow

Share the output returned by the last node

Information on your n8n setup

- n8n version: 2.3.2

- Database (default: SQLite): Supabase

- n8n EXECUTIONS_PROCESS setting (default: own, main):

- Running n8n via (Docker, npm, n8n cloud, desktop app): n8n cloud

- Operating system: macOS