I am trying to use n8n with Fireworks AI through the OpenAI Chat Model node, following the suggestion in this post from September 2024.

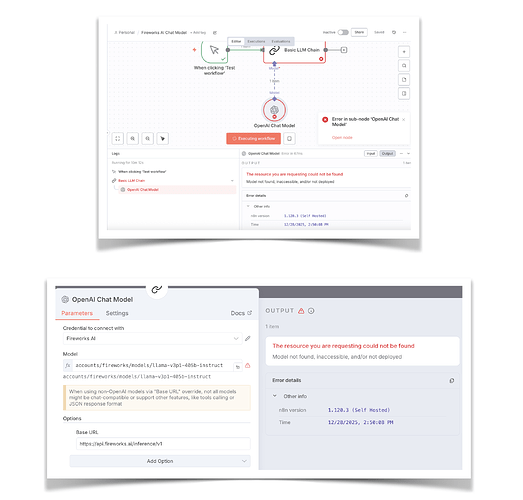

I copied the n8n workflow example from that post and substituted my Fireworks API key, but the node throws an error.

I am running n8n locally on my MacPro M2.

According to the API documentation, the endpoint should be different from what you have set up:

Serverless queries (no deployment needed; not all models are supported):

```bash
curl https://api.fireworks.ai/inference/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $FIREWORKS_API_KEY" \
  -d '{"model": "accounts/fireworks/models/deepseek-v3p1", "messages": [{"role": "user", "content": "Say hello in Spanish"}]}'
```
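For anyone who wants to test the key outside n8n, here is a minimal Python sketch (standard library only) that builds the same serverless request the curl command sends. The URL, headers, and model ID come from the curl example; the function names `build_chat_request` and `send_chat_request` are hypothetical helpers, not part of any Fireworks SDK.

```python
import json
import urllib.request

# Serverless chat-completions endpoint, copied from the curl example.
FIREWORKS_CHAT_URL = "https://api.fireworks.ai/inference/v1/chat/completions"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build, but do not send, a POST request equivalent to the curl command."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        FIREWORKS_CHAT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Same Bearer scheme as the curl -H "Authorization: ..." header.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send_chat_request(req: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response (needs network access)."""
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

To actually send it, something like `send_chat_request(build_chat_request(os.environ["FIREWORKS_API_KEY"], "accounts/fireworks/models/deepseek-v3p1", "Say hello in Spanish"))` should work. If `urlopen` raises `urllib.error.HTTPError` with status 401 (Unauthorized), the key itself was most likely rejected rather than the endpoint being wrong.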

Deployment API calls (you have to set up a deployment in Fireworks before making the request, and then use the deployment name in the request):

```bash
curl https://api.fireworks.ai/inference/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $FIREWORKS_API_KEY" \
  -d '{"model": "accounts/fireworks/models/gpt-oss-120b#<DEPLOYMENT_NAME>", "messages": [{"role": "user", "content": "Explain quantum computing in simple terms"}]}'
```
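Compared with the serverless call, only the `model` field changes: the deployment name is appended to the model path after a `#`. A small Python sketch of building that field and the request body follows. The helper names and the `my-deployment` value are hypothetical placeholders, and rejecting a `#` inside the deployment name is a safety assumption made here, not a documented Fireworks rule.

```python
import json


def deployment_model_id(base_model: str, deployment_name: str) -> str:
    """Join a model path and a deployment name with '#', as in the curl example."""
    # Assumption (not from Fireworks docs): a name containing '#' would be ambiguous.
    if not deployment_name or "#" in deployment_name:
        raise ValueError("deployment_name must be non-empty and contain no '#'")
    return f"{base_model}#{deployment_name}"


def deployment_chat_body(base_model: str, deployment_name: str, prompt: str) -> str:
    """Return the JSON body for a chat request routed to a dedicated deployment."""
    return json.dumps({
        "model": deployment_model_id(base_model, deployment_name),
        "messages": [{"role": "user", "content": prompt}],
    })
```

For example, `deployment_chat_body("accounts/fireworks/models/gpt-oss-120b", "my-deployment", "Explain quantum computing in simple terms")` produces a body whose `model` is `accounts/fireworks/models/gpt-oss-120b#my-deployment`; substitute your real deployment name.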

Pro tip: when creating an HTTP Request node in n8n, you can paste a curl command via the “Import cURL” button.

I see what I was doing wrong.

I needed to copy the API key when it was first created, not the one shown in the key listing afterward.
