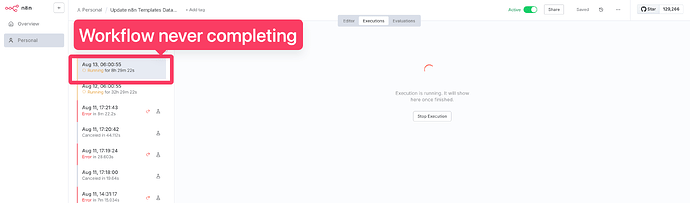

Describe the problem/error/question

- I run a workflow on a schedule trigger, on a self-hosted n8n instance deployed on Render.

- The workflow:

  - gets data from an API by looping over its pages

  - for each page, loops over the items and stores them in Supabase

- The data is correctly upserted to Supabase.

- However, the workflow never completes, which leaves connections unclosed and drives up memory usage on the server.
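
In plain code terms, the logic I am trying to express is roughly the following. This is only a minimal TypeScript sketch of the intended behaviour, not the actual n8n workflow: `fetchPage`, `upsertItems`, and `maxPages` are hypothetical names, and it assumes the API signals the last page by returning an empty page.

```typescript
// Hypothetical types and helpers: none of these names come from the real workflow.
type Item = { id: number; [key: string]: unknown };

// Fetches one page of items; an empty array is assumed to mean "no more pages".
type FetchPage = (page: number) => Promise<Item[]>;

// Upserts one batch of items (stand-in for the Supabase step).
type UpsertItems = (items: Item[]) => Promise<void>;

async function syncAllPages(
  fetchPage: FetchPage,
  upsertItems: UpsertItems,
  maxPages = 1000, // safety cap so the outer loop cannot run forever
): Promise<number> {
  let total = 0;
  for (let page = 1; page <= maxPages; page++) {
    const items = await fetchPage(page);
    if (items.length === 0) break; // explicit exit condition for the page loop
    await upsertItems(items); // inner "loop over items", done as one batch here
    total += items.length;
  }
  return total; // the run ends here once every page has been processed
}
```

What I expect is the equivalent of `syncAllPages` returning once the last page has been handled. What I observe is that the upserts all happen but the execution never reaches that final "done" state.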

What is the error message (if any)?

Please share your workflow

Share the output returned by the last node

Last node is a Loop over items

Information on your n8n setup

- n8n version: 1.105.4

- Database (default: SQLite): PostgreSQL 16

- n8n EXECUTIONS_PROCESS setting (default: own, main): main

- Running n8n via (Docker, npm, n8n cloud, desktop app): Docker on Render

- Operating system: Linux srv-d1i24cumcj7s73eg1860-6fb6896788-qg9tx 6.8.0-1029-aws #31-Ubuntu SMP Wed Apr 23 18:42:41 UTC 2025 x86_64 Linux

Debug info

core

- n8nVersion: 1.105.4

- platform: docker (self-hosted)

- nodeJsVersion: 22.17.0

- database: postgres

- executionMode: regular

- concurrency: -1

- license: enterprise (production)

- consumerId: b042f870-50f9-4ca1-b952-1638ec1a0158

storage

- success: all

- error: all

- progress: false

- manual: true

- binaryMode: memory

pruning

- enabled: true

- maxAge: 336 hours

- maxCount: 10000 executions

client

- userAgent: mozilla/5.0 (macintosh; intel mac os x 10_15_7) applewebkit/537.36 (khtml, like gecko) chrome/138.0.0.0 safari/537.36

- isTouchDevice: false

Generated at: 2025-08-13T12:42:40.748Z