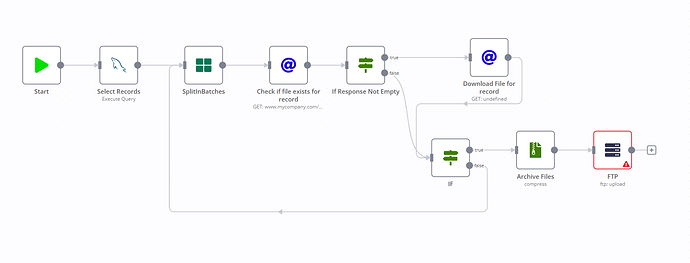

I have a workflow I need some help with: it has to archive (ZIP) many files at once. I’ve included a mockup of what this would look like. Essentially, records are added to a MySQL table, and at the end of the day the first node selects all of those records. There are usually 200 to 300 records per day. Each record goes through the first HTTP node to see whether it has a file available for download. If it does, the file is downloaded. Once all records are checked, the workflow needs to pack all files into a single archive and upload that archive to an SFTP server via the FTP node.

I’m a little lost on the best way to do this. Has anyone done something like this before?

Hi @jhambach, depending on the file size, this use case might not be suitable for handling entirely inside a workflow, as you could easily hit memory limits. So my advice would be to also consider alternatives, such as using the SSH or Execute Command nodes to download and zip a large number of files outside of n8n while still controlling the overall logic with n8n.

You can alleviate the memory pressure to some extent by setting the N8N_DEFAULT_BINARY_DATA_MODE=filesystem environment variable, which makes n8n keep binary data on disk instead of in memory.
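For the Execute Command route, a script along these lines could do the downloading and zipping outside n8n. It is only a minimal sketch: the function names `download_to_disk` and `archive_downloads`, the numeric file-name prefix, and the assumption that any failed request means "no file available" are all illustrative choices, not something from this thread. Each file is streamed to disk and then added to the archive, so memory use stays roughly constant regardless of file size.

```python
import shutil
import urllib.error
import urllib.parse
import urllib.request
import zipfile
from pathlib import Path


def download_to_disk(url: str, dest: Path, chunk_size: int = 1024 * 1024) -> bool:
    """Stream one URL to dest in chunks; return False if the file is not available."""
    try:
        with urllib.request.urlopen(url, timeout=60) as response, dest.open("wb") as out:
            shutil.copyfileobj(response, out, chunk_size)
        return True
    except (urllib.error.URLError, OSError):
        # Assumption: any request or I/O error is treated as "no file for this record".
        dest.unlink(missing_ok=True)
        return False


def archive_downloads(urls: list[str], work_dir: Path, archive_path: Path) -> list[str]:
    """Download every available URL, add it to one ZIP file, return the archived names."""
    work_dir.mkdir(parents=True, exist_ok=True)
    archived = []
    with zipfile.ZipFile(archive_path, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        for index, url in enumerate(urls):
            # Prefix with the record index so identical file names cannot collide.
            base = Path(urllib.parse.urlparse(url).path).name or "file"
            name = f"{index:04d}_{base}"
            target = work_dir / name
            if download_to_disk(url, target):
                archive.write(target, arcname=name)  # read from disk, not held in memory
                archived.append(name)
                target.unlink()  # free disk space once the file is in the archive
    return archived
```

In a workflow, the MySQL node would supply the URLs (for example written to a file or passed as arguments), the Execute Command node would run the script, and the FTP node would then upload the resulting archive.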

However, putting all memory considerations aside and looking purely at the logic, I don’t think you need a loop here. Downloading multiple files (if they exist) and then packing them into a single ZIP archive can be done like so:

This example workflow tries to download files from 3 different URLs, of which only 2 work, and then compresses them into a single ZIP file.
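The same "no loop needed" idea can be sketched outside n8n as well. The Python snippet below is not the workflow's own code, just an illustration: it takes all download results at once, skips the ones that failed, and packs the rest into one archive. The function name `zip_available` and the convention that a failed download is represented by `None` are assumptions made for the example. Note that everything is held in memory here, which is exactly the limitation discussed above.

```python
import io
import zipfile
from typing import Optional


def zip_available(files: dict[str, Optional[bytes]]) -> bytes:
    """Pack every successfully downloaded file into one in-memory ZIP archive.

    `files` maps a file name to its content, or to None when the download failed.
    """
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        for name, content in files.items():
            if content is None:
                continue  # nothing was downloaded for this entry, so skip it
            archive.writestr(name, content)
    return buffer.getvalue()
```

With three entries of which one is `None`, the returned bytes form a ZIP file containing the other two.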

The memory usage is something I didn’t consider, and I think you may be right about that. I think the SSH / Execute Command node may be a good fit for this application.

However, I appreciate you showing how to zip multiple files, that will come in handy in some other workflows I need to build. As always, you the man @MutedJam!

Glad to hear this helps

It might still work for your use case (depending on how large your files are and how much memory you have), so I’d say it is still worth a shot. Just keep an eye on the memory consumption when testing this.

I think we have 16GB, it’s probably a few hundred megabytes of data total from all the files that would be archived.

To piggyback off of this, is it possible to zip a directory through the Compression node? Or would I need to do that through the Execute Command node?

This would need the Execute Command node if you want your operating system to do it without n8n reading all the files first.

If you want to use the Compression node, you’d need to put the Read Binary Files node, which can read all files in a directory, before the Compression node.
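For the Execute Command option, one possible approach is a small Python script built on the standard library's `shutil.make_archive`, so n8n never has to load the files itself. This is a sketch under assumptions: the helper name `zip_directory` is made up for illustration, and the archive should be written outside the directory being zipped so it does not end up trying to include itself.

```python
import shutil
from pathlib import Path


def zip_directory(source_dir: Path, archive_base: Path) -> Path:
    """Zip everything under source_dir into '<archive_base>.zip' and return that path."""
    if not source_dir.is_dir():
        raise NotADirectoryError(str(source_dir))
    # make_archive appends the '.zip' suffix itself and returns the final file name.
    created = shutil.make_archive(str(archive_base), "zip", root_dir=str(source_dir))
    return Path(created)
```

Paths inside the archive are relative to `source_dir`, so subdirectories are preserved as they are on disk.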

Hi all, can anyone help update the code for the new Code node? There are now 2 options, Run Once for All Items and Run Once for Each Item, and either one produces errors with the code above. Thanks in advance!

Hi @leonardchiu As this thread is over a year old, could you create a new one that fills out our template? It would be really handy to have the workflow shared and know exactly what version of n8n you’re on.