Hello, I have some workflows that send queries to a scraper hosted on Heroku. Because the scraper uses Puppeteer (headless Chrome), only one query can run at a time due to server resource limits. In Make, I can process one request at a time: executions wait in a queue, and each one only starts when the previous one has finished. How can I do the same on self-hosted n8n?

Hi @pedrofnts!

I’m not sure I understand what you’re looking for: do you want to check the status of an execution while the workflow is still running? Just want to make sure I’m on the same page!


Could you also let us know the following:

- n8n version:

- Database (default: SQLite):

- n8n EXECUTIONS_PROCESS setting (default: own, main):

- Running n8n via (Docker, npm, n8n cloud, desktop app):

- Operating system:

Hello. Every day at 00:00 my CRM sends cards with the user’s data and the refund number to be checked. I need the workflow executions to be queued, so that each one only starts when the previous one has finished.
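To make the requirement concrete, here is a minimal Python sketch of the behaviour being asked for (plain asyncio, not n8n code): all of the night's requests go into one FIFO queue, and a single worker takes the next request only after the previous one has finished, so the scraper never receives two requests at once. The `scrape` function and the refund numbers are hypothetical placeholders for the Puppeteer call.

```python
import asyncio


async def scrape(refund_number: str, log: list[str]) -> None:
    """Hypothetical stand-in for one Puppeteer request to the Heroku scraper."""
    log.append(f"start {refund_number}")
    await asyncio.sleep(0.01)  # simulate a slow page load
    log.append(f"end {refund_number}")


async def worker(queue: asyncio.Queue, log: list[str]) -> None:
    # A single worker: take one job, finish it completely, then take the next.
    while True:
        refund_number = await queue.get()
        try:
            await scrape(refund_number, log)
        finally:
            queue.task_done()


async def run_nightly_batch(refund_numbers: list[str]) -> list[str]:
    log: list[str] = []
    queue: asyncio.Queue = asyncio.Queue()
    for number in refund_numbers:  # everything the CRM sends at 00:00
        queue.put_nowait(number)
    consumer = asyncio.create_task(worker(queue, log))
    await queue.join()  # wait until every queued job has been processed
    consumer.cancel()   # the worker is now idle, so stop it
    return log


log = asyncio.run(run_nightly_batch(["A1", "B2", "C3"]))
print(log)  # ['start A1', 'end A1', 'start B2', 'end B2', 'start C3', 'end C3']
```

Each "start" is followed by its own "end" before the next "start", which is exactly the "one only starts when the previous one ends" guarantee.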

- n8n version: 1.8.2

- Database (default: SQLite): default

- n8n EXECUTIONS_PROCESS setting (default: own, main): default

- Running n8n via (Docker, npm, n8n cloud, desktop app): Docker

- Operating system: Ubuntu 22.04

Hi @pedrofnts! Thanks for clarifying. You could use something like RabbitMQ to do this.

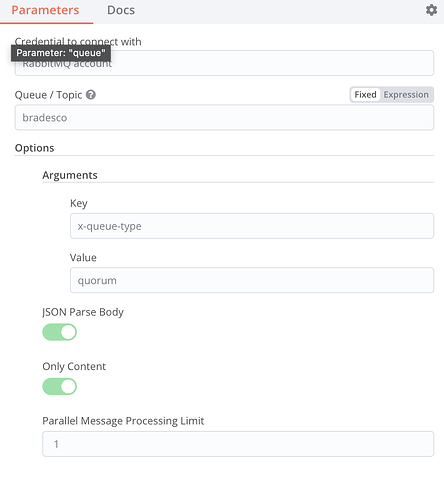


What you can do is send all requests straight to RabbitMQ and then process them one by one from that queue. The RabbitMQ Trigger node has a parameter to choose the number of parallel executions, and for your use case you can set it to 1.
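Outside n8n, the standard RabbitMQ way to get this one-at-a-time behaviour is a consumer with a prefetch count of 1 and manual acknowledgements: the broker will not deliver the next message until the current one has been acknowledged. The Python sketch below assumes the `pika` client library, a broker on `localhost`, and a hypothetical queue name, payload field (`refund_number`) and `scrape` function. It illustrates the broker-side mechanism and is not the RabbitMQ Trigger node's own implementation.

```python
import json

QUEUE_NAME = "scrape-requests"  # hypothetical queue name


def scrape(payload: dict) -> str:
    """Hypothetical stand-in for the slow Puppeteer call on Heroku."""
    return f"checked refund {payload['refund_number']}"


def on_message(channel, method, properties, body: bytes) -> None:
    # Do the slow work first...
    payload = json.loads(body)
    print(scrape(payload))
    # ...and only then acknowledge. With prefetch_count=1 the broker holds
    # back the next message until this ack arrives.
    channel.basic_ack(delivery_tag=method.delivery_tag)


def main() -> None:
    import pika  # third-party client: pip install pika

    connection = pika.BlockingConnection(pika.ConnectionParameters("localhost"))
    channel = connection.channel()
    channel.queue_declare(queue=QUEUE_NAME, durable=True)
    # At most one unacknowledged message for this consumer = one scrape at a time.
    channel.basic_qos(prefetch_count=1)
    channel.basic_consume(queue=QUEUE_NAME, on_message_callback=on_message)
    channel.start_consuming()
```

Call `main()` to start consuming once `pika` is installed and a broker is running. The key design choice is that `basic_ack` runs after the scrape finishes rather than when the message arrives; acknowledging early would let the broker deliver the next message while the scraper is still busy.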

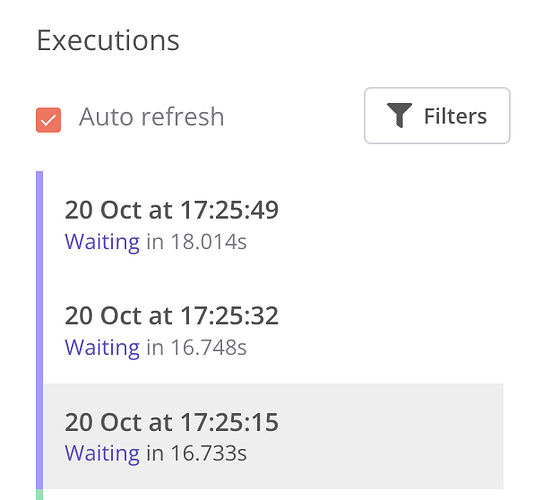

Hello. When an execution pauses at a “Wait” node in n8n, the RabbitMQ trigger starts processing the other messages in the queue. That’s not what I expect. Is there any way to fix that?

Hi @pedrofnts! I tested this both without a Wait node and with a longer Wait node (since a Wait node of 65 seconds or more behaves differently), and saw the same behaviour as you. As there isn’t a way to change how this works in the trigger node, I’m afraid this is a feature request.
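One way to picture the reported behaviour is a toy model in which the trigger frees its single slot as soon as an execution pauses at the Wait node, instead of when the execution actually finishes. This is an assumption chosen to match the symptom described above, not a description of n8n's internals, and the job names, timings and `simulate` helper are hypothetical. With `release_on_wait=False` at most one execution is ever in progress; with `release_on_wait=True` the next message starts while the previous execution is still waiting.

```python
import asyncio


async def simulate(jobs: list[str], release_on_wait: bool) -> int:
    """Return the peak number of executions in progress at the same time."""
    slots = asyncio.Semaphore(1)  # "parallel executions" set to 1
    in_progress = 0
    peak = 0

    async def execution(job: str) -> None:
        nonlocal in_progress, peak
        in_progress += 1
        peak = max(peak, in_progress)
        try:
            await asyncio.sleep(0.01)  # first scraper request
            if release_on_wait:
                slots.release()  # assumed: paused run is treated as finished
            await asyncio.sleep(0.05)  # the Wait node
            await asyncio.sleep(0.01)  # scraper request after the wait
        finally:
            in_progress -= 1
            if not release_on_wait:
                slots.release()  # slot freed only when the run really ends

    tasks = []
    for job in jobs:
        await slots.acquire()  # trigger waits for a free slot before starting
        tasks.append(asyncio.create_task(execution(job)))
    await asyncio.gather(*tasks)
    return peak


jobs = ["A1", "B2", "C3"]
strict_peak = asyncio.run(simulate(jobs, release_on_wait=False))
leaky_peak = asyncio.run(simulate(jobs, release_on_wait=True))
print(strict_peak, leaky_peak)
```

In the leaky variant, several executions end up in progress at the same time even though the limit is 1, which is the effect seen with the Wait node.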


I’ll move this over to the right section of the forums - don’t forget to upvote as well!

For the moment, I can’t think of a workaround either.

This topic was automatically closed 90 days after the last reply. New replies are no longer allowed.