I want to install an extra module, and if I understand correctly from the docs, I need to:

- install the extra module

- add an environment variable NODE_FUNCTION_ALLOW_EXTERNAL=modulename

What is not (yet) clear to me, is:

- whether I’ve got to install the extra module globally or inside n8n/node_modules folder?

- how/where to add an environment variable. I tried

export NODE_FUNCTION_ALLOW_EXTERNAL=modulename but nothing happens

I’m obviously overlooking something very simple, but I don’t know what it is … yet

I have, however, managed to get this working in both the Desktop App and Docker, so with your help, I’m sure I’ll get this working too …

BTW: I installed n8n via ‘npm install n8n -g’ and it is located in /opt/homebrew/lib/node_modules/n8n

Hey @dickhoning, I think if both your module and n8n are globally installed, simply running something like export NODE_FUNCTION_ALLOW_EXTERNAL=modulename && n8n should do the job.

I haven’t tried this first hand as I am using the cloned Git repo on my machine. So just for reference and in case anyone wants to try my exact approach out, the below steps worked for me (assuming all dev setup steps are complete):

git clone git@github.com:n8n-io/n8n.git

cd n8n

npm install hello-world-npm

lerna bootstrap --hoist

npm run build

export NODE_FUNCTION_ALLOW_EXTERNAL=* && npm start

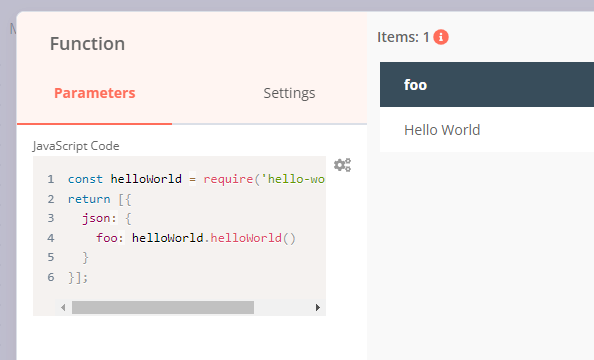

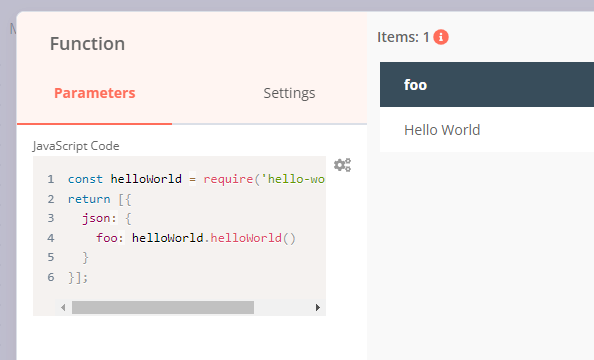

Afterwards, I could use this dummy module in n8n inside a Function node using code like this:

const helloWorld = require('hello-world-npm');

return [{

json: {

foo: helloWorld.helloWorld()

}

}];

this happens on my macOS Monterey Silicon …

ecxs@developmentecxscom ~ % export NODE_FUNCTION_ALLOW_EXTERNAL=nodemailer && npm start

npm ERR! Missing script: "start"

npm ERR!

npm ERR! Did you mean one of these?

npm ERR! npm star # Mark your favorite packages

npm ERR! npm stars # View packages marked as favorites

npm ERR!

npm ERR! To see a list of scripts, run:

npm ERR! npm run

npm ERR! A complete log of this run can be found in:

npm ERR! /Users/ecxs/.npm/_logs/2022-02-24T10_09_39_760Z-debug-0.log

ecxs@developmentecxscom ~ %

Hey @dickhoning, the example would only work if you clone the n8n repository and run npm start in there.

For the scenario you have described (and assuming both your module and n8n are installed globally) you should be able to run export NODE_FUNCTION_ALLOW_EXTERNAL=modulename && n8n instead (or export NODE_FUNCTION_ALLOW_EXTERNAL=* && n8n to allow all external modules rather than a specific one).
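The reason both forms above put the export and the n8n start on one command line is that an exported variable is only inherited by processes launched from that same shell session. Here is a minimal sketch of that behaviour, using a child `sh -c` process as a stand-in for n8n (an assumption made so the snippet runs without n8n installed; in the real setup the child command would simply be `n8n`). Quoting the `*` is a precaution so the shell never tries to expand it as a file pattern.

```shell
# Session form: every command started later from this shell inherits the variable.
export NODE_FUNCTION_ALLOW_EXTERNAL=nodemailer
session_value=$(sh -c 'printf "%s" "$NODE_FUNCTION_ALLOW_EXTERNAL"')

# One-shot form: the value applies to this single command only.
# (Stand-in child process; with n8n this would read: NODE_FUNCTION_ALLOW_EXTERNAL='*' n8n)
one_shot_value=$(NODE_FUNCTION_ALLOW_EXTERNAL='*' sh -c 'printf "%s" "$NODE_FUNCTION_ALLOW_EXTERNAL"')

echo "session form, child sees:  $session_value"
echo "one-shot form, child sees: $one_shot_value"
```

Running `export` in one terminal window and starting n8n from another (or from a launcher) would not carry the variable over, which may be why the first attempt appeared to do nothing.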

Hi @MutedJam thanks again! I’ve got it working … and here are my findings on testing my mail parsing workflow with the different n8n flavours:

- Desktop App: gets extremely slow when having to pass bigger chunks of data/text between nodes

- Docker: is crashing under the same conditions. Probably Docker for macOS Silicon is not working properly yet. It actually crashes, and when you assign more memory to Docker, it can even crash your macOS, which in my experience is quite exceptional.

- System / npm: this seems to work fine.

In each scenario, there’s an issue with the database. In my case, each time I’m sending a ~ 4 MB text file to the webhook, the database size increases by 44 MB! And if I understand correctly, that’s because a record gets created in the executions table for each node and the data gets stored as well. In my case this is 10x 4 MB.

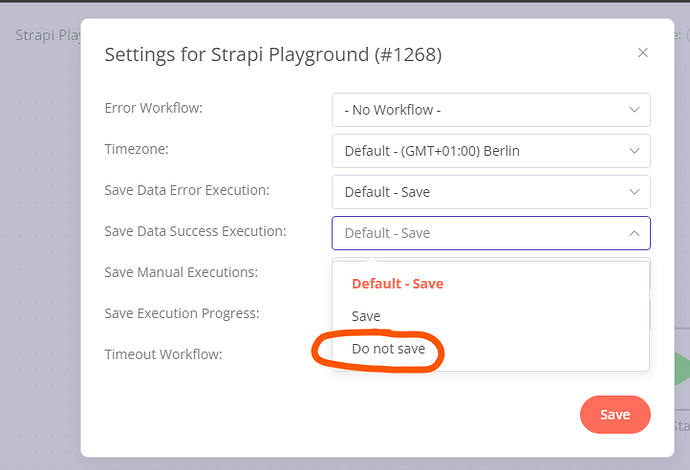

Long story short: I think my workflow could work if there were an option to not store (all of) the data in the executions table.

some additional info … I should have RTFM first

Apparently, there are some EXECUTIONS_DATA_SAVE_ options.

However, I tried with export NODE_FUNCTION_ALLOW_EXTERNAL=mailparser && export EXECUTIONS_DATA_SAVE_ON_PROGRESS off && n8n but nothing changed …

That’s some solid investigation work right there!

Assuming not all your workflows have such massive amounts of JSON data, you could try applying this setting on a workflow level through the UI:

The environment variable EXECUTIONS_DATA_SAVE_ON_PROGRESS would require a value of false (but this should already be the default), EXECUTIONS_DATA_SAVE_ON_SUCCESS would need EXECUTIONS_DATA_SAVE_ON_SUCCESS=none.
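A likely reason the earlier attempt changed nothing is the shell syntax rather than n8n: `export NAME value` (with a space) marks two names for export but assigns no value, whereas `export NAME=value` assigns and exports. A small sketch of the difference, again using a child `sh -c` process as a stand-in for n8n (an assumption so the snippet runs on its own):

```shell
# Start from a clean slate so earlier exports do not mask the effect.
unset EXECUTIONS_DATA_SAVE_ON_PROGRESS EXECUTIONS_DATA_SAVE_ON_SUCCESS

# Space form: no value is assigned, so the child process gets nothing useful.
export EXECUTIONS_DATA_SAVE_ON_PROGRESS off
space_form=$(sh -c 'printf "%s" "${EXECUTIONS_DATA_SAVE_ON_PROGRESS:-<empty>}"')

# NAME=value form: the child process receives the values.
export EXECUTIONS_DATA_SAVE_ON_PROGRESS=false
export EXECUTIONS_DATA_SAVE_ON_SUCCESS=none
equals_form=$(sh -c 'printf "%s/%s" "$EXECUTIONS_DATA_SAVE_ON_PROGRESS" "$EXECUTIONS_DATA_SAVE_ON_SUCCESS"')

echo "space form:  $space_form"
echo "equals form: $equals_form"
```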

Hidden on the surface  … and uh … after resetting the EXECUTIONS_DATA_SAVE_ON_ settings via:

export NODE_FUNCTION_ALLOW_EXTERNAL=mailparser && EXECUTIONS_DATA_SAVE_ON_ERROR=all && EXECUTIONS_DATA_SAVE_ON_SUCCESS=all && EXECUTIONS_DATA_SAVE_ON_PROGRESS=false && n8n

Everything appears to work fine and node is hardly using any CPU. So my conclusion is: with “Do not save” selected for Save Data Success Execution, you keep your database from blowing out of proportion and put less strain on your CPU.
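One thing that may be worth double-checking in a chained command like the one above: only the first assignment is prefixed with `export`. In a POSIX shell, a bare `NAME=value` after `&&` sets a shell variable that is not passed on to child processes, unless that name was already exported earlier in the same session. A sketch of the difference, with a child `sh -c` process standing in for n8n (an assumption so the snippet runs without n8n):

```shell
# Clean slate: make sure the name is not already exported from earlier commands.
unset EXECUTIONS_DATA_SAVE_ON_SUCCESS

# Chained form: only the first assignment carries `export`.
export NODE_FUNCTION_ALLOW_EXTERNAL=mailparser && EXECUTIONS_DATA_SAVE_ON_SUCCESS=all
chained=$(sh -c 'printf "%s" "${EXECUTIONS_DATA_SAVE_ON_SUCCESS:-<not inherited>}"')

# Single export with several NAME=value pairs: all of them reach the child.
export NODE_FUNCTION_ALLOW_EXTERNAL=mailparser EXECUTIONS_DATA_SAVE_ON_SUCCESS=all
single_export=$(sh -c 'printf "%s" "${EXECUTIONS_DATA_SAVE_ON_SUCCESS:-<not inherited>}"')

echo "chained form:  $chained"
echo "single export: $single_export"
```

If the chained variables never reached n8n, the improvement described here would come from the workflow-level setting in the UI rather than from the environment variables.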

Awesome, glad to hear this works!
