In standard LangChain code, it’s possible to pass additional parameters to the chat model through extra_body, using code like this:

    import os

    from langchain.chat_models import ChatOpenAI
    from langchain.schema import HumanMessage, SystemMessage

    # Placeholder key; the request goes to the endpoint set in openai_api_base.
    os.environ["OPENAI_API_KEY"] = "anything"

    chat = ChatOpenAI(
        openai_api_base="http://0.0.0.0:4000",
        model="zephyr-beta",
        # Extra JSON properties to send along with the request body.
        extra_body={
            "fallbacks": ["gpt-3.5-turbo"]
        },
    )

    messages = [
        SystemMessage(
            content="You are a helpful assistant that im using to make a test request to."
        ),
        HumanMessage(
            content="test from litellm. tell me why it's amazing in 1 sentence"
        ),
    ]

    response = chat(messages)
    print(response)

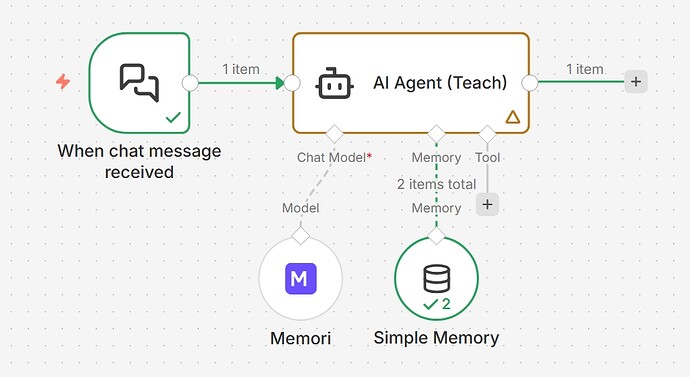

I’d like to be able to pass arbitrary JSON in the extra_body parameter of the OpenAI Chat Model node in an n8n workflow, but the current node doesn’t seem to support this. Could this parameter be added to the node? Thanks!
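To illustrate what I have in mind, here is a rough sketch of the behaviour I'd expect. It is not n8n or LangChain code: `build_request_body` and the "Extra Body" field are hypothetical names I made up, and I'm assuming the extra keys end up merged into the top level of the request JSON, which is my understanding of how extra_body behaves in the OpenAI Python client.

```python
import json


def build_request_body(model, messages, extra_body=None):
    """Build a chat-completions style payload and merge extra_body at the top level."""
    body = {"model": model, "messages": messages}
    # Copy extra keys in as-is. In this sketch they never override the
    # standard fields ("model", "messages"); that is an assumption.
    for key, value in (extra_body or {}).items():
        body.setdefault(key, value)
    return body


# Hypothetical value a user would type into an "Extra Body" JSON field on the node.
extra_body_json = '{"fallbacks": ["gpt-3.5-turbo"]}'

payload = build_request_body(
    model="zephyr-beta",
    messages=[{"role": "user", "content": "test from litellm"}],
    extra_body=json.loads(extra_body_json),
)
print(json.dumps(payload, indent=2))
```

The idea is that the node would only need to accept a JSON string, parse it, and hand it to the model as extra_body, without having to know anything about the individual keys.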

Ref: