We have two open topics about a serious problem in the Postgres node that so far have no solution, and the error has been reported by at least 5 people. I am very grateful for n8n, but is there any interest in solving this situation?

Hey @admdiegolima,

We aim to fix bugs rather than leave them. You can see this in one of the threads you linked to, which led to the new one being created, as we have already fixed a similar issue once.

In the second post I am still not able to reproduce this, which is a bit of an issue, and I am still looking for a workflow that can reproduce the problem.

At the moment I have had a workflow running for over a week that runs a couple of database queries every couple of minutes, with no luck. Do you happen to have a flow that reproduces the issue?

I also noticed that one user fixed this by tweaking the settings on the Postgres server.

Hello, sorry to open another topic, but as the others had gone some time without anyone from the n8n team replying, I believed it had been forgotten.

As for changing the Postgres settings in the .env, as mentioned in the last message in the topic below, it didn’t work for me.

Anyway, I’m going to share as much information as possible here so that it can help with the diagnosis.

1 - This problem has been happening since before version 1.x

2 - I use n8n in queue mode

3 - I use Docker Swarm

4 - Postgres stack after changing settings.

postgres after the configuration change · GitHub

5 - Postgres stack before changing settings.

gist:da51db852888dffdc5a4d0cc2b120299 · GitHub

6 - My n8n stack

gist:6396b5599909b7abfd84ccfedf0b47c3 · GitHub

7 - If you click on “Retry with original workflow (from node with error)”, the flow that failed works perfectly.

8 - The error happens in both complex and simple flows.

9 - The higher the “Retry On Fail” setting, the less often the error happens, but even with the maximum of 5/5000 the error still occurs.
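For anyone following along, point 9 can be pictured with a minimal Python sketch of a retry-on-fail wrapper. It assumes that "5/5000" means 5 tries with a 5000 ms wait between them. The names `retry_on_fail` and `make_flaky_insert` are hypothetical, and this is not n8n's implementation, only an illustration of the behaviour described.

```python
import time


def retry_on_fail(operation, max_tries=5, wait_ms=5000, sleep=time.sleep):
    """Call operation() until it succeeds or max_tries attempts have failed."""
    last_error = None
    for attempt in range(1, max_tries + 1):
        try:
            return operation()
        except Exception as error:  # a real node would catch narrower errors
            last_error = error
            if attempt < max_tries:
                sleep(wait_ms / 1000)  # wait before the next attempt
    raise last_error


def make_flaky_insert(failures_before_success):
    """Hypothetical stand-in for an insert that fails intermittently."""
    state = {"calls": 0}

    def flaky_insert():
        state["calls"] += 1
        if state["calls"] <= failures_before_success:
            raise ConnectionError("simulated intermittent failure")
        return state["calls"]

    return flaky_insert
```

If each attempt failed independently with probability p (an assumption, not something measured in this thread), all n tries would fail with probability p^n. That would explain why more retries make the error rarer without making it disappear.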

My inclination is that this is a Postgres issue. My server is more stable now, though not perfect, but I also get the same error on my much more powerful and highly optimised Odoo server (though via the Odoo node, not the Postgres node).

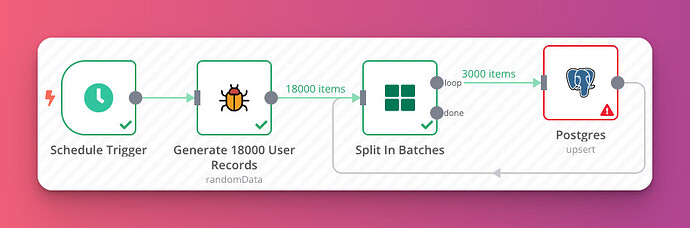

If you want to reproduce it yourself, it seems to flare up when I’m pushing large amounts of data, but in small chunks. It’s definitely intermittent, and a combination of tweaking the postgresql server / setting all my postgres nodes in this kind of situation to “retry” seems to give it enough chances to get through.

Ie:

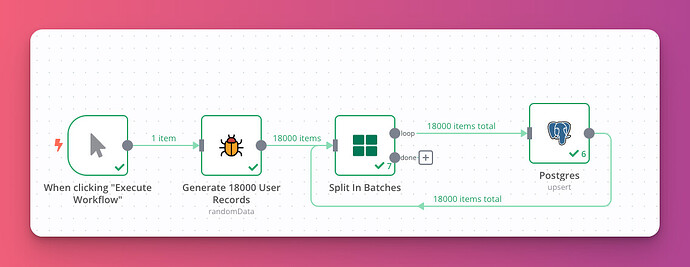

18k lines of data (either low numbers of fields or high)

Split in batches - 3000 per loop

Postgres (insert)

Generally at least one of those inserts will fail for me, unless I use retry, in which case it’s getting through on most runs.
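The setup described above can be approximated outside n8n with a short Python sketch: 18,000 rows split into batches of 3,000 gives six insert calls, any one of which may fail. Here `insert_batch` is a hypothetical callback standing in for the Postgres insert, and `insert_all` is an illustrative helper, not part of n8n.

```python
def split_in_batches(rows, batch_size):
    """Yield consecutive slices of rows with at most batch_size items each."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, len(rows), batch_size):
        yield rows[start:start + batch_size]


def insert_all(rows, insert_batch, batch_size=3000):
    """Insert batch by batch and return the indexes of batches that failed."""
    failed = []
    for index, batch in enumerate(split_in_batches(rows, batch_size)):
        try:
            insert_batch(batch)
        except Exception:
            failed.append(index)  # record and carry on, so later batches still run
    return failed
```

Recording which batch failed, rather than stopping at the first error, makes it easier to see whether failures cluster on a particular batch or are spread at random.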

Morning,

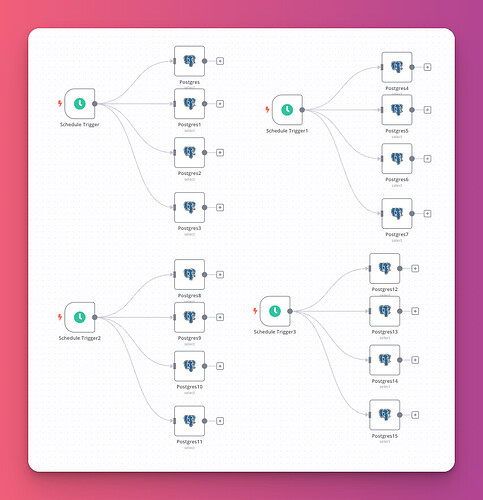

It looks like there are different causes. I suspect the issue is down to a mix of things, and the configuration of Postgres to be able to handle the connections is possibly one of them. At the moment my test workflow looks like the below. Each trigger runs every 30 seconds and the select query pulls out all the data from my n8n execution history, which is a couple of hundred records.

@admdiegolima when you see the error, is it always when you do a retry? While we may not reply to an issue, it doesn’t mean we are not looking. Typically we are in the background trying to reproduce it and looking for that key bit of info that is missing.

@Luke_Austin that is useful info and would point to there being something in Postgres, but I can give your workflow idea a bash later today to see if it triggers the same error.

@Luke_Austin as a quick test, this is the workflow I now have, which I will be using in queue mode.

I guess in theory it should just be a case of scheduling it and seeing what happens. For science, the workflow is below. The Postgres database I am using is an unmodified Postgres 14 container.

This has an internal id of NODE-728

I don’t know if I understood the question correctly, but the error here happens with or without Retry On Fail. Enabling Retry On Fail only makes it happen less often.

Also, if a flow fails and I rerun it manually, it works correctly.

I spent the whole of Saturday trying to reproduce the error so that I could send it to you for testing. In my case it doesn’t take many items to trigger the error; many times it fails with only 1 item.

I don’t know if there’s any connection, but I noticed that when I sent the data from Postman to n8n, the error rate dropped to less than 1%, whereas if I sent it from one n8n flow to another, from an HTTP Request node to a Webhook, it happens 50% of the time without Retry On Fail enabled.

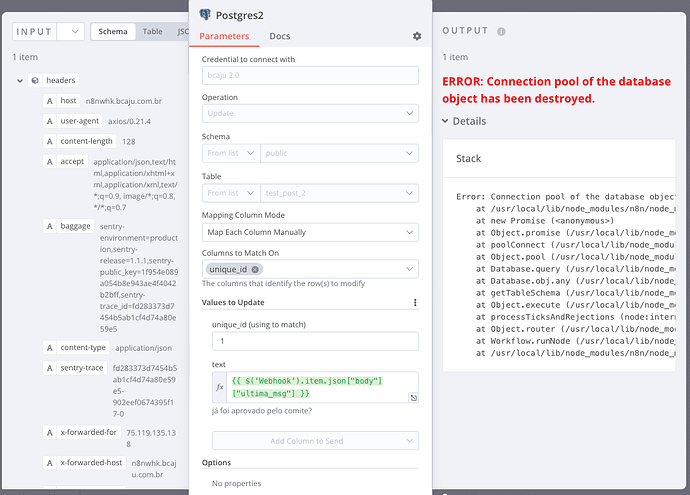

I made a new table in Postgres with only 2 columns, and the node in n8n only updated one of them.

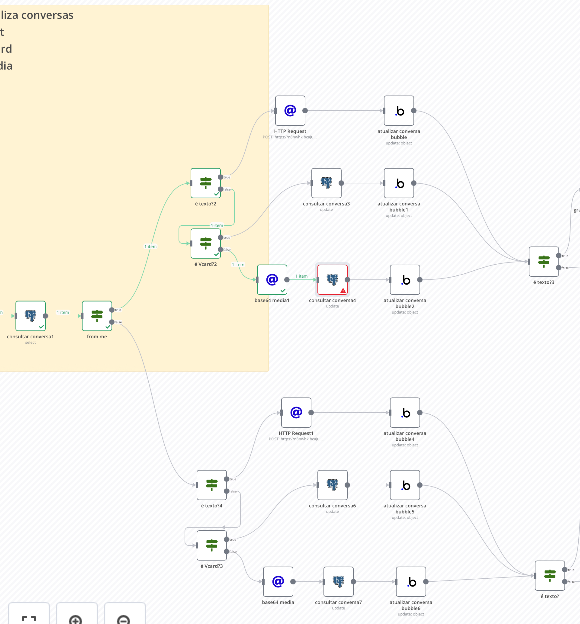

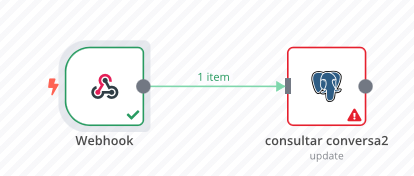

I was able to reproduce the error even sending it through postgres, setting up the flow as follows.

I don’t know what the connection is, but when I put it that way with multiple “if” nodes, it happened a lot more often.

I thought it would.

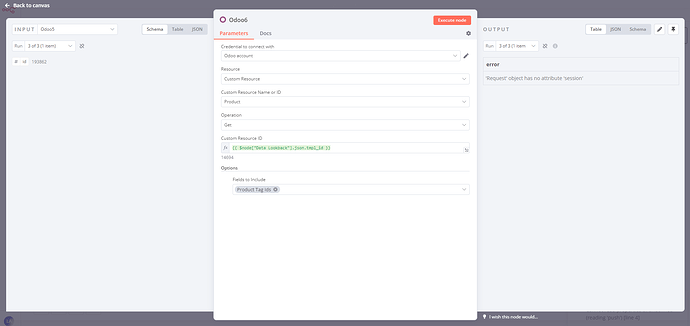

I’m seeing the same (or similar) behaviour on my PostgreSQL-based Odoo implementation.

Error code there is:

ERROR: ‘Request’ object has no attribute ‘session’

‘Request’ object has no attribute ‘session’

This seems to trace back to the same issue, and it’s intermittent too. I can’t find a documented bug on the Postgres side, but I’m still inclined to say it’s there, not on n8n’s end.

A fix for this can be found in the PR below and this will be released soon.

@Jon - so it was an error on the n8n end?

Will this fix port over to the Odoo node (if it is indeed the same issue)? Or, as it’s passing through the Odoo API before Postgres, is it something I can pass over to the Odoo team?

Hey @Luke_Austin,

Yeah, it looks like in some cases we were not correctly closing the connection. This change will only be for Postgres-based nodes. Odoo is based on HTTP requests, so it will likely be something else.
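As a general illustration of how an unclosed connection can cause intermittent failures, here is a minimal Python sketch. It is not the actual change in the PR. `FakePool`, `run_query_leaky` and `run_query_safe` are hypothetical names, and the pool is a toy stand-in for a real database connection pool with a fixed limit.

```python
class FakePool:
    """Hypothetical stand-in for a database connection pool with a hard limit."""

    def __init__(self, max_connections):
        self.max_connections = max_connections
        self.in_use = 0

    def acquire(self):
        if self.in_use >= self.max_connections:
            raise ConnectionError("pool exhausted: no free connections")
        self.in_use += 1
        return self

    def release(self):
        self.in_use -= 1


def run_query_leaky(pool, query):
    """Releases the connection only on success, so a failed query leaks it."""
    pool.acquire()
    result = query()
    pool.release()
    return result


def run_query_safe(pool, query):
    """Releases the connection in `finally`, whether or not the query fails."""
    pool.acquire()
    try:
        return query()
    finally:
        pool.release()
```

In the leaky version every failed query keeps one connection occupied, so later and unrelated queries can start failing once the limit is reached. That kind of pattern would look intermittent from the workflow's point of view.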

Ah, so nothing to do with n8n in that instance - I’ll suggest to Odoo tech support that their API might be doing the same, and they’ll ignore me because I’m self hosting.

Thus is the way of the world… Thanks for actioning the fix on Postgresql anyway!

A new version of n8n has been released, which includes GitHub PR 7074.
