Bug?

I set up a workflow timeout, expecting that executions would no longer run longer than the timeout, but I still have executions that last longer than it.

As you can see in this screenshot, the timeout setting for the whole workflow is 30 seconds:

But at the same time, the following execution shows one specific node (part of the parent workflow) running until it reached its own timeout of 300 seconds.

I would have expected this workflow to stop itself after 30 seconds instead of letting the nodes inside it keep going.

Question

What is the expected behaviour of the workflow timeout? I would have guessed it throws an error and kills the current execution, but it doesn’t seem to work that way.

Thank you all for the great work done here!

Information on your n8n setup

- n8n version: 0.193.5
- Database you’re using (default: SQLite): PostgreSQL
- Running n8n with the execution process [own(default), main]: Worker mode
- Running n8n via [Docker, npm, n8n.cloud, desktop app]: Kubernetes on-prem installation

Hi @William_Guerzeder, as far as I know, the workflow timeout is only checked between nodes (similar to the behaviour when you manually stop an execution). A node that is already running is allowed to finish before the timeout takes effect.
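To illustrate the between-nodes behaviour, here is a minimal TypeScript model. It is a hypothetical simulation, not n8n's actual code: `SimNode`, `runWithBetweenNodeTimeout` and the node durations are made up, and time is counted on a simulated clock rather than measured. The deadline is only compared before a node starts, so a node that is already running always finishes.

```typescript
// Hypothetical model of a workflow runner; none of these names come from n8n.
interface SimNode {
  name: string;
  durationSec: number; // how long the node runs once started (simulated)
}

interface RunResult {
  status: "finished" | "timed out";
  executed: string[];
  elapsedSec: number;
}

function runWithBetweenNodeTimeout(nodes: SimNode[], timeoutSec: number): RunResult {
  let elapsedSec = 0;
  const executed: string[] = [];
  for (const node of nodes) {
    // The timeout is only checked here, before the next node starts.
    if (elapsedSec >= timeoutSec) {
      return { status: "timed out", executed, elapsedSec };
    }
    // Once started, the node runs to completion, however long it takes.
    elapsedSec += node.durationSec;
    executed.push(node.name);
  }
  return { status: "finished", executed, elapsedSec };
}

// Usage: a 30-second workflow timeout and a node that runs for 300 seconds.
const result = runWithBetweenNodeTimeout(
  [
    { name: "Trigger", durationSec: 1 },
    { name: "Delete file", durationSec: 300 },
    { name: "Notify", durationSec: 1 },
  ],
  30,
);
console.log(result.status, result.executed, result.elapsedSec);
```

With those numbers, the simulated execution is only stopped after the slow node: `status` is `"timed out"`, `executed` is `["Trigger", "Delete file"]` and `elapsedSec` is 301, even though the timeout is 30.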

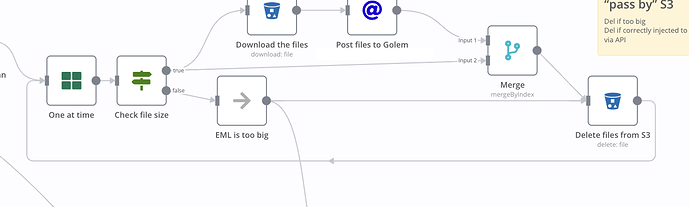

Assuming the operation is slow because you are deleting a large number of files, you could consider breaking your data down into smaller batches using the Split In Batches node. That way, n8n checks whether the workflow timeout has been reached after each batch rather than only after all items have been processed.

Consider these two examples:

No batching

This workflow will only be stopped once all items have been processed.

Batches of 10

This workflow can be stopped after every batch of 10 items, like so:
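The same idea can be sketched as another hypothetical TypeScript simulation (`processInBatches`, the item count and the per-item cost are assumptions, not n8n internals). The timeout check runs once per batch, so with one big batch it can only fire after every item is done, while with batches of 10 it can fire after every 10 items.

```typescript
// Hypothetical model of batching; not n8n's actual implementation.
interface BatchRunResult {
  status: "finished" | "timed out";
  processedItems: number;
  elapsedSec: number;
}

function processInBatches(
  itemCount: number,
  perItemSec: number,
  batchSize: number,
  timeoutSec: number,
): BatchRunResult {
  if (batchSize < 1) throw new Error("batchSize must be at least 1");
  let elapsedSec = 0;
  let processedItems = 0;
  while (processedItems < itemCount) {
    // The timeout is checked between batches, never inside one.
    if (elapsedSec >= timeoutSec) {
      return { status: "timed out", processedItems, elapsedSec };
    }
    const batch = Math.min(batchSize, itemCount - processedItems);
    elapsedSec += batch * perItemSec; // the whole batch runs uninterrupted
    processedItems += batch;
  }
  return { status: "finished", processedItems, elapsedSec };
}

// Usage: 100 items at 1 simulated second each, with a 30-second timeout.
console.log(processInBatches(100, 1, 100, 30)); // no batching
console.log(processInBatches(100, 1, 10, 30)); // batches of 10
```

With one batch of 100, the run reports `finished` after 100 simulated seconds; with batches of 10, it stops with `timed out` after 30 items and 30 seconds. Smaller batches mean more frequent checks, at the cost of some per-batch overhead.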

Hope this helps!

Thank you very much for your answer.

OK, now I understand better why executions keep running longer than the defined timeout.

I had already thought of Split In Batches.

But sometimes one node runs forever because something went wrong, and that’s what happened with my “delete file” node.

It’s disappointing that I can’t easily apply the “fail fast and retry” principle by setting up timeouts.

Thanks a lot for your time. I’ll look into working around the S3 default timeout using the HTTP node, as you advised in my other ticket.

Cheers, have a great day.

William

No worries @William_Guerzeder, I can see how frustrating this is. Perhaps you could raise separate feature requests here on the forum to address your pain points (something like “have timeout interrupt running node execution” and “add timeout setting to all nodes”)? This would let other users weigh in and allow the team to consider implementing them going forward.
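For anyone writing up the first of those feature requests, here is a rough sketch of what interrupting a running step could look like in plain TypeScript on Node.js. `AbortController` and `Promise.race` are standard, but `withTimeout` and `slowDelete` are hypothetical names, and this is not how n8n behaves today. The wrapper fails fast as soon as the deadline passes and signals the step to abort; the step only actually stops if it listens to that signal.

```typescript
// Hypothetical fail-fast wrapper: rejects if `task` has not settled within `ms`
// and asks the task to stop through an AbortSignal.
async function withTimeout<T>(task: (signal: AbortSignal) => Promise<T>, ms: number): Promise<T> {
  const controller = new AbortController();
  let timer: ReturnType<typeof setTimeout> | undefined;
  const timeout = new Promise<never>((_, reject) => {
    timer = setTimeout(() => {
      // Reject first so the timeout error wins the race, then signal the task.
      reject(new Error(`timed out after ${ms} ms`));
      controller.abort();
    }, ms);
  });
  try {
    return await Promise.race([task(controller.signal), timeout]);
  } finally {
    clearTimeout(timer); // avoid a dangling timer when the task finishes first
  }
}

// Hypothetical slow step (300 ms stands in for a node stuck until its own timeout).
// It cooperates with cancellation by listening to the abort signal.
function slowDelete(signal: AbortSignal): Promise<string> {
  return new Promise<string>((resolve, reject) => {
    const work = setTimeout(() => resolve("deleted"), 300);
    signal.addEventListener(
      "abort",
      () => {
        clearTimeout(work);
        reject(new Error("aborted"));
      },
      { once: true },
    );
  });
}

// Usage: a 30 ms budget for a step that would otherwise take 300 ms.
withTimeout(slowDelete, 30)
  .then((value) => console.log("finished:", value))
  .catch((err: Error) => console.log("failed fast:", err.message));
```

A caller could then retry the failed step, which is the “fail fast and retry” pattern mentioned above. A step that ignores the signal would still be reported as timed out, but its work would keep running in the background.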